Think back to the early days of the web. It wasn’t the speed of a single connection that changed the world; it was the moment the wires finally linked up and the protocols became invisible. Quantum computing is hitting that exact same milestone right now. We’ve spent nearly a decade obsessed with the vanity of qubit counts—like counting horsepower in a car that has no roads. But in 2026, the “horsepower” era is over. We’ve finally started building the roads.

In the labs, the struggle has moved past the frantic effort to keep qubits alive for a mere heartbeat. Now the mission is orchestration: teaching these fragile processors to sync in real time with the GPU power waiting across the room. It’s a gritty, high-stakes transition from “maybe it works” to “here is the infrastructure.”

We are seeing the birth of the hybrid era, where quantum processors are no longer isolated science experiments but integrated accelerators tucked inside standard data centers. This guide isn’t about distant promises or “supremacy” headlines. It’s a map of the convergence happening under our feet.

From logical qubits that have finally learned to self-correct to the live quantum signaling experiments currently turning Berlin’s existing fiber into a playground for secure traffic, the 17 advancements below represent the moment quantum became a pillar of global strategy. Let’s look at how the stack is actually coming together.

17 Key Quantum Computing Breakthroughs and 2026 Quantum Networking Milestones

Reliability and Fault-Tolerant Progress

- Quantinuum’s Helios launch paired high-fidelity operations with Guppy-driven real-time control for dynamic circuits. Helios wasn’t built for a one-off demo; it was engineered for the grueling demands of sustained, repeatable performance.

- Google’s push toward error correction is anchored by dynamic surface code demonstrations. These trials do more than reduce hardware demands; they widen the range of chip layouts and gate sets that can support error correction.

- IBM has charted a definitive course toward fault tolerance, setting explicit milestones for modular quantum operations and scale through its 2029 roadmap.

- Microsoft is betting heavily on high-performing logical qubits, treating them as the metric that truly matters for running algorithms that solve real problems.

- Microsoft and Atom Computing reported entangling 24 logical qubits in a neutral-atom system positioned for reliability work.

- QuEra described algorithmic fault tolerance as a way to cut runtime overhead rather than just adding more hardware.

Quantum Networking and Signaling Milestones

- Device-independent quantum key distribution (DI-QKD) reached 100 km in a peer-reviewed result using single-atom nodes.

- Memory-to-memory entanglement that lasts long enough to be useful over 10 km of fiber provides a repeater-grade building block.

- Microwave-to-optical bridging improved with low-energy-loss transducer designs aimed at connecting hardware that speaks different “signal languages.”

- The UK’s long-haul networking testbed includes measured key rates and loss budgets across the Cambridge–Bristol link.

Hybrid Integration and Commercial Ecosystems

- NVQLink’s rollout is supported by software primitives designed to fuse QPUs directly into GPU-accelerated supercomputing workflows.

- Quantum Machines formalized microsecond feedback loops through its real-time NVQLink control integration for hybrid error-correction cycles.

- Denmark’s QuNorth initiative describes Magne as a Level 2 logical-qubit system, with error correction at the center of its value proposition.

- Maryland’s Capital of Quantum Initiative is building an ecosystem where research, talent, and commercialization co-locate.

- IonQ and Cambridge set up a commercialization-focused innovation center designed to turn prototypes into deployable tools.

- Florida Atlantic University’s $20M agreement for an on-site D-Wave Advantage2 deployment carries significant workforce implications.

- Photonic scaling depends on practical interconnect work, including the Xanadu–Corning fiber collaboration, which targets optical loss and packaging constraints.

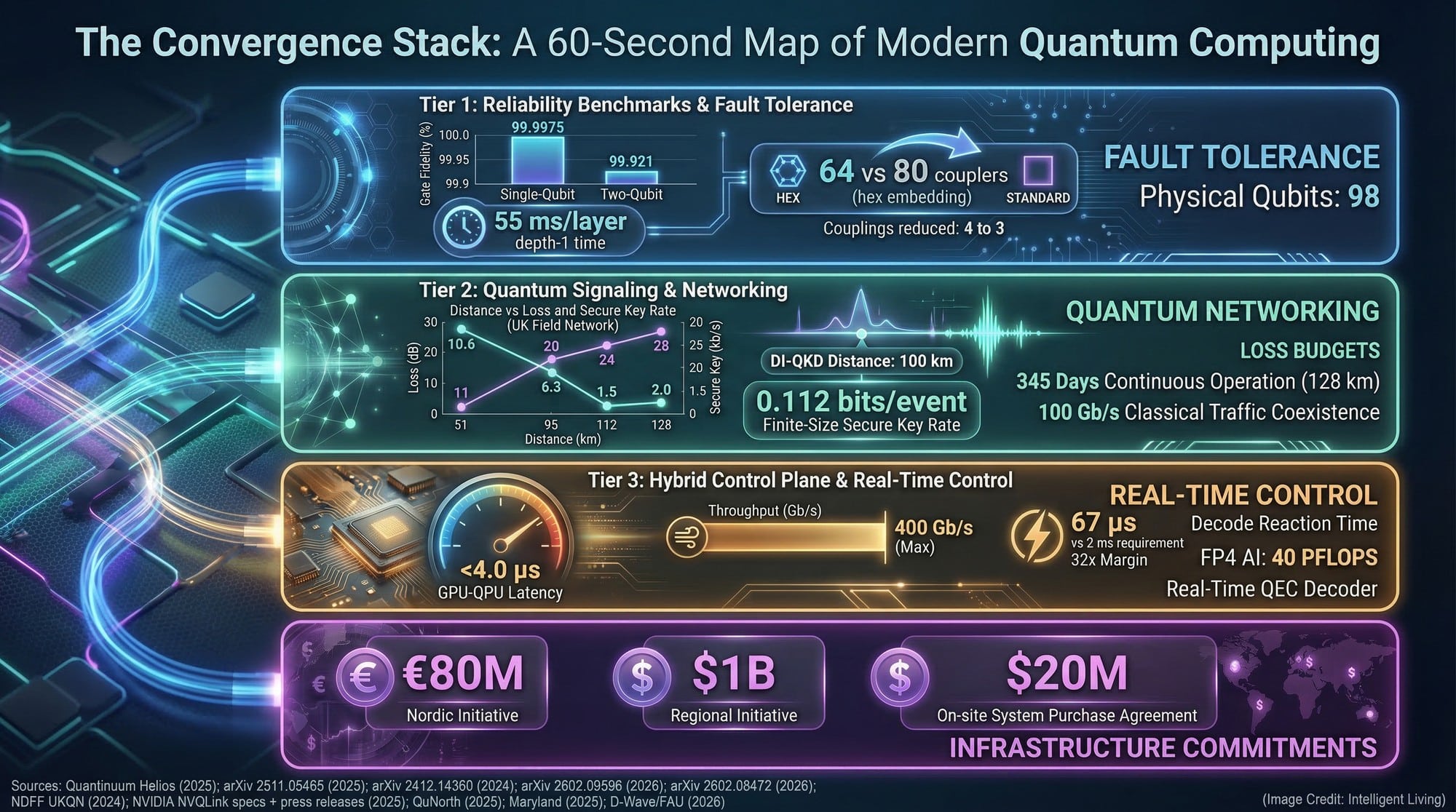

The Convergence Stack: A 60-Second Map of Modern Quantum Computing

Quantum progress in 2026 is best understood as a stack.

Layer 1: Logical Qubits and Fault Tolerance

A qubit is the basic unit of quantum information. A logical qubit acts as a shielded version of that unit, constructed from multiple physical qubits so that errors can be caught and corrected mid-flight. IBM’s blueprint for practical fault tolerance lays out a detailed plan for moving protected qubits out of theory and into scalable reality, treating modularity and more efficient error correction as the essential path to scale.
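To make the “shielded unit” idea concrete, here is a minimal sketch, not any vendor’s scheme: a classical simulation of the three-bit repetition code, the simplest ancestor of the codes behind logical qubits. It assumes independent bit-flip errors with probability `p` on each copy and decodes by majority vote. Real quantum codes must also catch phase errors and cannot read data qubits directly, and the function names here are hypothetical.

```python
import random


def encode(bit: int, n: int = 3) -> list[int]:
    """Copy one logical bit onto n physical bits (repetition encoding)."""
    return [bit] * n


def apply_noise(bits: list[int], p: float, rng: random.Random) -> list[int]:
    """Flip each physical bit independently with probability p."""
    return [b ^ 1 if rng.random() < p else b for b in bits]


def decode(bits: list[int]) -> int:
    """Majority vote: correct whenever fewer than half of the copies flipped (n odd)."""
    return int(sum(bits) > len(bits) // 2)


def logical_error_rate(p: float, n: int = 3, trials: int = 100_000, seed: int = 1) -> float:
    """Monte Carlo estimate of how often the decoded bit differs from the encoded one."""
    rng = random.Random(seed)
    failures = 0
    for _ in range(trials):
        sent = rng.randint(0, 1)
        received = decode(apply_noise(encode(sent, n), p, rng))
        failures += received != sent
    return failures / trials


def exact_rate_n3(p: float) -> float:
    """Closed form for n = 3: the vote fails when two or three copies flip."""
    return 3 * p**2 * (1 - p) + p**3


if __name__ == "__main__":
    for p in (0.01, 0.05, 0.1):
        print(f"p = {p}: simulated {logical_error_rate(p):.5f}, exact {exact_rate_n3(p):.5f}")
```

At `p = 0.01` the exact logical rate is about 3 × 10⁻⁴, more than 30 times better than an unprotected bit. Push `p` past 0.5 and the vote starts making things worse, a toy version of the threshold behavior that real codes share.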

Fault tolerance is the difference between a prototype that glitches and a tool that finishes the job. Noise is the constant enemy; without shielded qubits, quantum states dissolve before the math is done. The industry has effectively stopped counting raw physical qubits and started treating logical reliability as the only meaningful scoreboard.

Layer 2: Quantum Signaling and Communication

Think of quantum signaling as the backbone that allows these systems to speak to one another, sharing entanglement or swapping secure data across vast distances. This includes device-independent quantum key distribution, quantum repeaters, and photon-based networking.

A peer-reviewed Science result on device-independent quantum key distribution over 100 kilometers used single-atom nodes linked by long fiber. This milestone pulls ultra-secure key exchange out of the lab and into distances that look like real city-to-city infrastructure.
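Device-independent QKD does not trust the hardware; it certifies security from a Bell-inequality violation visible in the measurement statistics alone. A minimal sketch, assuming an ideal singlet pair and the textbook CHSH measurement angles (illustrative choices, not the cited experiment’s settings; helper names are hypothetical), computes the quantum CHSH value and compares it with the best any local, predetermined strategy can do:

```python
import math
from itertools import product

# Two-qubit singlet (|01> - |10>) / sqrt(2) in the basis |00>, |01>, |10>, |11>.
SINGLET = [0.0, 1 / math.sqrt(2), -1 / math.sqrt(2), 0.0]


def observable(theta: float) -> list[list[float]]:
    """Spin measurement along angle theta in the X-Z plane: cos(theta) Z + sin(theta) X."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, s], [s, -c]]


def kron(a: list[list[float]], b: list[list[float]]) -> list[list[float]]:
    """Kronecker product of two 2x2 matrices, giving a 4x4 matrix."""
    return [[a[i // 2][j // 2] * b[i % 2][j % 2] for j in range(4)] for i in range(4)]


def correlation(theta_a: float, theta_b: float, state: list[float] = SINGLET) -> float:
    """Expectation value <psi| A (x) B |psi> for a real state vector."""
    m = kron(observable(theta_a), observable(theta_b))
    return sum(state[i] * m[i][j] * state[j] for i in range(4) for j in range(4))


def chsh(a0: float, a1: float, b0: float, b1: float) -> float:
    """CHSH combination S = E(a0,b0) - E(a0,b1) + E(a1,b0) + E(a1,b1)."""
    return (correlation(a0, b0) - correlation(a0, b1)
            + correlation(a1, b0) + correlation(a1, b1))


def best_classical_chsh() -> int:
    """Largest S reachable when each side outputs predetermined +/-1 answers."""
    return max(x0 * y0 - x0 * y1 + x1 * y0 + x1 * y1
               for x0, x1, y0, y1 in product((1, -1), repeat=4))


if __name__ == "__main__":
    s_quantum = chsh(0.0, math.pi / 2, math.pi / 4, 3 * math.pi / 4)
    print(f"|S| quantum = {abs(s_quantum):.4f}, classical bound = {best_classical_chsh()}")
```

The quantum value reaches 2√2 ≈ 2.83, while every deterministic local strategy tops out at 2. An observed value well above 2 is what lets DI-QKD bound an eavesdropper’s knowledge without modeling the devices; in practice, noise and loss pull the value toward 2 and shrink the key rate.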

Telecom researchers are already mapping out the next decade, with terahertz communication roadmaps that treat quantum-safe security as a foundational infrastructure upgrade.

Security isn’t just about blocking hackers; it’s about future-proofing our history. Quantum-safe protocols are designed so that data encrypted today remains unreadable to the machines of tomorrow, while quantum signaling methods use the laws of physics themselves to expose eavesdroppers through the errors their measurements introduce.
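That detection rests on a measurable statistic. A minimal sketch, assuming BB84 (a simpler prepare-and-measure protocol than DI-QKD), ideal devices, and an intercept-resend attacker; the function and parameter names are hypothetical:

```python
import random


def bb84_qber(n_photons: int, eavesdrop: bool, seed: int = 7) -> float:
    """Simulate ideal BB84 and return the quantum bit error rate on the sifted key.

    Bases: 0 = rectilinear, 1 = diagonal. Measuring in the preparation basis
    returns the encoded bit; measuring in the other basis returns a random bit."""
    rng = random.Random(seed)
    errors = sifted = 0
    for _ in range(n_photons):
        bit, alice_basis = rng.randint(0, 1), rng.randint(0, 1)
        photon_bit, photon_basis = bit, alice_basis  # state currently in the fiber
        if eavesdrop:
            # Intercept-resend: Eve measures in a random basis and re-sends her result.
            eve_basis = rng.randint(0, 1)
            if eve_basis != photon_basis:
                photon_bit = rng.randint(0, 1)
            photon_basis = eve_basis
        bob_basis = rng.randint(0, 1)
        bob_bit = photon_bit if bob_basis == photon_basis else rng.randint(0, 1)
        if bob_basis == alice_basis:  # sifting: keep rounds where bases matched
            sifted += 1
            errors += bob_bit != bit
    return errors / sifted


if __name__ == "__main__":
    print("QBER without Eve:", bb84_qber(20_000, eavesdrop=False))
    print("QBER with Eve:   ", round(bb84_qber(20_000, eavesdrop=True), 3))
```

Without the attacker the sifted key is error-free; with the attacker the error rate settles near 25%, because Eve picks the wrong basis half the time and each wrong guess gives Bob a coin-flip result. Detection is statistical: the two ends sacrifice and compare a random sample of the key, and real links also have a nonzero baseline error rate from ordinary noise.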

Layer 3: The Hybrid Control Plane

Quantum processors cannot operate in isolation. They require classical systems for calibration, readout, and error correction. NVIDIA’s NVQLink interconnect for quantum and GPU computing is the bridge that fuses quantum processors with the sheer power of GPU supercomputing. This ensures the heavy classical work of decoding errors and steering hardware can run fast enough to matter.
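The “heavy classical work of decoding errors” can be shown at toy scale. A minimal sketch, assuming a five-qubit bit-flip repetition code as a stand-in for the far larger codes real systems run (names are hypothetical), turns parity checks into a correction with a precomputed lookup table:

```python
from itertools import product

N = 5  # physical data qubits in this toy bit-flip repetition code


def syndrome(bits: tuple[int, ...]) -> tuple[int, ...]:
    """Neighbour parity checks: 1 wherever two adjacent qubits disagree.

    Real hardware measures these parities with ancilla qubits; computing them
    from the data directly is a simplification for illustration."""
    return tuple(bits[i] ^ bits[i + 1] for i in range(len(bits) - 1))


def build_lookup(n: int = N) -> dict[tuple[int, ...], tuple[int, ...]]:
    """Map every syndrome to the lowest-weight error pattern that produces it."""
    table: dict[tuple[int, ...], tuple[int, ...]] = {}
    for pattern in product((0, 1), repeat=n):
        s = syndrome(pattern)
        if s not in table or sum(pattern) < sum(table[s]):
            table[s] = pattern
    return table


LOOKUP = build_lookup()


def correct(data: list[int]) -> list[int]:
    """Flip the qubits the decoder blames, using only the syndrome."""
    fix = LOOKUP[syndrome(tuple(data))]
    return [d ^ f for d, f in zip(data, fix)]


if __name__ == "__main__":
    print(correct([0, 0, 1, 0, 0]))  # single flip on logical 0
    print(correct([1, 1, 0, 1, 0]))  # two flips on logical 1
```

Here `correct([1, 1, 0, 1, 0])` returns `[1, 1, 1, 1, 1]`: the decoder infers the two most likely flips from the syndrome alone. Lookup tables grow exponentially with code size, so production decoders use algorithms such as minimum-weight perfect matching, and they must finish every round within the hardware’s cycle time.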

This hybrid approach reflects a broader truth: Quantum will not replace GPUs. It will plug into them.

Layer 4: Infrastructure and Institutional Scaling

Governments and universities are building facilities and committing capital. In the U.S., Maryland’s Capital of Quantum Initiative frames quantum as a regional industry strategy rather than a distant academic bet.

This trend is reflected in Taiwan’s strategic quantum hardware bets, where workforce training and supply-chain resilience are treated as part of the same long game.

Investment at this level is the ultimate signal of intent. When governments commit capital on a regional scale, quantum ceases to be a laboratory experiment and becomes a pillar of national strategy.

The Core Breakthrough Layer: Fault Tolerance, Quantum Signaling, and GPU Control

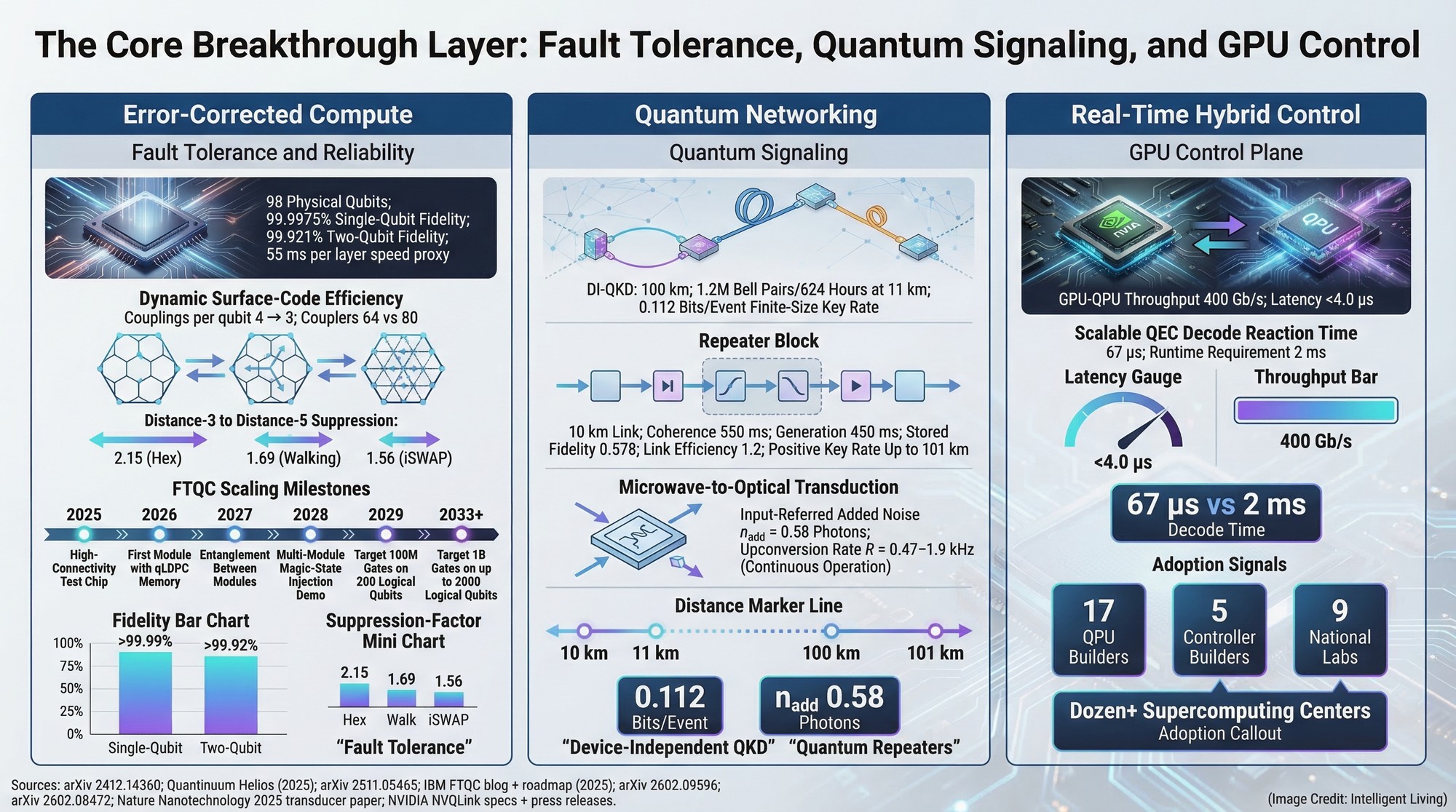

Reliability Wins: Fault-Tolerant Momentum

Helios and the Accuracy-First Shift

Quantinuum’s Helios system release emphasized improved accuracy and system integration rather than just qubit count, a subtle change that matters because reliability is the gatekeeper for any practical workload.

Dynamic Surface Codes and Smarter Error-Correction Circuits

Google’s work on dynamic surface codes isn’t just a physics tweak; it’s a structural overhaul of how we build error-correction circuits. By reducing the brute-force hardware complexity, engineering teams are finally moving past supremacy-era bragging rights and focusing on the quiet, practical reliability that businesses actually need.

Logical Qubits as a Practical Performance Metric

Microsoft has positioned reliability as a platform feature, including a report of creating 12 highly reliable logical qubits and running a hybrid chemistry workflow that ties error suppression to a real workload class.

Algorithmic Fault Tolerance and Runtime Overhead

QuEra’s team introduced algorithmic fault tolerance designed to cut runtime overhead, which matters because error correction is not only a physics problem. It is also a scheduling problem, a control problem, and a cost problem.

You can see the transition in the labs. It’s the moment a researcher stops cheering for a single clean run and starts trusting the hardware to work every single time—the day a science project finally feels like a piece of equipment.

Quantum Signaling Gets Real: The Communication Layer

Entanglement that Lasts Long Enough for Repeaters

A Nature study reported long-lived remote ion–ion entanglement over 10 km of fiber, a milestone that targets one of the hardest networking bottlenecks: keeping entanglement alive long enough to be useful for repeater-style architectures.
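Why “long-lived” matters shows up in simple timing arithmetic. In heralded schemes a memory must hold its state until a classical signal confirms that entanglement succeeded, so storage time has to beat the light’s travel time. A sketch, assuming a fiber group index of about 1.47 and a simple out-and-back signaling pattern (assumptions, not figures from the cited study):

```python
SPEED_OF_LIGHT_KM_PER_S = 299_792.458
FIBER_GROUP_INDEX = 1.47  # assumed typical value for standard single-mode fiber


def one_way_delay_us(distance_km: float, group_index: float = FIBER_GROUP_INDEX) -> float:
    """Time for light to cross the fiber, in microseconds."""
    return distance_km * group_index / SPEED_OF_LIGHT_KM_PER_S * 1e6


def herald_wait_us(distance_km: float, attempts: int = 1) -> float:
    """Minimum time a memory must hold its state: photon out plus classical herald
    back, repeated for each attempt (a simplified, assumed signaling pattern)."""
    return 2 * one_way_delay_us(distance_km) * attempts


if __name__ == "__main__":
    print(f"one way over 10 km: {one_way_delay_us(10):.1f} us")
    print(f"one heralded attempt: {herald_wait_us(10):.1f} us")
    print(f"100 attempts: {herald_wait_us(10, attempts=100) / 1000:.1f} ms")
```

Over 10 km that is roughly 49 µs each way, so a single attempt already needs about 98 µs of storage. Because fiber loss means many attempts before success, useful memories have to hold entanglement far longer than one round trip.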

Bridging Microwave QPUs to Optical Fiber

Hardware also has to translate between worlds. Caltech described low-noise transducers that bridge microwave and optical qubits, which matters because many leading quantum processors operate at microwave frequencies, while long-distance networks prefer optical fiber.

National Testbeds and Measured Loss Budgets

The UK’s long-distance demonstration helped make the idea less abstract, showing quantum-secure data transmission over a 410-kilometer fiber link. A national testbed layer also exists: a description of the UK Quantum Network Testbed explains how metro-scale networks can be stitched together with long-haul fiber to evaluate quantum-safe and conventional communication as an integrated system.
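A loss budget is simple arithmetic, and it explains why distance is so punishing. A sketch, assuming roughly 0.2 dB/km of fiber attenuation (typical for telecom fiber near 1550 nm) plus a hypothetical fixed 3 dB allowance for connectors and detectors; these are illustrative numbers, not the testbed’s measured values:

```python
def link_loss_db(distance_km: float, atten_db_per_km: float = 0.2, fixed_db: float = 3.0) -> float:
    """Total loss: fiber attenuation scaled by length plus a fixed allowance (assumed)."""
    return distance_km * atten_db_per_km + fixed_db


def transmittance(loss_db: float) -> float:
    """Fraction of photons that survive a given loss in decibels."""
    return 10 ** (-loss_db / 10)


def segment_transmittance(total_km: float, segments: int, **kwargs: float) -> float:
    """Transmittance of one hop when the route is split into equal segments."""
    return transmittance(link_loss_db(total_km / segments, **kwargs))


if __name__ == "__main__":
    total = transmittance(link_loss_db(410))
    hop = segment_transmittance(410, segments=5)
    print(f"single 410 km span: {total:.2e} of photons arrive")
    print(f"each of five 82 km hops: {hop:.4f} of photons arrive")
```

With these assumptions a single 410 km span delivers about 3 photons in a billion, while each of five 82 km hops delivers roughly 1 in 87. That exponential penalty is why long-haul quantum links are generally built by chaining shorter segments.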

What this Means for Everyday Privacy

Will this change your personal privacy by next week? No. But for the first time, the engineering roadmap is readable. We are no longer guessing at the distance; we are measuring loss budgets and connecting real-world endpoints in a way that feels like the early days of high-speed internet.

The Hybrid Era: GPUs Become the Control Plane

Co-locating QPUs with GPU Supercomputers

NVIDIA has framed quantum’s near-term acceleration as an HPC problem, building facilities like the NVIDIA Accelerated Quantum Research Center that physically co-locate quantum hardware with GPU supercomputing for decoding, simulation, and control.

Microsecond-Feedback Loops for Error Correction

On the control side, Quantum Machines described its NVQLink integration for microsecond-latency quantum-classical feedback, an important detail because error correction is only as useful as the speed of the classical loop that interprets measurements and applies fixes.
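The speed requirement has a precise consequence: if decoding one round of measurements takes longer than producing it, unprocessed data piles up without bound. A minimal sketch, assuming a fixed cycle time and a fixed per-round decode time for a single in-order decoder (hypothetical parameters; real decoders have variable latency and can work in parallel):

```python
def backlog_after(rounds: int, cycle_us: float, decode_us: float) -> int:
    """Rounds of syndrome data not yet fully decoded when the last round is produced.

    Assumes one round arrives at the end of every cycle and a single decoder
    handles rounds in order, taking a fixed decode_us per round."""
    decoder_free_at = 0.0
    finish_times = []
    for k in range(rounds):
        arrival = (k + 1) * cycle_us           # syndrome for round k is ready
        start = max(arrival, decoder_free_at)  # wait if the decoder is still busy
        decoder_free_at = start + decode_us
        finish_times.append(decoder_free_at)
    now = rounds * cycle_us
    return sum(1 for t in finish_times if t > now)


if __name__ == "__main__":
    for decode_us in (0.8, 1.2):
        for rounds in (1_000, 10_000):
            print(decode_us, rounds, backlog_after(rounds, cycle_us=1.0, decode_us=decode_us))
```

With a 1 µs cycle, a decoder needing 0.8 µs per round only ever has the newest round in flight, while one needing 1.2 µs falls further behind every cycle, so its backlog grows with the length of the computation. That is why microsecond-scale feedback is a requirement rather than a nicety.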

Wiring, Heat, and the Physical Ceiling on Scale

The sheer physical scale of this challenge is visible in high-density superconducting chip designs that require upwards of 40,000 wires to manage control lines and heat load.

A familiar pattern appears across computing history: Performance jumps when a new accelerator arrives, and reliability jumps when the tooling around it becomes routine.

Scaling Quantum Infrastructure: Strategic Partnerships and Photonic Systems

Proof it’s Real: Partnerships Turning Quantum into Infrastructure

Denmark’s push to physically host next-generation systems is backed by institutions rather than hype cycles. The Novo Nordisk Foundation and EIFO described QuNorth and Magne as an €80 million quantum computer procurement and buildout plan tied to a logical-qubit milestone target.

Partnerships are also becoming commercialization pipelines. IonQ and Cambridge announced an Innovation Center focused on quantum technology commercialization, while Florida Atlantic University outlined a plan to host an on-site D-Wave Advantage2 system as a regional education and applied research asset.

Mini Proof: Quantum Signaling Milestones

The engineering validation of these systems is best seen in the recent surge of technical preprints and conference records. These documents provide the specific implementation notes and loss budgets that show the work has moved past theoretical models.

- A technical preprint of the single-atom result details device-independent quantum key distribution over 100 km with implementation notes and rates.

- A repeater-focused preprint frames memory-to-memory entanglement over 10 km as the prerequisite for scaling beyond two nodes.

- A field-program perspective highlights microwave-to-optical conversion with extremely low loss as the bridge between QPU modalities and fiber.

- A conference record describes a nationwide heterogeneous quantum network connecting metro networks into long-haul trials.

These technical achievements reinforce that institutional investment is tracking real engineering progress: distances, loss budgets, and endpoints are now measured rather than projected.

Photon Highways: Interconnects and Modularity

The future of modularity depends on the “photon highways” currently being built. These optical interconnects solve the critical problem of moving quantum data between physical modules:

- Low-Loss Modular Interconnects: Recent fiber engineering collaborations are tackling the practical hurdle of moving information between processors with minimal signal loss.

- Datacenter Scaling Pressure: The same physics reshaping photonic datacenter networking is proving that power and bandwidth limits will inevitably force a shift from electricity to light.

- Efficiency at the Source: Scale relies on on-chip single-photon generation, where molecular chips are now producing the consistent single photons that reliable computation requires.

- Energy-Efficient Compute: New photonic chips designed for AI acceleration illustrate how light-based hardware can cut the heavy energy cost of large data transfers.

What this Enables Without the Hype

The practical impact of these breakthroughs is already visible in the way we handle data and secure our infrastructure. Instead of waiting for a single “breakthrough day,” the following shifts are hardening the digital world right now:

- Post-Quantum Standard Migration: The ML-KEM Key-Encapsulation Standard (FIPS 203) has formalized how we protect data from future quantum machines, turning abstract threat modeling into active deployment.

- Hardening Everyday Browsing: Hybrid TLS handshakes are quietly securing web sessions by combining classical and post-quantum layers in the background of your browser.

- Practical Testing and Inventory: Engineering workflows are shifting toward post-quantum testing and integration comparisons that prioritize finding compatibility breaks before the migration begins.

- Industrial Scientific Computing: From materials discovery to drug simulation, open-source AI infrastructure for manufacturing is proving that shared datasets and digital twins can turn research into repeatable, industrial engineering.
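The hybrid principle behind those TLS handshakes can be sketched with Python’s standard library. A minimal illustration, assuming random placeholder bytes in place of real X25519 and ML-KEM shared secrets (no actual key exchange happens here) and an HKDF-style derivation built from HMAC-SHA256: the session key stays secret as long as at least one input secret does. Real hybrid TLS key exchanges fix their own concatenation order and feed the result into the TLS 1.3 key schedule; the labels below are hypothetical.

```python
import hashlib
import hmac
import os


def hkdf_extract(salt: bytes, ikm: bytes) -> bytes:
    """HKDF-Extract from RFC 5869 with SHA-256: PRK = HMAC(salt, IKM)."""
    return hmac.new(salt, ikm, hashlib.sha256).digest()


def hkdf_expand(prk: bytes, info: bytes, length: int = 32) -> bytes:
    """HKDF-Expand from RFC 5869 with SHA-256."""
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


def combine_hybrid(classical_ss: bytes, pq_ss: bytes, context: bytes) -> bytes:
    """Derive one session key from both shared secrets.

    Because both secrets feed the extractor, an attacker must recover both
    to predict the output. The concatenation order and labels are illustrative."""
    prk = hkdf_extract(salt=bytes(32), ikm=pq_ss + classical_ss)
    return hkdf_expand(prk, info=b"hybrid-demo " + context)


if __name__ == "__main__":
    # Placeholders: random bytes stand in for real X25519 and ML-KEM shared secrets.
    classical = os.urandom(32)
    post_quantum = os.urandom(32)
    print(combine_hybrid(classical, post_quantum, b"session-1").hex())
```

The design choice is deliberate redundancy: if a future quantum computer breaks the classical exchange, the post-quantum secret still protects the key, and if a flaw turns up in the newer post-quantum scheme, the classical secret still does.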

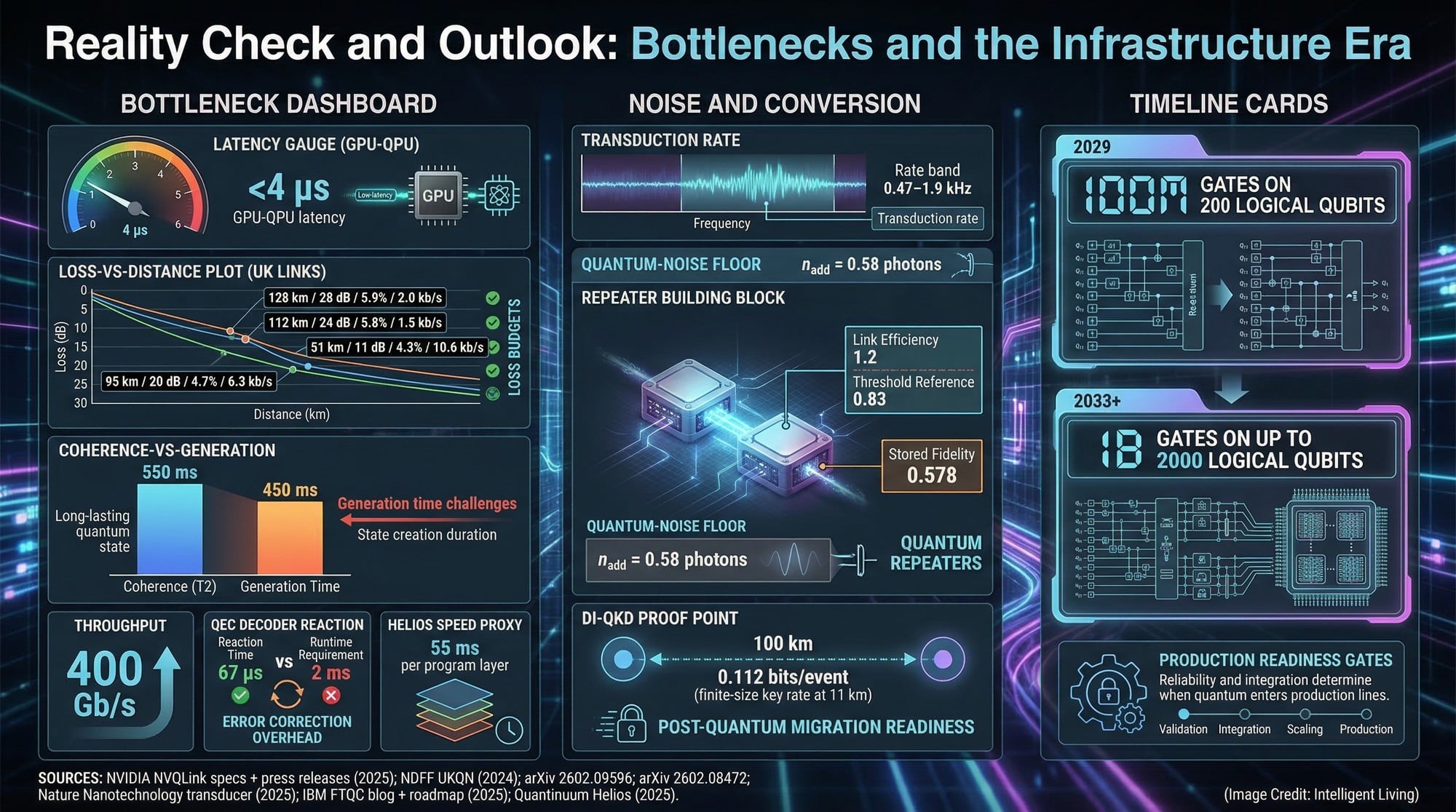

Reality Check and Outlook: Bottlenecks and the Infrastructure Era

Reality Check: Bottlenecks Still Decide the Timeline

The overhead for error correction is still massive, and networking rates need to jump by orders of magnitude. We are staring at years of complex fabrication and scaling challenges—this is a long industrial grind, not a sudden scientific flash.
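How “massive” that overhead is can be estimated with a common rule of thumb for the surface code: the logical error rate per round scales roughly as A · (p / p_th)^((d + 1) / 2), and the rotated layout uses 2d² − 1 physical qubits per logical qubit at code distance d. A sketch, assuming A = 0.1 and a threshold p_th = 1% (illustrative constants, not any vendor’s figures):

```python
def surface_code_logical_rate(p: float, d: int, p_th: float = 0.01, prefactor: float = 0.1) -> float:
    """Rule-of-thumb logical error rate per round: A * (p / p_th) ** ((d + 1) / 2)."""
    return prefactor * (p / p_th) ** ((d + 1) / 2)


def distance_for_target(p: float, target: float, p_th: float = 0.01,
                        prefactor: float = 0.1, max_d: int = 101) -> int:
    """Smallest odd code distance whose estimated logical error rate meets the target."""
    if p >= p_th:
        raise ValueError("physical error rate must sit below threshold for suppression")
    for d in range(3, max_d + 1, 2):
        if surface_code_logical_rate(p, d, p_th, prefactor) <= target:
            return d
    raise ValueError("target not reachable within max_d")


def physical_qubits_per_logical(d: int) -> int:
    """Rotated surface code: d*d data qubits plus d*d - 1 measurement qubits."""
    return 2 * d * d - 1


if __name__ == "__main__":
    for p in (0.001, 0.005):
        d = distance_for_target(p, target=2e-12)
        print(f"p = {p}: distance {d}, {physical_qubits_per_logical(d)} physical qubits per logical qubit")
```

With physical error rate p = 0.1% and a target of 2 × 10⁻¹², the sketch picks d = 21, or 881 physical qubits per logical qubit. At p = 0.5% the same target needs d = 71 and 10,081 physical qubits, before counting the extra hardware for magic-state factories and routing. Small gains in physical fidelity translate into large savings in scale.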

Immediate value is arriving through advanced quantum sensing applications. These tools turn fragile quantum states into hyper-precise measurements today, while the path to general-purpose compute continues its steady arc.

One everyday analogy fits: The early internet did not become useful because a single router got faster. It became useful because protocols stabilized, infrastructure spread, and reliability improved enough to support ordinary life.

The Quantum Future: Bridging Theory and Global Infrastructure

What we are witnessing is the fundamental reimagining of the computer itself. We are moving away from the isolated quantum box and toward a world where quantum effects are woven directly into the global compute stack. This convergence—where networking, error correction, and GPU control all meet—is what finally moves the needle for industries like pharmacology and materials science. It’s about repeatability. It’s about the quiet confidence that comes when the hardware finally behaves like an industrial tool rather than a fragile prototype.

Quantum’s ultimate victory won’t be a front-page headline; it will be a quiet, invisible integration into our daily lives. Think of the moment a logistics provider finds a better route or a lab identifies a life-saving molecule without ever realizing a quantum engine powered the search. That is when we will know the infrastructure has truly taken hold. That infrastructure is being laid right now, mile by fiber-optic mile, and the breakthrough is not a future event but a current reality, weaving itself into the world’s compute fabric.

Essential Guide to 2026 Quantum Infrastructure and Logical Qubits

What Defines a Logical Qubit in Modern Systems?

A logical qubit is essentially a “shielded” unit of information. Instead of relying on a single physical qubit that might fail, we group many of them together using error-correction codes to create one stable, reliable unit that can actually finish a computation.

Why is GPU Integration Necessary for Quantum Computing?

Quantum processors are powerful but incredibly noisy. They lean on the massive parallel processing power of GPUs to handle the “dirty work” of decoding errors and managing control loops in real time. Without that classical partner, the quantum hardware would just be shouting into the void.

How Does Quantum Signaling Improve Networking?

It changes the game for security. Quantum signaling allows us to share entanglement across distances, creating communication links that are protected by the laws of physics. If someone tries to eavesdrop, their measurements disturb the quantum states and raise the error rate, which the two ends detect when they compare a sample of their data.

What is the Role of Post-Quantum Cryptography?

Think of it as future-proofing. Large quantum computers could eventually crack current encryption methods. Organizations are already transitioning using finalized post-quantum encryption standards, which rely on mathematical puzzles designed to resist quantum attacks.

Why Is Infrastructure Convergence Happening Now?

Because the “science project” phase has hit a ceiling. To go further, we had to stop treating quantum as a separate field and start treating it as a part of high-performance computing. The hardware, software, and networking are finally maturing at the same time.