Artificial intelligence is often described as the engine of the next industrial leap. But in materials science, the real breakthrough is not a single algorithm or record-setting model. It is the rise of open-source AI infrastructure that connects data, experiments, simulations, and manufacturing into one coherent system. A recent open-access review in Communications Materials outlines how an end-to-end AI-powered infrastructure can move materials from conceptual design to commercialization while remaining transparent, scalable, and environmentally responsible.

For decades, materials discovery has been limited not by imagination, but by coordination. Chemists, physicists, engineers, and manufacturers often work in isolated silos. Data sits in incompatible formats. Promising simulation results cannot be reproduced. Laboratory breakthroughs struggle to translate into scalable production. Open-source AI infrastructure aims to fix these gaps by creating a shared, interoperable foundation that allows models, datasets, workflows, and manufacturing systems to communicate with one another.

The next acceleration in batteries, semiconductors, structural alloys, and sustainable building materials may depend less on a single breakthrough compound and more on the operating system that connects discovery to deployment. Solid-state battery milestones from 2025 to 2026 illustrate the point: infrastructure choices help determine which chemistries actually reach commercial vehicles.

The Evolution of Open-Source AI Infrastructure in Materials Science

- The 2026 Communications Materials review defines an end-to-end AI-driven infrastructure spanning conceptualization through commercialization, with explicit focus on sustainability and transparency.

- Cloud-scale screening examples cited in the review use up to 1,000 virtual machines to evaluate more than 32 million candidate materials, while wafer-scale AI systems for scientific inference push high-performance computation directly to the data source.

- Applying the FAIR data principles, first formalized in Scientific Data, helps ensure that research outputs and their associated tools remain Findable, Accessible, Interoperable, and Reusable.

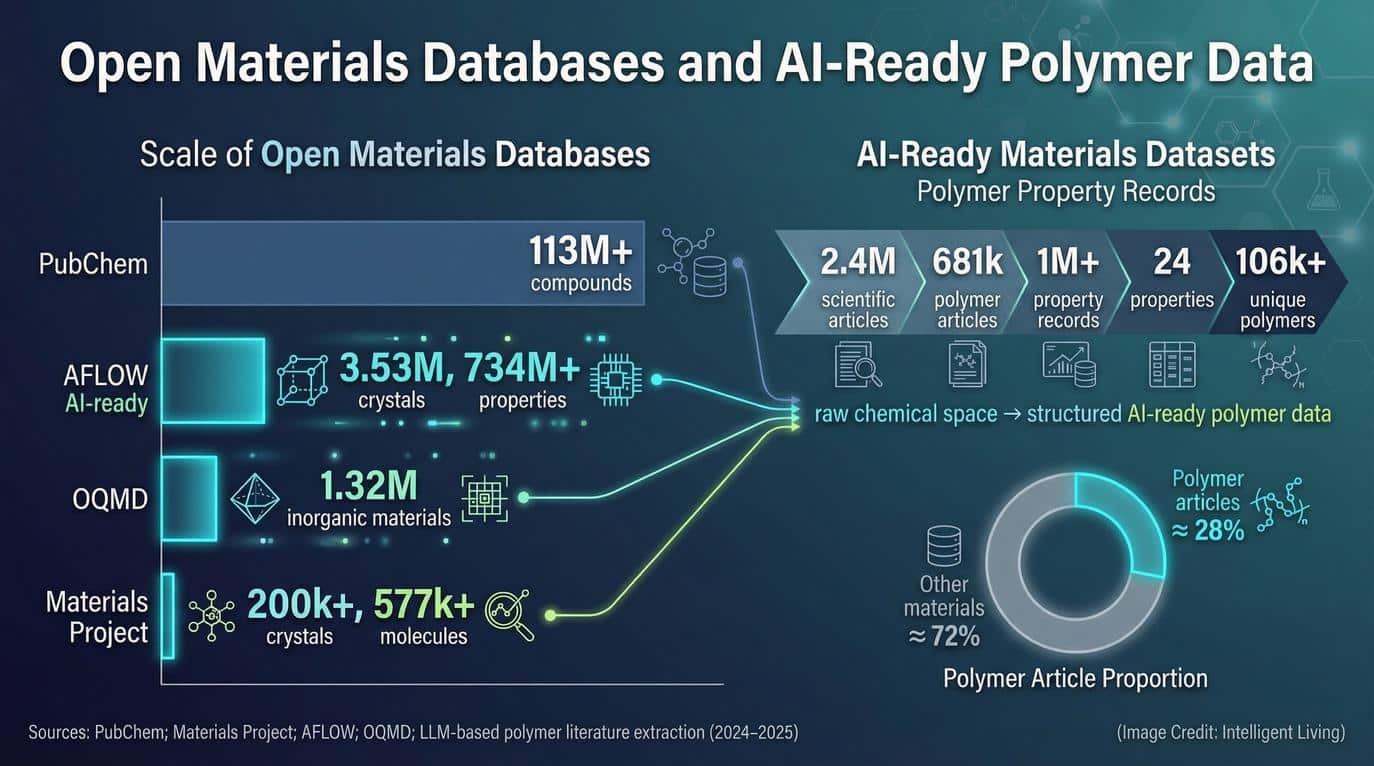

- Matbench, a benchmark suite for materials machine learning, includes 13 tasks with datasets ranging from hundreds to over 100,000 samples to enable standardized comparison of models.

- Self-driving laboratory systems have demonstrated autonomous materials optimization on closed-loop experimental platforms, replacing much of the traditional manual trial-and-error cycle. Similar automation is now transforming analytical quality control in semiconductor fabs through ICP-MS-based process control.

The Bottleneck Isn’t Ideas, It’s Infrastructure

Opportunities for new compounds are abundant, yet discovery frequently stalls at the critical transition from simulation to industrial scale. The Communications Materials review argues that the real limitation is infrastructure. Without unified systems for data acquisition, model training, workflow tracking, and deployment, each research group effectively rebuilds the same pipeline from scratch.

Machine learning and generative AI are reshaping materials science, but their impact depends on a high-quality data ecosystem. Widespread fragmentation slows innovation and makes research results significantly harder to verify independently.

Practical Verification and Reproduction Challenges

In practical terms, infrastructure determines whether a materials discovery can be reproduced, stress-tested, and eventually manufactured. If experimental data is not stored in standardized formats, it cannot be reused by machine learning systems. If workflows are not tracked with full provenance, other teams cannot confirm the steps that led to a reported breakthrough. If manufacturing simulations are disconnected from discovery models, scaling a promising material becomes guesswork.

Infrastructure transforms scattered innovation into cumulative progress by providing the necessary structural substrate for collaboration.

What “Open-Source AI Infrastructure” Really Means in Materials Discovery

Open-source AI infrastructure is best defined by functional connectivity rather than abstract software concepts. In materials science, it operates as a layered system that links several critical pillars:

- Data Repositories: Secure storage environments for raw and processed experimental results.

- Application Programming Interfaces (APIs): The protocols that allow different software modules to communicate.

- Machine Learning Models: Predictive algorithms trained on curated materials datasets.

- Workflow Engines: Systems that automate and track the sequence of computational or physical tasks.

- Feedback Loops: Real-time mechanisms that integrate experimental or manufacturing data back into the discovery cycle.

Interoperability and Cloud-Scale Computational Screening

A multi-layered architecture lets the materials innovation pipeline operate as one interoperable system rather than a collection of isolated tools. The 2026 review describes this as an end-to-end stack that integrates data acquisition, high-fidelity computation, self-driving laboratories, secure sharing mechanisms, and cloud–edge computing architectures. On the hardware side, photonic chips for data-center networking and compute illustrate how physical infrastructure is also being redesigned to support this stack.

Screening capacity shows how modern cloud infrastructure can evaluate design spaces far too large to explore manually. In one architectural example highlighted in the review, up to 1,000 Microsoft Azure virtual machines were used to screen more than 32 million candidate materials.
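To make that scale concrete: 32 million candidates spread over 1,000 machines is roughly 32,000 candidates per machine. The sketch below shows one simple way such a workload could be partitioned into near-equal, contiguous shards. It is a hypothetical illustration, not the scheduling method used in the review or by Azure, and the function name `shard_ranges` is our own.

```python
def shard_ranges(n_candidates: int, n_workers: int) -> list[tuple[int, int]]:
    """Split candidate indices [0, n_candidates) into n_workers contiguous,
    near-equal half-open ranges (start, stop). Hypothetical helper."""
    if n_workers <= 0:
        raise ValueError("n_workers must be positive")
    base, extra = divmod(n_candidates, n_workers)
    ranges = []
    start = 0
    for worker in range(n_workers):
        # The first `extra` workers each take one additional candidate,
        # so shard sizes never differ by more than one.
        size = base + (1 if worker < extra else 0)
        ranges.append((start, start + size))
        start += size
    return ranges


if __name__ == "__main__":
    shards = shard_ranges(32_000_000, 1_000)
    print(len(shards), shards[0], shards[-1])
```

Each virtual machine would then screen only the candidates in its own range, which is why this kind of workload parallelizes so well: shards are independent and need no communication until results are collected.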

Open-source in this context means that core software frameworks, data schemas, and workflow tools are publicly accessible. This lowers barriers for universities, startups, and researchers in emerging economies to participate. It also enables peer inspection of methods, which is essential for scientific credibility.

Sustainability and lifecycle awareness form a core pillar of the 2026 review’s infrastructure design principles. Infrastructure decisions affect energy consumption and carbon footprint, particularly when training large models or running high-performance simulations. The review discusses cloud–edge strategies, which distribute computation between centralized data centers and local systems, as a way to reduce latency and potentially improve efficiency. Edge AI deployments in smart-city infrastructure likewise show that architectural choices increasingly shape environmental outcomes.

The Trust Stack: FAIR Data, Provenance, and Benchmarks

Acceleration without trust can create confusion instead of progress. In materials discovery, trust is built through three pillars: FAIR data standards, workflow provenance, and standardized benchmarks.

Established in Scientific Data, the FAIR principles provide a rigorous framework for managing research outputs. They apply not only to raw datasets but also to the software tools and workflows that generate them:

- Findable: Data must be easily located by both humans and computer systems.

- Accessible: Protocols must be in place for users to retrieve the data securely.

- Interoperable: Information should be formatted to integrate with other datasets and tools.

- Reusable: Documentation and provenance must be sufficient for future research cycles.
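As a rough illustration of what "machine-readable" can mean in practice, the sketch below models a minimal dataset record and checks it against a simplified reading of the four principles. The FAIR principles do not prescribe any particular schema; the fields (`identifier`, `access_url`, `file_format`, `license`, `provenance`) and the set of "open" formats are hypothetical choices made for this example and are far simpler than real metadata standards.

```python
from dataclasses import dataclass, field

# Hypothetical set of open, documented formats treated as "interoperable" here.
OPEN_FORMATS = {"cif", "json", "hdf5", "csv"}


@dataclass
class DatasetRecord:
    identifier: str      # e.g. a DOI or other persistent identifier
    title: str
    access_url: str      # where the data can be retrieved
    file_format: str
    license: str = ""
    provenance: list[str] = field(default_factory=list)  # processing steps


def fair_gaps(record: DatasetRecord) -> list[str]:
    """Return gaps under a simplified FAIR reading (an empty list means none found)."""
    gaps = []
    if not record.identifier or not record.title:
        gaps.append("Findable: missing persistent identifier or title")
    if not record.access_url.startswith("https://"):
        gaps.append("Accessible: no retrieval URL over a standard protocol")
    if record.file_format.lower() not in OPEN_FORMATS:
        gaps.append("Interoperable: data not in an open, documented format")
    if not record.license or not record.provenance:
        gaps.append("Reusable: missing license or provenance")
    return gaps


if __name__ == "__main__":
    record = DatasetRecord(
        identifier="doi:10.0000/example",            # placeholder identifier
        title="Band gaps of hypothetical oxides",
        access_url="https://example.org/data/oxides",
        file_format="json",
        license="CC-BY-4.0",
        provenance=["structure relaxation", "band-structure calculation"],
    )
    print(fair_gaps(record))
```

The point of encoding the checks is that a machine, not just a human curator, can decide whether a record is reusable before an AI pipeline ingests it.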

Provenance Tracking and Collaborative Workflow Platforms

Rigorous data standardization enables AI systems to autonomously combine results from diverse studies, effectively accelerating the overall research timeline. Workflow provenance adds another layer.

Platforms such as AiiDA are designed to automatically record the full history of computational processes, creating a traceable graph of inputs, parameters, and outputs. AiiDA has been described in peer-reviewed literature as capable of managing tens of thousands of processes per hour while preserving detailed provenance records. This means that when a model predicts a new compound, other researchers can inspect exactly how that prediction was generated.
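The idea of a provenance graph can be sketched in a few lines. The example below is not AiiDA's API; it is a hypothetical, minimal stand-in in which every calculation is stored as a node linked to its inputs and parameters, and every output is linked back to the calculation that produced it. Walking those links recovers the full lineage of a result. All names and numbers are illustrative placeholders.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """A data or calculation node in a minimal, hypothetical provenance graph."""
    label: str
    kind: str                                    # "data" or "calculation"
    parents: list["Node"] = field(default_factory=list)
    attributes: dict = field(default_factory=dict)


def run_calculation(label: str, inputs: list[Node], parameters: dict, result: dict) -> Node:
    """Record a calculation linked to its inputs, and an output linked to the calculation."""
    calc = Node(label, "calculation", parents=list(inputs), attributes=dict(parameters))
    return Node(f"{label}.output", "data", parents=[calc], attributes=dict(result))


def lineage(node: Node) -> list[str]:
    """Labels of every ancestor of `node`, depth-first, each listed once."""
    seen: list[str] = []
    stack = list(node.parents)
    while stack:
        current = stack.pop()
        if current.label not in seen:
            seen.append(current.label)
            stack.extend(current.parents)
    return seen


if __name__ == "__main__":
    structure = Node("structure", "data", attributes={"formula": "Si"})
    # Placeholder parameters and results, not real calculations.
    relaxed = run_calculation("relax", [structure], {"cutoff_eV": 500}, {"energy_eV": -1.0})
    bands = run_calculation("band_structure", [relaxed], {"kpoints": 40}, {"band_gap_eV": 0.5})
    print(lineage(bands))
```

Because the graph is recorded automatically as calculations run, a reviewer can start from any reported number and trace exactly which inputs and settings produced it.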

Materials Cloud extends this philosophy by enabling researchers to share not just data files but also complete workflows and provenance graphs. Moving from sharing static results to sharing active processes significantly increases scientific reproducibility across the materials community.

Benchmarks complete the trust stack. Matbench provides 13 standardized tasks covering datasets that range from a few hundred to over 100,000 samples. Because every model is evaluated on the same tasks, researchers can compare performance transparently. This helps guard against inflated claims and shows the community where models truly excel or struggle.
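The core mechanism behind a fair benchmark is simple: every model is scored on identical, fixed data splits with the same metric. The sketch below illustrates that idea with seeded k-fold splits and mean absolute error on synthetic toy data. It does not use the `matbench` package or its actual tasks, folds, or seeds; the helper names and the two toy models are hypothetical.

```python
import random
from statistics import mean
from typing import Callable

# A "model" here is any function (train_x, train_y, test_x) -> predictions.
Model = Callable[[list[float], list[float], list[float]], list[float]]


def fixed_folds(n: int, k: int, seed: int = 0) -> list[list[int]]:
    """Shuffle indices once with a fixed seed and split them into k folds,
    so every model sees exactly the same train/test partitions."""
    indices = list(range(n))
    random.Random(seed).shuffle(indices)
    return [indices[i::k] for i in range(k)]


def benchmark(model: Model, x: list[float], y: list[float], k: int = 5) -> float:
    """Mean absolute error averaged over the fixed folds."""
    fold_maes = []
    for test_idx in fixed_folds(len(x), k):
        test_set = set(test_idx)
        train_idx = [i for i in range(len(x)) if i not in test_set]
        preds = model([x[i] for i in train_idx], [y[i] for i in train_idx],
                      [x[i] for i in test_idx])
        fold_maes.append(mean(abs(p - y[i]) for p, i in zip(preds, test_idx)))
    return mean(fold_maes)


def mean_baseline(train_x, train_y, test_x):
    """Predict the training-set mean for every test sample."""
    avg = mean(train_y)
    return [avg] * len(test_x)


def linear_fit(train_x, train_y, test_x):
    """Ordinary least-squares line y = a*x + b."""
    mx, my = mean(train_x), mean(train_y)
    a = (sum((xi - mx) * (yi - my) for xi, yi in zip(train_x, train_y))
         / sum((xi - mx) ** 2 for xi in train_x))
    b = my - a * mx
    return [a * xi + b for xi in test_x]


if __name__ == "__main__":
    # Synthetic toy data (not from Matbench): y = 2x + 1 exactly.
    xs = [float(i) for i in range(50)]
    ys = [2 * xi + 1 for xi in xs]
    print(f"mean baseline MAE: {benchmark(mean_baseline, xs, ys):.3f}")
    print(f"linear fit MAE:    {benchmark(linear_fit, xs, ys):.3f}")
```

Including a trivial baseline such as `mean_baseline` is a useful habit: a model that cannot clearly beat it on the shared splits has not demonstrated much.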

Combining FAIR standards with provenance tracking and rigorous benchmarking converts AI materials discovery into a measurable, auditable field.

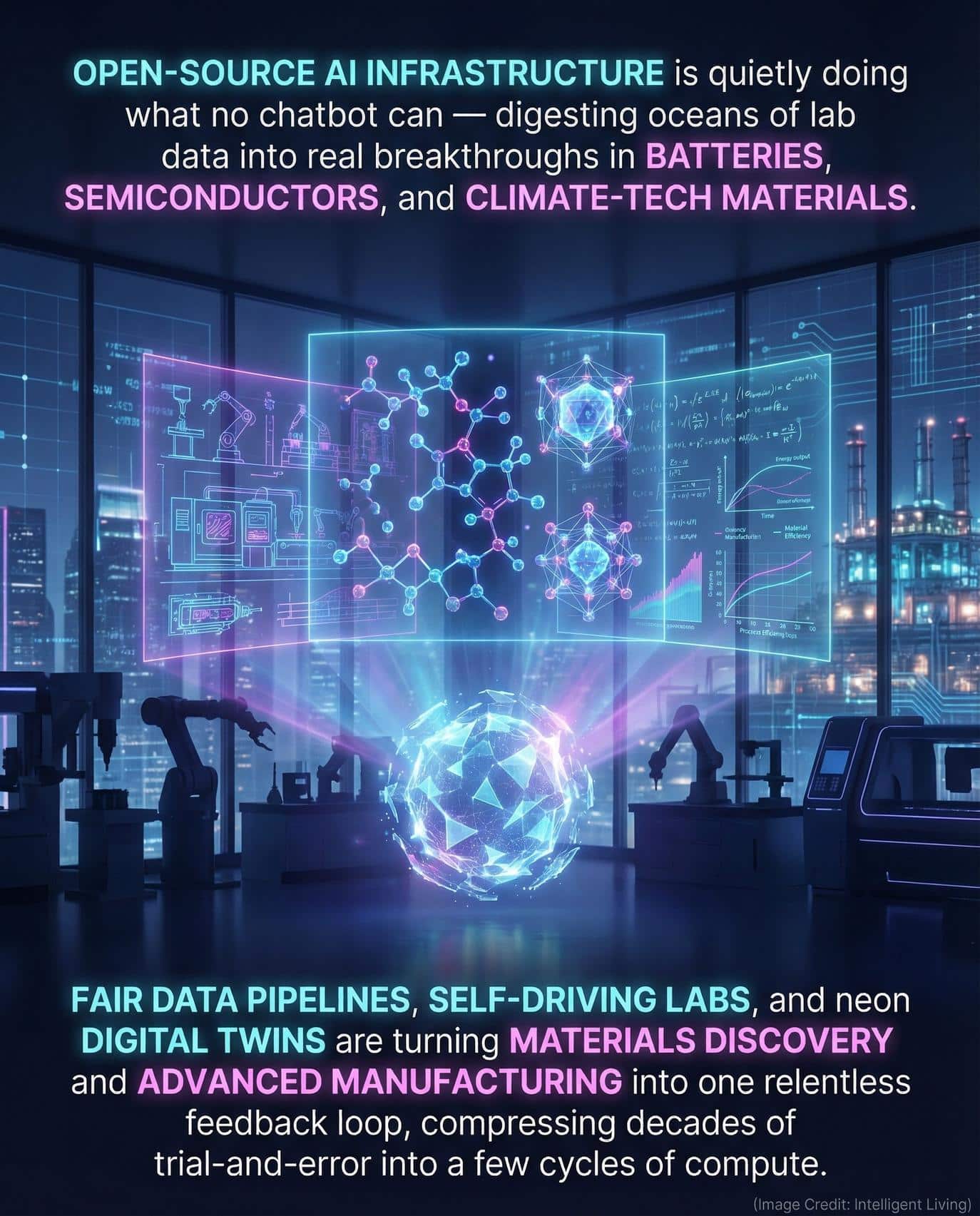

The Open Data Substrate: Materials Project, NOMAD, and APIs that Make AI Usable

At the base of the stack sits data. Without large, high-quality datasets, machine learning systems cannot identify meaningful patterns.

The Materials Project provides open access to computed information on thousands of compounds via cloud infrastructure such as the AWS Open Data Registry. Through the Materials API, developers can query specific materials properties programmatically and integrate them directly into their own tools and workflows.

Programmatic Data Access and Collaborative Ecosystems

Programmatic access is critical because APIs let machine learning models retrieve structured data automatically, without manual downloads. Decisions about sustainable building materials, for example, increasingly depend on evaluating how performance properties influence specific climate outcomes, and open data access makes those trade-offs easier to evaluate at scale.

NOMAD provides a complementary approach by focusing on FAIR data management and providing specialized open-source tooling for the research community. Its AI toolkit enables interactive analysis of materials data directly in a web-based environment, combining archived datasets with user-provided inputs. This reduces friction between data storage and AI experimentation.

Interconnecting open databases, APIs, and AI toolkits reduces the dependence on individual laboratories and shifts the focus toward shared, resilient infrastructure. That shift transforms materials science from a series of isolated breakthroughs into a continuously improving, collaborative system.

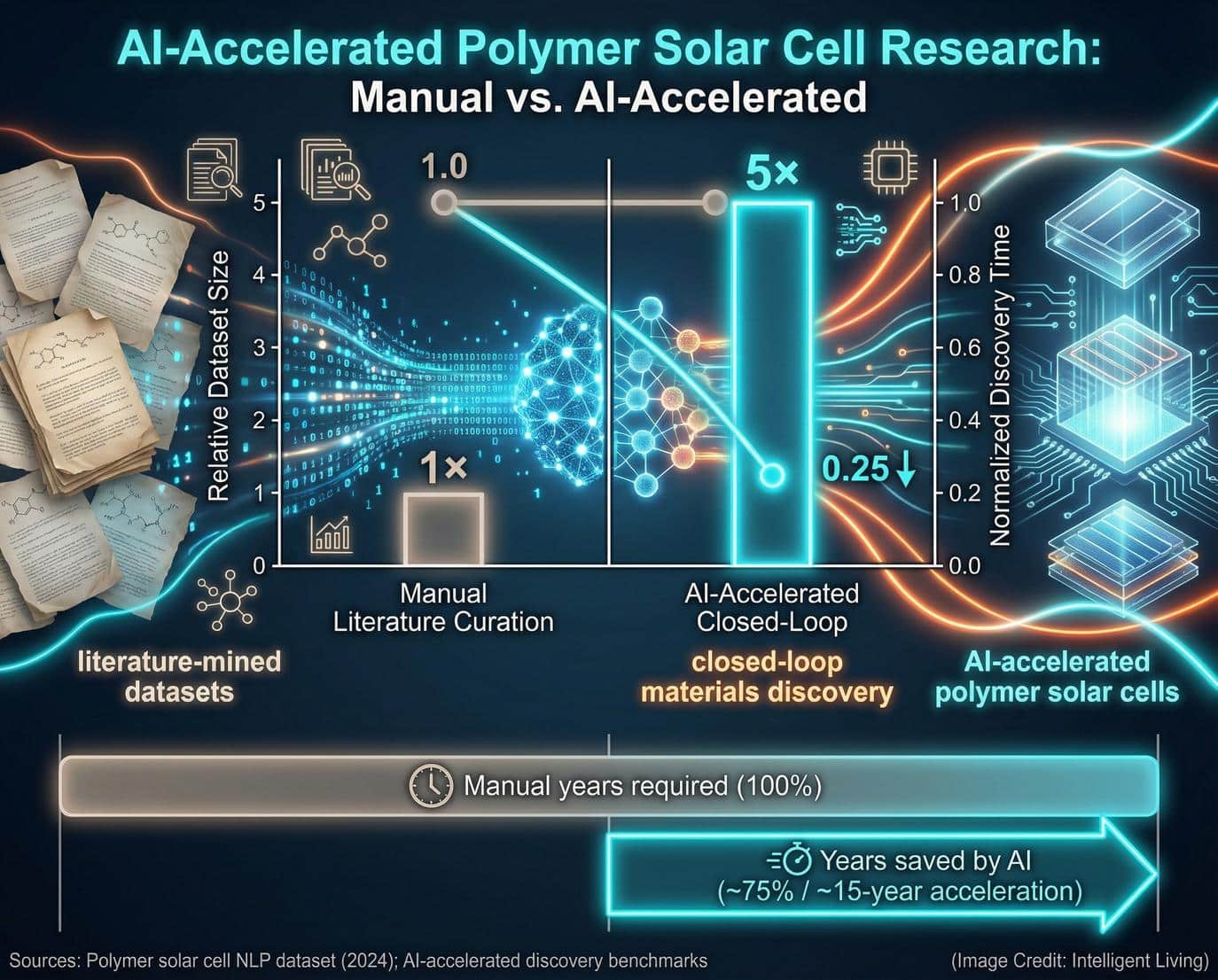

Self-Driving Labs: Turning Discovery Into a 24/7 Feedback Loop

Open data and interoperable software lay the groundwork, but true acceleration emerges when discovery becomes continuous. Self-driving laboratories combine robotics, machine learning, and automated analysis to create closed-loop experimentation systems that operate with minimal human intervention.

Autonomous Optimization and Distributed Frameworks

Implementing a 24/7 feedback loop fundamentally transforms the scalability of materials research. Algorithms within the system determine the next most informative experiment autonomously, replacing the traditional need for researchers to manually adjust parameters and wait days for physical feedback. This approach was highlighted in a widely cited demonstration in Science Advances, which detailed a modular robotic platform capable of optimizing thin-film materials iteratively.

More recently, a distributed, cloud-based design-make-test-analyze framework reported the discovery of 21 new gain materials by coordinating asynchronous experimentation across multiple laboratories. This approach demonstrates that infrastructure can extend beyond a single physical lab. When connected through shared computational systems, geographically separated facilities can function as one integrated discovery engine.

This compounding loop compresses development cycles by automating the research flow:

- Data Integration: Results from individual experiments feed immediately into predictive models.

- Model Refinement: Algorithms analyze new data to refine and focus future predictions.

- Autonomous Launching: New experiments initiate without the need for manual interpretation or delays.
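The three steps above can be sketched as a toy closed loop. Everything here is hypothetical: `run_experiment` stands in for a robotic synthesis-and-measurement step with a hidden response, and the surrogate is a deliberately crude nearest-neighbor model with a distance-based uncertainty, used in place of the Gaussian-process or Bayesian-optimization models that real self-driving labs typically employ. The selection rule adds an exploration bonus to the predicted value, in the spirit of upper-confidence-bound acquisition.

```python
import math
from typing import Optional


def run_experiment(temperature_c: float) -> float:
    """Hypothetical stand-in for a robotic measurement. The hidden response
    peaks at 350 °C; the optimizer never sees this formula."""
    return math.exp(-((temperature_c - 350.0) / 60.0) ** 2)


def predict(x: float, observations: list[tuple[float, float]]) -> tuple[float, float]:
    """Crude surrogate: value of the nearest measured condition, with uncertainty
    equal to the distance from it. It is recomputed from all observations on
    every call, so each new result immediately refines later predictions."""
    nearest_x, nearest_y = min(observations, key=lambda obs: abs(obs[0] - x))
    return nearest_y, abs(nearest_x - x)


def next_experiment(candidates: list[float], observations: list[tuple[float, float]],
                    exploration: float = 0.002) -> Optional[float]:
    """Choose the untested condition with the highest optimistic score."""
    tested = {x for x, _ in observations}
    untested = [c for c in candidates if c not in tested]
    if not untested:
        return None

    def score(x: float) -> float:
        value, uncertainty = predict(x, observations)
        return value + exploration * uncertainty

    return max(untested, key=score)


def closed_loop(budget: int = 12) -> tuple[float, float]:
    """Run the propose-measure-integrate loop; return the best (condition, response)."""
    candidates = [float(t) for t in range(100, 601, 25)]            # 100 to 600 °C
    observations = [(t, run_experiment(t)) for t in (100.0, 600.0)]  # seed runs
    for _ in range(budget):
        x = next_experiment(candidates, observations)   # model proposes next run
        if x is None:
            break
        observations.append((x, run_experiment(x)))     # result integrated at once
    return max(observations, key=lambda obs: obs[1])


if __name__ == "__main__":
    print(closed_loop())
```

No human interprets intermediate results: each measurement is appended to `observations` and directly shapes the next proposal, which is what lets such loops run continuously.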

Over time, these loops turn materials science into a continuously improving engine. The same closed-loop principles are now scaling manufacturing output through digital-twin-driven strategies that raise productivity without proportional headcount growth.

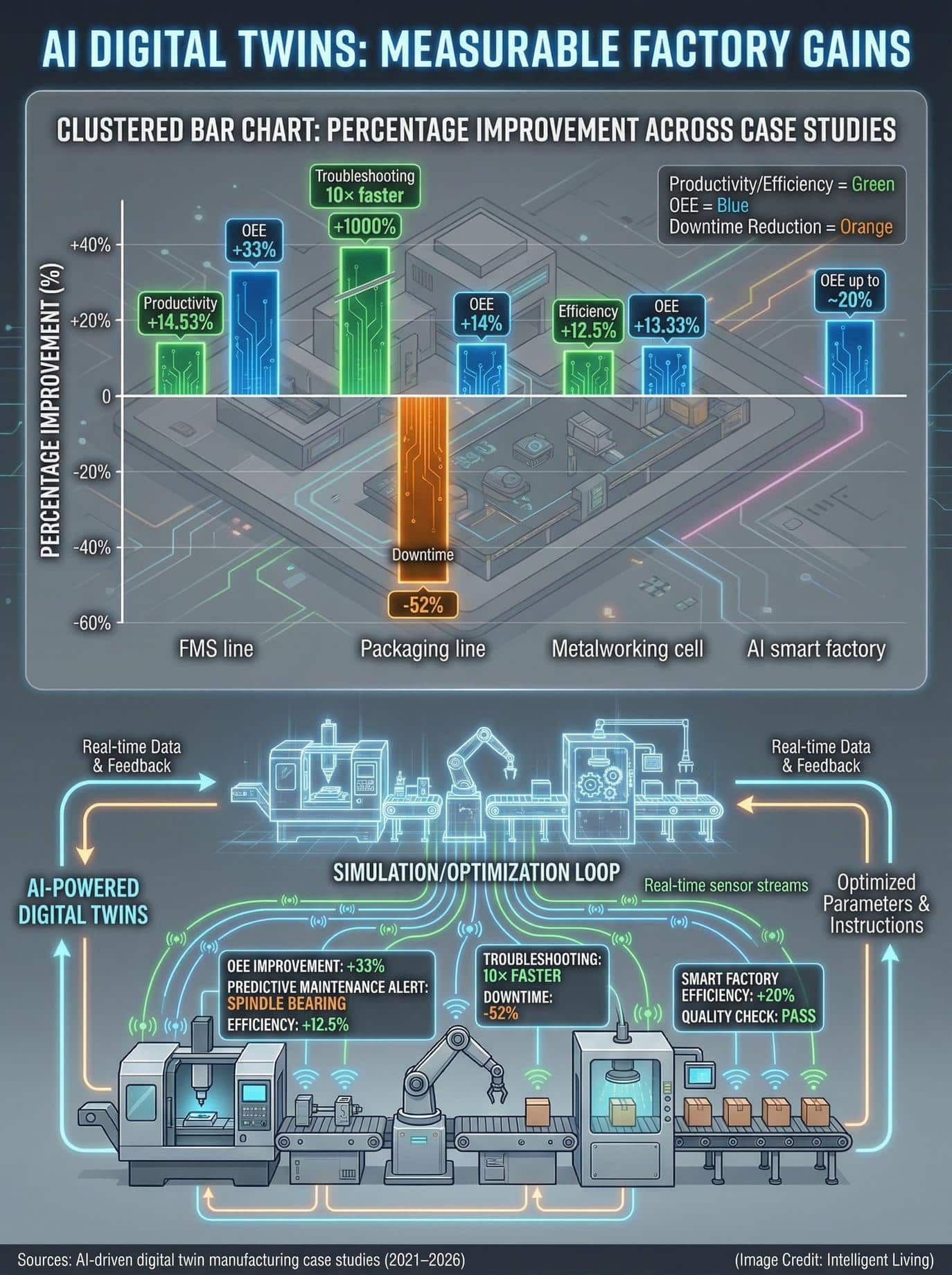

From Lab to Factory: Digital Twins Translate Candidates Into Manufacturable Process Windows

Identifying a promising compound marks only the first stage of discovery; the subsequent challenge involves ensuring the material can be manufactured consistently and safely at scale. This is where digital twins enter the infrastructure stack.

A digital twin is a high-fidelity virtual representation of a physical system. In manufacturing, digital twins integrate sensor data, simulation models, and control systems to predict how materials and processes behave in real time. A 2025 review on AI-driven digital twins describes how these systems enable predictive analytics, real-time monitoring, and autonomous decision-making within industrial environments.

Predictive Analytics for Industrial Feasibility

For materials discovery, this means that once a candidate compound shows promise in simulation or lab testing, engineers can model how it will respond to temperature gradients, pressure changes, mechanical stress, or contamination risks during production. Instead of discovering manufacturability problems late in the process, digital twins expose constraints early.
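Exposing constraints early often comes down to mapping a process window: the range of conditions in which a predicted quality metric stays within specification. The sketch below scans a grid of temperatures and pressures through a hypothetical surrogate for a process simulation. The quadratic defect model, its set point (450 °C, 2.0 MPa), its coefficients, and the 2% defect limit are illustrative assumptions, not values from any real material or digital twin.

```python
from itertools import product


def predicted_defect_rate(temperature_c: float, pressure_mpa: float) -> float:
    """Hypothetical surrogate for a process simulation: the defect fraction grows
    quadratically as conditions drift from an assumed set point (450 °C, 2.0 MPa).
    All coefficients are illustrative, not measured."""
    return 0.01 + 2e-5 * (temperature_c - 450.0) ** 2 + 0.05 * (pressure_mpa - 2.0) ** 2


def process_window(temperatures, pressures, max_defect_rate: float = 0.02):
    """Return every (temperature, pressure) pair predicted to meet the defect spec."""
    return [
        (t, p)
        for t, p in product(temperatures, pressures)
        if predicted_defect_rate(t, p) <= max_defect_rate
    ]


if __name__ == "__main__":
    window = process_window(range(400, 501, 10), [1.5, 1.75, 2.0, 2.25, 2.5])
    # Summarize the feasible temperature span at each pressure that has one.
    for pressure in sorted({p for _, p in window}):
        temps = [t for t, p in window if p == pressure]
        print(f"{pressure:.2f} MPa: {min(temps)}-{max(temps)} °C")
```

With these made-up numbers the window narrows as pressure drifts from the set point and vanishes entirely at 1.5 and 2.5 MPa. That kind of result, surfaced in simulation, is exactly the manufacturability constraint a digital twin is meant to reveal before a production line is committed.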

Digital manufacturing platforms are becoming core infrastructure for modern industry. When digital twins are connected to AI discovery systems, the pipeline from theoretical candidate to factory-ready process becomes shorter and more predictable. AI-driven factory digital twins allow entire production lines to be optimized virtually before hardware installation begins.

Connecting discovery models to production simulations reduces waste, improves quality control, and strengthens the economic feasibility of new compounds. A material that performs well in theory but fails under industrial constraints is not a breakthrough; this linkage helps ground performance claims in manufacturing reality.

Sustainability and Compute: The Hidden Cost of Acceleration and the Mitigation Playbook

Large-scale screening depends on high-bandwidth-memory data center architectures that consume significant electricity, so computational acceleration carries unavoidable environmental costs.

The Communications Materials review explicitly treats sustainability as part of infrastructure design, highlighting the need to consider lifecycle assessment, techno-economic analysis, and the environmental footprint of AI computation. Infrastructure decisions shape not only speed but also carbon intensity. Packaging approaches such as CoWoS chiplet-based AI accelerators show how hardware-level choices influence both energy draw and critical mineral use.

Mitigation Strategies for Energy-Intensive AI Simulations

Distributing computation between centralized data centers and localized edge systems allows workloads to be optimized for latency, energy efficiency, and resource utilization. This cloud–edge architecture is one key mitigation strategy, because architectural choices increasingly determine the total environmental impact of AI-driven research.

Prioritizing reproducibility and benchmarking is a second mitigation strategy. When results can be trusted and compared, teams avoid repeating experiments and simulations that have already been done, which cuts wasted computation. In this sense, the trust stack and sustainability strategy reinforce one another.
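One concrete way reproducibility saves compute is to key results by a hash of their exact inputs, so an identical simulation is never run twice. The sketch below is a hypothetical in-memory illustration; the class and function names are our own, and real workflow engines that offer caching implement it with their own, more careful rules (for example, also hashing the code version that produced a result, which this sketch does not do).

```python
import hashlib
import json
from typing import Any, Callable


class SimulationCache:
    """Reuse results for simulations whose inputs are identical (hypothetical helper)."""

    def __init__(self) -> None:
        self._results: dict[str, Any] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def key(inputs: dict) -> str:
        # Canonical JSON with sorted keys, so dicts with the same content but a
        # different key order produce the same hash.
        canonical = json.dumps(inputs, sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def run(self, simulate: Callable[[dict], Any], inputs: dict) -> Any:
        """Return a cached result if these inputs were seen before, else compute it.
        Note: the key covers inputs only, not which `simulate` function is used."""
        k = self.key(inputs)
        if k in self._results:
            self.hits += 1
        else:
            self.misses += 1
            self._results[k] = simulate(inputs)
        return self._results[k]


if __name__ == "__main__":
    calls = []

    def fake_simulation(inputs: dict) -> dict:
        calls.append(inputs)
        return {"energy": -1.0}  # placeholder result

    cache = SimulationCache()
    cache.run(fake_simulation, {"formula": "Si", "cutoff": 500})
    cache.run(fake_simulation, {"cutoff": 500, "formula": "Si"})  # same inputs, reordered
    print(cache.hits, cache.misses, len(calls))
```

The same recorded inputs that make a result reproducible are what make it safely reusable, which is why provenance and sustainability goals point in the same direction.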

Acceleration is valuable only if it aligns with long-term environmental responsibility. Open infrastructure makes that alignment measurable rather than aspirational.

Traceability and Industry 5.0 as the Deployment Compass

Transparency becomes essential as materials move from laboratory discovery into commercial supply chains. Traceability systems document where inputs originate, how they are processed, and how final products are assembled.

Research from the National Institute of Standards and Technology examines how distributed ledger technologies support supply chain traceability by exchanging structured records among stakeholders. While blockchain is not a universal solution, the broader principle is clear. Infrastructure must support verifiable records if advanced materials are to meet regulatory, safety, and sustainability standards.
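The principle of verifiable records can be illustrated with a hash chain, the basic data structure underlying many distributed ledgers: each record stores the hash of the one before it, so editing any earlier entry invalidates everything after it. The sketch below is a hypothetical, single-party simplification with no distribution or consensus, and it is not the approach described in the NIST research; the event fields and batch labels are illustrative.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def record_hash(body: dict, previous_hash: str) -> str:
    """Hash a record's content together with the previous record's hash."""
    payload = json.dumps({"body": body, "prev": previous_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_record(chain: list[dict], body: dict) -> None:
    """Append a supply-chain event linked to the hash of the previous record."""
    previous = chain[-1]["hash"] if chain else GENESIS
    chain.append({"body": body, "prev": previous, "hash": record_hash(body, previous)})


def verify(chain: list[dict]) -> bool:
    """Recompute every hash; any edited or reordered record breaks the chain."""
    previous = GENESIS
    for entry in chain:
        if entry["prev"] != previous or entry["hash"] != record_hash(entry["body"], previous):
            return False
        previous = entry["hash"]
    return True


if __name__ == "__main__":
    chain: list[dict] = []
    append_record(chain, {"step": "mined", "material": "lithium carbonate", "site": "site-A"})
    append_record(chain, {"step": "refined", "batch": "B-17"})
    append_record(chain, {"step": "cathode synthesis", "batch": "B-17"})
    print(verify(chain))
    chain[1]["body"]["batch"] = "B-99"  # simulate tampering with a past record
    print(verify(chain))
```

In a real deployment the chain would be replicated across stakeholders, so no single party could quietly rewrite history and recompute every hash.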

Aligning Innovation with the Industry 5.0 Resiliency Framework

Resilient industrial models align with the European Commission’s Industry 5.0 framework, which shifts the focus from pure efficiency toward societal value, environmental responsibility, and long-term resilience. Circular battery supply chains already apply this logic, using digital product passports and recycling ecosystems to keep critical materials in circulation. Geospatial monitoring and verification systems, meanwhile, tie satellite observations directly to supply chain performance.

Open-source AI infrastructure supports the Industry 5.0 vision directly by embedding transparency, auditability, and collaboration into every stage of the discovery pipeline. Such structural accountability ensures that material innovation is not only accelerated but also remains verifiable throughout its lifecycle.

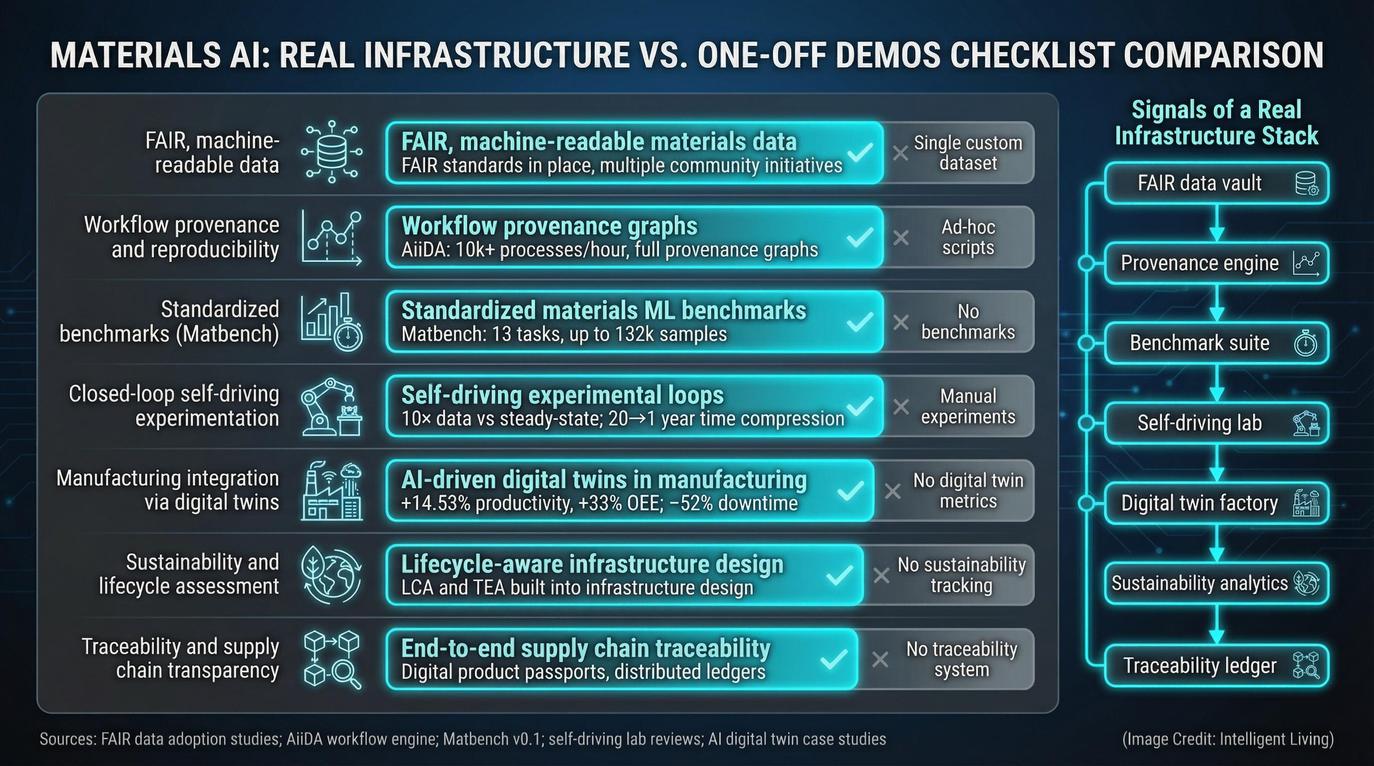

A Practical Checklist: How to Tell Real Infrastructure from a One-Off Demo

For readers evaluating claims about AI-powered materials discovery, the following questions can help distinguish genuine infrastructure from isolated demonstrations:

- Is the underlying data FAIR and machine-readable, following established standards?

- Are workflows tracked with full provenance, allowing independent reproduction of results?

- Have models been tested against standardized benchmarks such as Matbench?

- Is there a closed-loop experimental component that connects predictions to real-world validation?

- Does the system integrate with manufacturing simulations, digital twins, or advanced metrology solutions in smart factories?

- Are sustainability and lifecycle impacts considered as part of the design?

- Is traceability addressed where supply chain complexity demands it?

If the answer to most of these questions is yes, the claim likely reflects infrastructure-level progress. If not, it may represent a promising but isolated proof of concept.

Scaling Materials Science Innovation with Open-Source AI Infrastructure

Transitioning to an open-source AI infrastructure represents a fundamental paradigm shift for the global manufacturing community. By integrating FAIR data standards with digital twin technology and autonomous feedback loops, the field is evolving from a collection of fragmented breakthroughs into a truly coordinated innovation ecosystem. This structural evolution ensures that every experiment contributes to a cumulative knowledge base, allowing progress to compound across the entire materials science innovation pipeline.

In the landscape of advanced manufacturing, speed is only a partial victory; true success is measured by how effectively new materials can be trusted, scaled, and sustained. Open-source infrastructure provides the necessary accountability layer, linking theoretical performance directly to manufacturing reality. As we move toward the human-centric goals of Industry 5.0, these collaborative frameworks ensure that the next generation of materials is not only developed faster but is also more resilient and environmentally responsible.

Common Inquiries Regarding AI-Powered Materials Discovery

What distinguishes open-source AI infrastructure from traditional research tools?

Unlike isolated software, this infrastructure creates a layered, interoperable stack that connects data repositories, APIs, machine learning models, and manufacturing feedback loops into a single, cohesive system.

Why are FAIR data principles essential for materials science innovation?

FAIR standards ensure that research outputs are machine-readable and standardized, allowing AI systems to find, access, and reuse data across different studies and institutions without manual reformatting.

How do self-driving laboratories accelerate the materials innovation pipeline?

Self-driving labs use automated robotics and closed-loop machine learning to optimize materials around the clock. They analyze experimental results in real time and launch the next most informative test with minimal human intervention.

What role do digital twins play in the transition from lab to factory?

Digital twins provide high-fidelity virtual representations of production environments, allowing engineers to simulate how new materials will respond to industrial stresses before committing to expensive, large-scale manufacturing.

How does this infrastructure manage the environmental impact of AI computation?

Cloud–edge architectures and standardized benchmarks help reduce redundant processing and optimize workloads for energy efficiency, ensuring that acceleration aligns with long-term sustainability goals.