The volume of visual content required for a modern multi-channel campaign has shifted from a manageable stream to a relentless flood. A single product launch no longer requires just a handful of high-res hero shots; it demands dozens of iterations for social media stories, programmatic display banners, landing page backgrounds, and video thumbnails. Each of these must maintain a cohesive visual identity while adhering to the specific technical constraints of its platform.

In the past, this was a manual bottleneck. Designers would spend hours resizing, retouching, and re-exporting. Generative AI has promised to solve this, but the “prompt-and-hope” method often leads to stylistic drift, where the ad looks like it belongs to a different brand than the landing page it leads to. Solving this requires moving beyond one-off generation and into a structured batch production workflow that builds on the consistency achievable with advanced AI image engines like Nano Banana Pro.

The Geometry of Scaling Visual Assets

Scaling is not merely a matter of quantity; it is a matter of geometric consistency. When a creative team moves from a 16:9 cinematic shot to a 9:16 mobile-first format, the composition often breaks. Simple cropping usually results in losing the focal point of the image.
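One way to see why naive cropping fails is to compare it with a crop that is anchored on the focal point. The following is a minimal sketch, not a feature of any particular tool: the function name `crop_box` and the idea of passing the focal point as pixel coordinates are assumptions made for illustration.

```python
def crop_box(src_w, src_h, ratio_w, ratio_h, focal_x, focal_y):
    """Return (left, top, right, bottom) for the largest crop of the
    requested aspect ratio, centred on the focal point where possible
    and clamped so it never leaves the source image."""
    target = ratio_w / ratio_h
    if src_w / src_h > target:
        # Source is wider than the target: keep full height, trim width.
        crop_h = src_h
        crop_w = round(src_h * target)
    else:
        # Source is taller than the target: keep full width, trim height.
        crop_w = src_w
        crop_h = round(src_w / target)
    left = min(max(focal_x - crop_w // 2, 0), src_w - crop_w)
    top = min(max(focal_y - crop_h // 2, 0), src_h - crop_h)
    return left, top, left + crop_w, top + crop_h


# A 16:9 frame (1920x1080) recut to 9:16 with the subject right of centre.
print(crop_box(1920, 1080, 9, 16, focal_x=1500, focal_y=540))
```

Even with the focal point preserved, roughly two thirds of the original width is discarded in this example, which is why recomposition is usually preferable to cropping.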

The challenge lies in creating “extensible” assets. Instead of generating a single static image, operators are now generating central thematic elements that can be outpainted or recomposed for different aspect ratios. This is where the underlying model architecture becomes critical. High-variance models might produce beautiful art but fail at consistency when asked to reproduce the same character or lighting setup across twenty different seeds.

Operational efficiency in this context is measured by the “reject rate.” If a team generates 100 assets but only five are usable without heavy manual intervention, the workflow is broken. The objective is to utilize a model that respects structural prompts and maintains a consistent color palette across varied resolutions.
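The reject rate described above is simple arithmetic, sketched here under the assumption that a reviewer has already counted how many assets are usable without heavy manual intervention. The function name is illustrative only.

```python
def reject_rate(generated: int, usable: int) -> float:
    """Fraction of generated assets that were rejected or needed heavy rework."""
    if generated <= 0:
        raise ValueError("generated must be positive")
    if not 0 <= usable <= generated:
        raise ValueError("usable must be between 0 and generated")
    return (generated - usable) / generated


# The example from the text: 100 generated, 5 usable.
rate = reject_rate(generated=100, usable=5)
print(f"Reject rate: {rate:.0%}")  # Reject rate: 95%
```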

Working With High-Fidelity Image Engines

The selection of a generation engine dictates the ceiling of the final output. Engines like Nano Banana Pro, available within the Kimg AI ecosystem, are engineered for projects requiring high-fidelity detail and strong prompt adherence, where a quick, low-resolution result would not be sufficient.

In a batch production environment, the process usually begins with a core aesthetic prompt. This prompt establishes the lighting, the texture, and the overall mood of the brand. By using a high-fidelity engine like Nano Banana Pro, a creator can lock in these parameters. The model’s ability to handle complex textures and lighting environments makes it suitable for professional-grade assets that don’t immediately appear AI-generated.

However, it is important to note a limitation: no AI model, including this one, can perfectly interpret every nuance of a brand’s physical product from a text prompt alone. When marketing a specific, highly detailed physical object, operators will likely still need to use image-to-image workflows or specific reference layers to ensure the product itself is represented accurately. The AI excels at the environment, the atmosphere, and the secondary elements that fill the frame.

Refining and Post-Processing AI-Generated Assets

Beyond the initial generation, advanced AI tools like Nano Banana Pro extend into the refinement phase. In a batch workflow, the first generation is rarely the final asset. Teams often need to remove backgrounds, upscale for large-format display, or use inpainting to fix minor artifacts.

A typical pipeline looks like this:

- Base Generation: Use highly descriptive prompts to create a library of raw assets.

- Selection and Upscaling: Move the most successful generations into an AI-powered upscaling tool. This is vital for landing pages where low-resolution artifacts are highly visible to users on retina displays.

- Consistency Checks: Verify that the AI output maintains the same contrast levels across the entire batch.
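The consistency check in the last step can be approximated numerically. The sketch below assumes each asset has already been reduced to a list of luminance values between 0 and 1 (how that is done depends on the imaging library in use), measures RMS contrast as the standard deviation of those values, and flags assets that stray from the batch median. The tolerance of 0.05 is an arbitrary placeholder, not a recommended setting.

```python
from statistics import median, pstdev


def rms_contrast(luma):
    """RMS contrast: population standard deviation of luminance values."""
    return pstdev(luma)


def flag_contrast_outliers(batch, tolerance=0.05):
    """Return names of assets whose contrast differs from the batch
    median by more than `tolerance`."""
    contrasts = {name: rms_contrast(pixels) for name, pixels in batch.items()}
    target = median(contrasts.values())
    return sorted(
        name for name, value in contrasts.items()
        if abs(value - target) > tolerance
    )


# Tiny made-up luminance samples standing in for real images.
batch = {
    "hero_16x9": [0.1, 0.9, 0.1, 0.9],
    "story_9x16": [0.12, 0.88, 0.12, 0.88],
    "banner_21x9": [0.45, 0.55, 0.45, 0.55],
}
print(flag_contrast_outliers(batch))  # ['banner_21x9']
```

A check like this does not replace visual review; it only narrows down which assets a person should look at first.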

The utility of these tools is found in their speed. Being able to toggle between different models — from a standard generation to a more cinematic render — allows a creative team to test multiple creative directions in the time it used to take to set up a single photo shoot.

Strategies for Consistency Across Channels

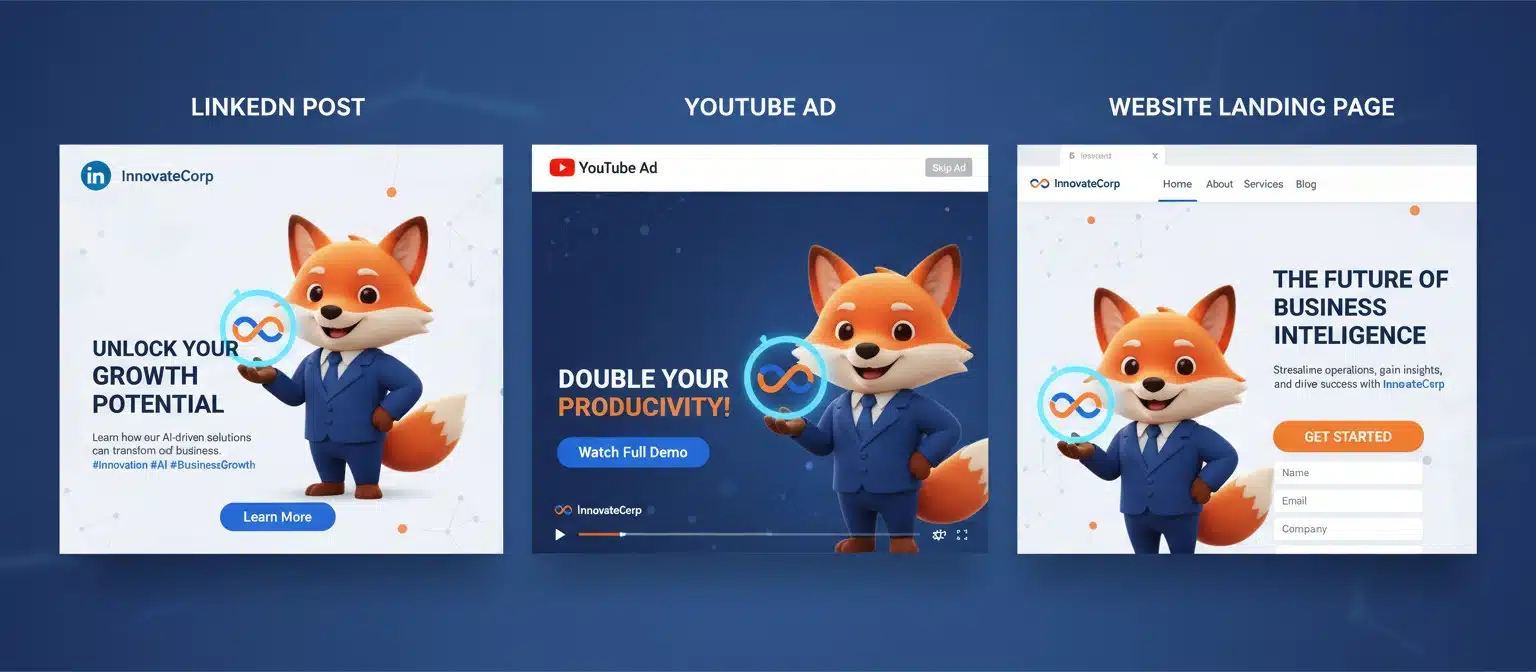

To keep a LinkedIn post, a YouTube ad, and a landing page looking like they are part of the same ecosystem, operators should focus on “Style Seeding.” This involves taking a successful generation and using it as a reference for subsequent generations.

The Role of Negative Prompting

In batch production, avoiding undesirable elements is just as important as including desired ones. Negative prompts are essential for removing common AI hallucinations like distorted text, unnatural limbs, or busy backgrounds that distract from the call to action. By standardizing a set of negative prompts across all campaign assets, teams ensure a uniform level of “cleanliness” in the visual output.
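Standardizing negative prompts is easiest when they live in one place and every request is built from them. The sketch below is hypothetical: `GenerationRequest` and `build_request` are illustrative names, not part of any platform's API, and the negative terms are taken from the examples in the paragraph above.

```python
from dataclasses import dataclass
from typing import Iterable

# Shared across every asset in the campaign (terms from the text above).
CAMPAIGN_NEGATIVES = ("distorted text", "unnatural limbs", "busy background")


@dataclass(frozen=True)
class GenerationRequest:
    """Hypothetical container for one generation job."""
    prompt: str
    negative_prompt: str


def build_request(prompt: str, extra_negatives: Iterable[str] = ()) -> GenerationRequest:
    """Combine the campaign-wide negatives with any per-asset additions,
    keeping the original order and dropping duplicates."""
    merged = list(dict.fromkeys((*CAMPAIGN_NEGATIVES, *extra_negatives)))
    return GenerationRequest(prompt=prompt, negative_prompt=", ".join(merged))


request = build_request("product on a marble counter, soft morning light",
                        extra_negatives=["watermark", "busy background"])
print(request.negative_prompt)
```

Keeping the shared list in a single constant means a change to the campaign's negative prompts propagates to every asset in the next batch.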

Managing Aspect Ratios

When generating for ads, the aspect ratio dictates the composition. A 1:1 square image for Instagram requires a central focal point, whereas a 21:9 banner for a website header needs “breathing room” on the sides for text overlays. One advantage of modern AI image platforms is the ability to select these ratios before generation, allowing the model to compose the scene with the final frame in mind rather than forcing a crop later.
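When a platform accepts explicit pixel dimensions rather than named ratios, the conversion can be scripted. This is a rough sketch under two assumptions that may not hold for a given engine: that every output should use roughly the same pixel budget as a 1024×1024 image, and that dimensions must be multiples of 64.

```python
import math


def dimensions_for_ratio(ratio_w, ratio_h, pixel_budget=1024 * 1024, multiple=64):
    """Pick a width and height close to `pixel_budget` total pixels for
    the given aspect ratio, snapped to the nearest `multiple`."""
    scale = math.sqrt(pixel_budget / (ratio_w * ratio_h))
    width = max(multiple, round(ratio_w * scale / multiple) * multiple)
    height = max(multiple, round(ratio_h * scale / multiple) * multiple)
    return width, height


for name, (w, h) in {"square": (1, 1), "story": (9, 16), "header": (21, 9)}.items():
    print(name, dimensions_for_ratio(w, h))
```

Snapping to a multiple means the result only approximates the requested ratio, so very wide formats such as 21:9 may still need a small trim after generation.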

Limitations and the Reality of Automated Assets

It is a common misconception that AI can entirely replace the human eye in creative operations. There is a point at which unmonitored automated batching starts to look “uncanny” or repetitive.

For instance, AI often struggles with exact typography within the image. If an ad requires specific brand fonts, it is almost always better to generate the background and central subjects using the AI, and then layer the text manually in a post-production tool. Expecting the AI to render a specific brand logo perfectly is currently an exercise in frustration. The goal is to use the tool for the 80% of the heavy lifting—lighting, background, and mood—and leave the final 20% of brand-specific detail to human editors.

Additionally, while advanced AI engines are highly capable, extremely complex scenes with multiple interacting subjects can still lead to “concept bleeding,” where colors or features from one subject leak into another. Keeping prompts focused and singular is usually the best path toward a high success rate in batching.

Integrating Motion: From Static to Video

A campaign is rarely just static images anymore. The transition from a static asset to a video ad is where many teams lose time. By using the static assets generated in the previous steps and feeding them into the Banana AI video generation tools, teams can maintain a visual thread from a static banner to a moving video ad.

This “Image-to-Video” workflow is significantly more efficient than trying to prompt a video from scratch. When the AI has a high-quality, high-resolution source image to work from, the resulting motion is more predictable and the lighting remains consistent with the rest of the campaign. This is particularly useful for social media “stop-motion” style ads or subtle cinemagraphs for landing page backgrounds.

Technical Implementation: Upscaling and Resource Management

From an operational standpoint, resource management is a reality of using these tools at scale. Batching 500 images for a large-scale A/B test requires an understanding of the “cost per successful asset.” Many platforms offer free tiers suitable for testing prompts and small-scale work, but for high-volume production, paid plans typically provide access to advanced AI upscaling and faster rendering times.
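The “cost per successful asset” can be worked out directly once the usable count is known. The figures below are invented for illustration; actual per-image pricing and acceptance rates vary by platform and campaign.

```python
def cost_per_successful_asset(total_cost: float, usable: int) -> float:
    """Total spend divided by the number of assets that passed review."""
    if usable <= 0:
        raise ValueError("at least one usable asset is required")
    return total_cost / usable


# Hypothetical batch: 500 images at an assumed 0.04 per image, 200 usable.
generated = 500
price_per_image = 0.04  # assumed figure, not a real price
usable = 200

total_cost = generated * price_per_image
print(f"Nominal cost per image: {price_per_image:.2f}")
print(f"Cost per successful asset: {cost_per_successful_asset(total_cost, usable):.2f}")
```

The gap between the nominal price and the cost per successful asset grows as the reject rate rises, which is why lowering rejections often matters more than finding a cheaper plan.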

The 1024×1024 resolution is often the baseline for social media, but for hero sections on a website, high-resolution upscaling is essential. Modern AI upscalers don’t just stretch pixels; they synthesize plausible new detail, which is crucial when taking a 1024×1024 generation and making it look crisp on a 27-inch monitor.

Conclusion: The Operator’s Mindset

The shift toward AI-assisted batch production isn’t about replacing creativity; it’s about removing the mechanical friction that prevents creative teams from iterating. Using AI image generation tools allows for a level of experimentation that was previously cost-prohibitive.

Success in this new landscape depends on the operator’s ability to treat the AI as a high-speed production assistant. By defining the style, setting the constraints through negative prompts, and utilizing high-fidelity engines for the heavy lifting, teams can produce consistent, high-impact assets at a scale that matches the speed of modern digital marketing. The focus should always remain on the final user experience—ensuring that no matter where the customer sees the brand, the visual story remains unbroken.