Artificial intelligence doesn’t usually feel slow when you read the headlines, but it definitely feels slow in your daily life. You’ve likely felt that annoying pause when a chatbot thinks too long or watched an image generator hang right at the finish line. Even a camera system that seems perfect in a lab can suddenly stutter when it hits the real-world shop floor. A model can be incredibly smart, but the way it actually runs is what determines if a user stays or walks away.

Performance optimization is exactly why finding the fastest way to run PyTorch inference has become a top priority for developers. NVIDIA’s new AITune toolkit steps in to fix this specific bottleneck. Inference is simply the phase where your trained model starts doing its job—turning your inputs into useful outputs. If your inference speeds are dragging, every single feature built on top of that model is going to feel sluggish and outdated to your users.

NVIDIA AITune Overview: Why Automated PyTorch Inference Speed Optimization Matters

Key Features: High-Performance PyTorch Inference with NVIDIA AITune

AITune sits in a fast-growing category of AI tooling that focuses less on new model architecture and more on a blunt question: how do you make an existing PyTorch model respond faster on real hardware?

This toolkit simplifies the optimization process by benchmarking compatible options to pinpoint the ideal performer for your specific environment. This automation eliminates the tedious guesswork often associated with manual performance testing.

- The toolkit is open source under Apache 2.0 licensing, allowing for flexible redistribution and reuse within the developer community.

- Built for NVIDIA GPU environments on Linux.

- Works at the PyTorch nn.Module level, so it can tune a full model or specific components.

- Benchmarks multiple inference backends, then selects the fastest compatible option.

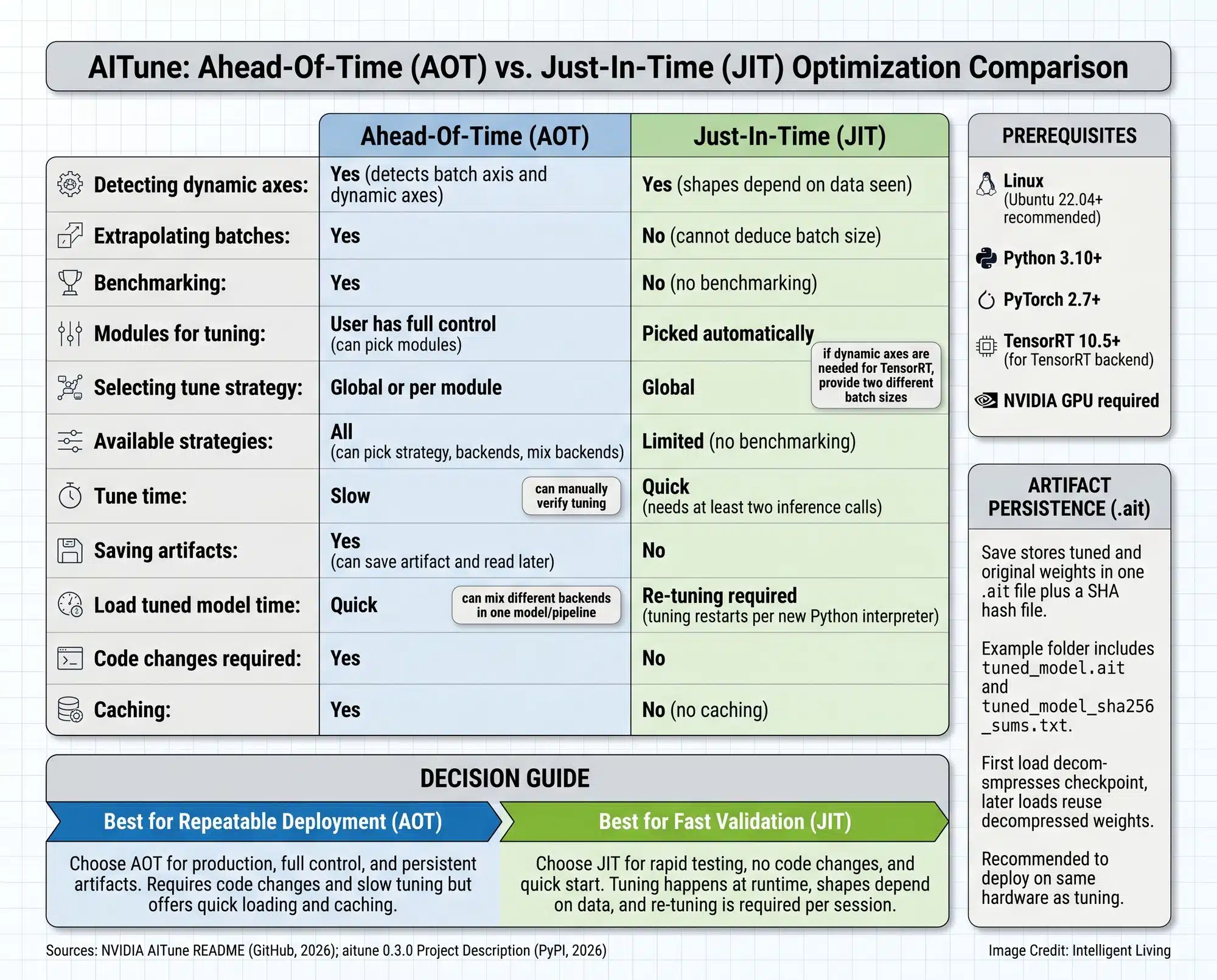

- Supports two modes: Ahead-Of-Time and Just-In-Time.

- Can validate correctness so speedups do not quietly break outputs.

- Designed to reduce the manual trial-and-error of which backend should be used.

Under the hood, the tuning process leans on measurable performance signals, especially latency (how long a single response takes) and throughput (how many responses can be served per second).

System accuracy is never sacrificed for speed. By keeping output verification integrated into the benchmarking cycle, the tool ensures your fastest model path still delivers the exact results you expect.

Prompt responses are vital in high-stakes environments. Users notice delays immediately when a voice transcription tool falls behind or a photo tagging feature hangs during a critical save operation.

What Happened: NVIDIA Released AITune and Why that Matters

Recent reports surrounding the NVIDIA AITune toolkit release highlight how this new software effectively simplifies the complex selection of inference backends for PyTorch models.

Why The Fastest Backend Changes by Model

Optimal performance is rarely universal because backends interact differently with various architectures. Minor compiler adjustments often significantly impact results, occasionally yielding unexpected performance shifts.

A familiar pattern often shows up when a model looks instant during a demo but starts hesitating once real inputs arrive. This performance shift occurs as the system moves from one-off runs to steady traffic. This behavior lines up with the reality that PyTorch compiler backend selection varies depending on which components of the model are captured during the trace.

Graph Breaks and The Slowdowns Nobody Planned For

Graph breaks trigger frequent slowdowns when the system fails to trace code into a single optimized graph, forcing a fallback to standard execution.

Identifying common graph break pressure points explains why a model might run smoothly on short inputs but struggle with more complex data. This often happens when processing long customer messages or images arriving at unpredictable sizes.

Performance Benchmarking: How AITune Identifies the Fastest PyTorch Inference Backend

Simplified Inference Optimization: Using AITune as an AI Performance Validator

AITune functions as a performance validator for model execution, testing every compatible path to ensure the system utilizes the most efficient route.

What An Inference Backend Really Means

An inference backend serves as the engine room for model execution. It determines how the math gets compiled, how kernels get scheduled, and the total overhead the system burns before any useful output reaches a user.

Your choice of backend has real-world consequences for timing and accuracy. For instance, an optimized camera system might flag a factory defect while the item is still on the conveyor belt. Without that speed, the notification could arrive half a second too late, missing the critical window entirely.

The Backends AITune Can Test

NVIDIA leverages the TensorRT high-performance inference runtime to build and execute highly optimized AI engines, providing a powerful alternative to standard PyTorch defaults.

Torch-TensorRT connects PyTorch’s compilation flow to TensorRT, and the Torch-TensorRT compile path lays out how models can be lowered into TensorRT-friendly graphs when operator coverage fits.

Teams seeking to lower their operational overhead often utilize the TorchAO framework for lower serving costs, which provides the necessary building blocks for efficient precision reduction and quantization.

How it Works

Developers accessing the AITune open-source project repository will find a streamlined Python API designed for rapid performance tuning across various pipelines and models.

Inspect, Wrap, Tune, Validate

AITune operates specifically at the nn.Module level, which is a vital distinction since most real-world AI applications are built as complex pipelines rather than single models.

A text workflow may include tokenization, a model, a post-processor, and business logic. An image generation workflow may include multiple components that behave differently under optimization. When tuning can target modules, it becomes possible to optimize the parts that dominate latency without disrupting everything else.

Correctness checks act as the guardrail. A speedup that changes outputs is not a win; it is a silent bug. In the real world, that bug looks like a customer support tool that suddenly mislabels tickets or a recommendation system that drifts into bizarre suggestions right when people expect it to be steady.

Picking A Winner without Guesswork

AITune’s goal is not to crown a universal champion. It is to measure what works best for a specific model, software stack, and GPU setup, then keep that configuration so the system stays consistent once it leaves the lab.

Production vs. Experimentation: Choosing Between AOT and JIT Tuning Modes

AOT vs. JIT: The Choice that Decides Whether this is Production or Experiment

Ahead-Of-Time (AOT) tuning offers a robust production workflow. By providing representative inputs, you can benchmark compatible backends and save the resulting configuration for consistent, repeatable model deployment.

Ahead-of-Time Tuning for Repeatable Deployment

AOT is built around deliberate inputs and repeatability. The workflow for ahead-of-time and just-in-time tuning modes describes how using a dataset or dataloader helps the system validate results while searching for speed.

This mode is easiest to understand if you imagine a team that finally gets response time under control and then refuses to lose it again. The tuned result can be carried forward so tomorrow’s deployment behaves like today’s test.

Just-in-Time Tuning for Fast Experiments

Just-In-Time tuning is the try-it-quickly path. It attempts optimization during runtime, which can be convenient when a developer needs a fast answer during early testing and wants to see which backend even has a chance of working.

The tradeoff is control. Runtime tuning can be less predictable across machines, which is why JIT fits best when the goal is learning fast, not shipping final behavior.

60-Second Reality Check: What You Need Before Trying It

Meeting the AITune hardware and software prerequisites is essential, as the tool targets NVIDIA GPU support on Linux with current versions of Python and PyTorch.

If dependency setup tends to be the part that derails a test run, using NVIDIA NGC prebuilt PyTorch containers simplifies the initial environment setup and wiring required for performance testing.

Even with strong software, hardware limits still show up in plain ways. If you are building AI tools at home, you’ll likely discover that memory capacity is your biggest hurdle. This becomes especially obvious when your chat prompts get longer or your conversation history starts to fill up the available space.

Managing KV cache growth and memory pressure is essential, as these factors often cause models to stumble on longer, more complex prompts when the GPU memory hit its limit.

Enterprise Applications: Reducing AI Inference Costs with Optimized Benchmarking

Where This Could Show Up Next

AITune’s adaptable architecture improves performance across diverse industrial use cases. An optimized inference stack ensures your applications remain responsive in both consumer and enterprise settings.

- Computer vision systems in retail or manufacturing that need faster frame processing.

- Speech recognition pipelines handling real-time transcription and call notes.

- High-volume NLP services where serving more requests per second lowers costs.

- Diffusion-style image generation workflows where different components benefit from different backends.

- Enterprise dashboards where a small latency drop changes the entire feel of the product.

- Internal tools where it works is not enough because waiting breaks the workflow.

- Industrial production pipelines incorporating optimized AI models for materials and manufacturing to accelerate delivery.

By addressing these latency bottlenecks, developers can ensure their AI tools remain competitive and cost-effective under heavy user load.

AI Infrastructure Economics: Balancing Latency, Throughput, and Power Constraints

Inference Has Two Price Tags: Latency and Throughput

Inference is the ongoing cost of AI. Training is a headline event, but inference is the daily expense, the daily latency, and the daily energy draw.

Calculating the tokens-per-watt efficiency of an AI model is crucial for determining how many answers a system can serve per unit of energy, money, and power.

Power Ceilings are Now a Design Constraint

Strict electricity limits are forcing a major shift in how data centers are built. The economic constraints of power-capped AI data centers highlight why managing heat and power consumption has become just as important as the hardware itself.

In plain terms, even a perfect speedup is only valuable if it fits inside the power envelope the hardware is allowed to draw.

Efficiency Tricks: Quantization and Memory Pressure

Low-precision methods remain a strategic choice for teams seeking significant performance gains. Applying low-bit quantization for faster AI inference enhances serving stability and significantly reduces the frequency of memory transfers.

For smaller computer setups, these efficiency tricks are a necessity. Reducing how much memory your model uses prevents frustrating crashes in the middle of a task, which is a common pain point for independent AI developers.

When One GPU is Not Enough

Distributed serving stacks are becoming their own layer of software, and the shift toward scalable multi-node inference serving solutions demonstrates how the industry is moving beyond the limits of a single machine.

AITune fits into this shift by treating inference performance as something that should be measured and selected, not guessed.

The Future of Efficient AI: Why AITune is Vital for PyTorch Developers

NVIDIA AITune shifts the focus of AI development from simple assumptions to evidence-based optimization. Instead of hoping a specific backend works, developers can now rely on real-world benchmarks to ensure their PyTorch models are hitting peak speeds on their specific hardware. This tool makes the complex task of selecting the fastest inference backend much more accessible for teams who need to ship reliable products without the traditional trial-and-error headache.

Delayed responses can render even the most advanced AI features useless. High-speed execution is not merely a technical goal; it defines the difference between a seamless user experience and a frustrating technical failure. Streamlining the path for more efficient model execution helps ensure that high-performance AI is no longer a luxury reserved for those with the time to hand-tune every single line of code.

What is NVIDIA AITune and How Do You Speed Up PyTorch Inference?

What is the simplest way to explain NVIDIA AITune?

AITune is an open-source tool that automatically tests different engines for your AI model to find the fastest one for your specific computer setup.

Does using AITune replace backends like TensorRT?

No, it acts as a selector that helps you choose between existing backends like TensorRT or Torch Inductor to see which one performs best.

What is the difference between AOT and JIT tuning modes?

Ahead-Of-Time (AOT) benchmarks and saves your settings for permanent use, while Just-In-Time (JIT) tests things on the fly for quick experiments.

What equipment do I need to run this toolkit?

You will need an NVIDIA GPU, a Linux operating system, and a recent version of PyTorch installed on your system.

Why do different AI models need different backends?

Every model is built differently, and a backend that is fast for a text bot might be slow for a camera system within the integrated PyTorch compiler and optimization stack to prevent unnecessary fallbacks to slower execution paths.