Fresh insights from a study on QR-SimEval and C-MAPS published in Nature Sensors argue that mapping and localization reach new levels of reliability when simulation treats motion and perception as one unified loop. Historical development cycles typically addressed movement and vision as isolated engineering hurdles, yet this research confirms that synchronization is vital for navigating erratic environments.

General audiences often associate quadruped robots with viral acrobatic displays, though the critical innovation lies in their environmental perception. Legacy initiatives, such as Astro’s sensor-heavy robot dog concept, exposed a persistent challenge: machines can achieve mechanical fluidity while remaining functionally blind to their surroundings.

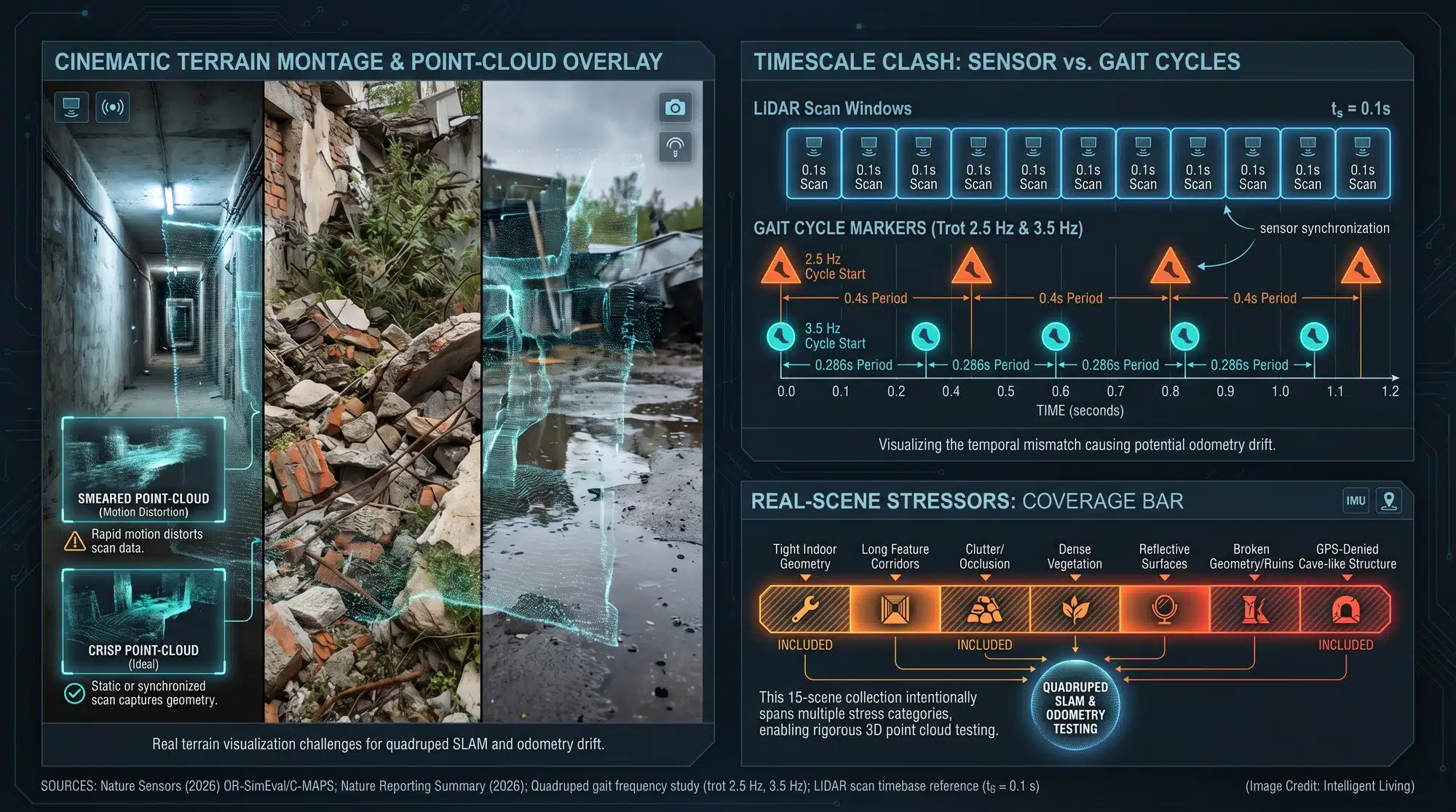

Cracked pavement and uneven surfaces inject significant noise into sensor data during navigation. Minor physical shuffles can degrade point clouds into flickering noise, rendering maps unusable. Reducing this sensor noise is essential if we want these robots to move beyond laboratory floors and into the grit of real-world infrastructure.

Nature Sensors Analysis: Improving Robot Dog Mapping through Agentic Simulation

Core Research Summary: Motion and Perception Integration

Journal editors at Nature Sensors published a comprehensive research package, pairing a primary article with a companion commentary to clarify these complex technical concepts. The unified motion-plus-perception framework describes why mapping needs to be evaluated under the same jolts, slips, and timing quirks a walking robot actually experiences.

Principal findings suggest that QR-SimEval and C-MAPS simplify quadruped SLAM benchmarking under realistic stress, providing public code and data for independent validation.

Technical Highlights: QR-SimEval and C-MAPS Feature Overview

- Framework Release: Scientists introduced the QR-SimEval evaluation pipeline and the C-MAPS integrated mapping system to establish robust quadruped SLAM standards.

- Operational Focus: The research specifically addresses odometry drift and mapping inconsistencies caused by high-vibration legged locomotion.

- Benchmarking Scope: Experimental data covers nine unique SLAM implementations across 15 simulated environments, followed by rigorous real-world validation.

- Why the claim is testable: For transparency, the researchers archived the QR-SimEval code under an official Zenodo DOI alongside the live repository.

- What the numbers rest on: The published research includes source-data spreadsheets tracking ATE and RPE across 15 scenarios, which makes the comparisons significantly easier for external teams to audit.

- Field Utility: Enhancing mapping reliability makes quadruped platforms viable for hazardous inspection and environmental monitoring in areas inaccessible to wheeled vehicles.

Localization Challenges: Why Quadruped Robots Struggle in Messy Environments

The Gait Problem: Motion Distorts Sensors

Legged robot locomotion presents unique obstacles for modern perception stacks, primarily due to the mechanical noise inherent in walking. Walking gaits introduce specific physical artifacts that distort sensor data:

- Vibration: Constant rhythmic impacts rattle sensitive internal components.

- Viewpoint Changes: Rapid shifts in orientation complicate frame-to-frame matching.

- Nonuniform Motion: Unpredictable velocity changes can smear LiDAR points.

- Desynchronization: Jerky movements often desynchronize critical sensor arrays.

Engineers tackle these motion-induced errors first because localization depends on stable sensor input. Traditional SLAM frameworks tend to perform best when movement is smooth and sensor timing is undisturbed. A quadruped does not cooperate with those assumptions, so even a robot that can keep its balance can still end up with a map that slowly drifts away from reality.
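To make the LiDAR smearing problem concrete, here is a minimal sketch of scan deskewing, a common way to undo motion distortion inside a single sweep. It is not taken from the QR-SimEval or C-MAPS code. The function name `deskew_scan` and the constant-velocity, yaw-only motion model are assumptions chosen for illustration, and a legged robot's pitch and roll would need a fuller model.

```python
import math

def deskew_scan(points, times, velocity, yaw_rate):
    """Re-express each LiDAR point in the sensor frame at scan start.

    points:   list of (x, y, z), each in the sensor frame at its capture time
    times:    capture time of each point, in seconds since scan start
    velocity: (vx, vy, vz), assumed constant, expressed in the scan-start frame
    yaw_rate: assumed constant yaw rate in rad/s (pitch and roll are ignored)
    """
    vx, vy, vz = velocity
    corrected = []
    for (px, py, pz), t in zip(points, times):
        # Yaw accumulated by the sensor between scan start and this point.
        c, s = math.cos(yaw_rate * t), math.sin(yaw_rate * t)
        # Rotate into the scan-start orientation, then add the translation
        # the sensor accumulated over the same interval.
        corrected.append((
            c * px - s * py + vx * t,
            s * px + c * py + vy * t,
            pz + vz * t,
        ))
    return corrected
```

If the assumed motion is wrong, which is exactly what an uneven gait tends to cause, a single static object is still spread across several positions after correction. That is the smear described in the list above.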

Everyday consumer technology provides a parallel through shaky smartphone videos recorded while running; the scene remains static, yet the data stream becomes erratic.

Why Classic LiDAR SLAM Assumptions Start to Break

Many modern 3D mapping frameworks trace back to automotive-centric concepts, such as LOAM’s LiDAR odometry and mapping baseline, which build a world model by stitching point clouds together. Legged locomotion introduces irregular motion noise that breaks those assumptions, so it calls for more resilient approaches to world modeling.

Mechanical pitching and vertical oscillations frequently mislead mapping pipelines, causing them to misinterpret motion artifacts as legitimate environmental features. Such confusion transforms odometry drift into a gradual slide that can lead a robot down the wrong corridor.
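A rough way to see why small errors turn into a "gradual slide" is to integrate a tiny heading error over many steps. The sketch below is a toy dead-reckoning model, not anything from the study. The function names and the fixed per-step heading bias are assumptions, standing in for whatever error a pipeline picks up when it mistakes gait motion for scene structure.

```python
import math

def dead_reckon(steps, step_len, heading_bias_per_step):
    """Integrate straight-line walking with a small heading error each step.

    The true path runs along the x axis; the bias (radians per step) is an
    assumed stand-in for orientation error picked up from motion artifacts.
    """
    x = y = heading = 0.0
    for _ in range(steps):
        heading += heading_bias_per_step
        x += step_len * math.cos(heading)
        y += step_len * math.sin(heading)
    return x, y

def lateral_drift(steps, step_len, bias_deg):
    """Sideways distance between the estimated end point and the true path."""
    _, y = dead_reckon(steps, step_len, math.radians(bias_deg))
    return abs(y)

if __name__ == "__main__":
    for n in (50, 100, 200):
        print(n, "steps:", round(lateral_drift(n, 0.3, 0.05), 3), "m sideways")
```

In this toy model, doubling the distance walked more than doubles the sideways error, because every new step inherits all of the heading error accumulated before it. A bias too small to notice step by step can therefore still put the estimate in the wrong corridor.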

Blind Locomotion and the Perception Tradeoff

There is also a reason some legged designs leaned into “blind locomotion,” where the robot prioritizes balance and contact sensing over cameras and external perception. The story of MIT’s Mini Cheetah moving without vision captures the tradeoff: vision and LiDAR can be noisy, and relying on them can slow the robot down if the perception stack is not stable.

Operational observations reveal these technical tradeoffs through subtle hesitations, such as a robot pausing at a curb because conflicting sensor data obscures depth.

Outdoor Failure Modes, From Vegetation to Water Glare

Perception accuracy often degrades as robots venture beyond controlled laboratory settings into unpredictable field conditions. Perception pipelines frequently struggle when encountering these common outdoor obstacles:

- Snow and Vegetation: Shifting or dense surfaces create confusing depth readings.

- Reflective Water: Glare and transparency can derail LiDAR and camera data.

- Featureless Surfaces: Smooth walls or open fields offer few anchors for localization.

Research on perceptive legged locomotion in unstructured environments continues to highlight that environmental awareness remains the most brittle part of field robotics. A simple patch of tall grass can turn into a moving wall in the point cloud, and the robot has to choose between cautious navigation and fast navigation.

Why Pre-Mapping Still Shows Up in Practice

Commercial platforms often look most impressive when operating in spaces that were mapped ahead of time, which hides the fragility of their localization stacks. One early insight from Spot’s pre-mapped autonomy constraint is simple: when the surroundings are not mapped ahead of time, localization becomes fragile and operators end up compensating.

Benchmarking Quadruped SLAM: Evaluating QR-SimEval for Reliable Motion Testing

Architectural Innovation: Developing the QR-SimEval and C-MAPS Framework

Operational testing via QR-SimEval transforms evaluation into a dynamic closed loop, moving beyond the limitations of static benchmarking. The project lists motion simulation and planning as distinct modules, with the open-source QR-SimEval code repository making the ‘agentic’ label concrete.

Modular architectural frameworks offer significant scalability, enabling developers to swap gait controllers or sensor models without extensive system overhauls. Mismatches in physics represent the ‘reality gap,’ a challenge addressed in research regarding robust sim-to-real transfer for quadruped platforms where timing discrepancies can derail performance.

Advanced evaluation cycles align with modern reinforcement learning architectures, where simulation-based stress testing precedes physical hardware deployment. The Eureka-style reward discovery approach for training robots is one recognizable example of how fast iteration becomes possible when the evaluation environment can be scaled.

Scalable benchmarking protocols are expanding into broader fields, particularly engineering workflows utilizing GPU-accelerated agentic AI for rapid parallel iteration.

C-MAPS: The Multisensor Mapping System They Benchmarked

Researchers benchmarked C-MAPS as a unified mapping architecture alongside several established industry baselines. In simple terms, it aims to fuse sensor streams so a robot can maintain a coherent 3D picture even when motion is rough and timing is imperfect. The key detail is not the acronym itself, but the promise that mapping gets treated as part of the motion problem, not a separate software module that only works on smooth floors.

Detailed technical documentation on C-MAPS covers the testing protocols and the results at a granular level.

Testing Methodology: Validating Quadruped SLAM Performance Metrics

Benchmarking results cover nine unique SLAM implementations across 15 simulated environments, followed by rigorous real-world validation. The methodological backbone is that the same motion profiles and sensor conditions are applied consistently across systems, which is what makes comparisons meaningful instead of anecdotal.
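The idea of applying identical conditions to every system can be sketched as a small harness. This is not the QR-SimEval pipeline. The function names, the seeded Gaussian disturbance, and the two toy "systems" are all hypothetical, and exist only to show the control-for-conditions pattern.

```python
import random

def make_scenario(seed, n_steps=50):
    """Build a reproducible per-step disturbance profile for one scenario.

    A seeded generator means every system sees exactly the same jolts.
    """
    rng = random.Random(seed)
    return [rng.gauss(0.0, 0.02) for _ in range(n_steps)]

def run_benchmark(systems, scenario_seeds):
    """Score every system on every scenario under identical conditions.

    systems: dict mapping a name to a callable that takes the disturbance
             profile and returns an error score (lower is better).
    """
    results = {}
    for seed in scenario_seeds:
        disturbance = make_scenario(seed)  # built once, shared by all systems
        for name, system in systems.items():
            results[(name, seed)] = system(disturbance)
    return results

# Two toy stand-ins for SLAM systems, purely illustrative.
def raw_integrator(disturbance):
    return abs(sum(disturbance))

def damped_integrator(disturbance):
    return abs(sum(0.5 * d for d in disturbance))

if __name__ == "__main__":
    systems = {"raw": raw_integrator, "damped": damped_integrator}
    for key, score in sorted(run_benchmark(systems, [1, 2, 3]).items()):
        print(key, round(score, 4))
```

Because each scenario is generated once and passed to every system, a difference in score reflects the system rather than a lucky or unlucky run.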

Trajectory accuracy metrics serve as the primary indicators of odometry drift in the research review materials. Absolute Trajectory Error (ATE) measures how far the estimated path sits from the true path overall, while Relative Pose Error (RPE) measures how much error accumulates over short segments. Both are discussed in the peer review notes on ATE and RPE evaluation as ways to quantify how well a robot maintains its path.
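For readers who want to see what these two metrics compute, here is a simplified 2D sketch. It is not the study's evaluation code. It assumes both trajectories are already time-matched and expressed in the same frame (evaluation tools typically perform a rigid alignment first), it represents poses as `(x, y, yaw)` tuples, and it reports only the translational part of RPE.

```python
import math

def ate_rmse(gt_xy, est_xy):
    """Absolute Trajectory Error: RMSE of position differences.

    Assumes the two trajectories are time-matched and share one frame.
    """
    if len(gt_xy) != len(est_xy) or not gt_xy:
        raise ValueError("trajectories must be non-empty and equally long")
    sq = [(gx - ex) ** 2 + (gy - ey) ** 2
          for (gx, gy), (ex, ey) in zip(gt_xy, est_xy)]
    return math.sqrt(sum(sq) / len(sq))

def relative_translation(pose_a, pose_b):
    """Translation from pose_a to pose_b, expressed in pose_a's frame."""
    ax, ay, a_yaw = pose_a
    bx, by, _ = pose_b
    dx, dy = bx - ax, by - ay
    c, s = math.cos(a_yaw), math.sin(a_yaw)
    return (c * dx + s * dy, -s * dx + c * dy)

def rpe_trans_rmse(gt_poses, est_poses, delta=1):
    """Relative Pose Error (translation only) over segments of `delta` steps."""
    errs = []
    for i in range(len(gt_poses) - delta):
        gx, gy = relative_translation(gt_poses[i], gt_poses[i + delta])
        ex, ey = relative_translation(est_poses[i], est_poses[i + delta])
        errs.append((gx - ex) ** 2 + (gy - ey) ** 2)
    if not errs:
        raise ValueError("trajectory too short for the chosen delta")
    return math.sqrt(sum(errs) / len(errs))

if __name__ == "__main__":
    gt = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
    est = [(0.0, 0.0, 0.0), (1.0, 0.1, 0.0), (2.0, 0.2, 0.0)]
    print("ATE:", ate_rmse([p[:2] for p in gt], [p[:2] for p in est]))
    print("RPE:", rpe_trans_rmse(gt, est))
```

The two numbers answer different questions. An estimate that is shifted by a constant offset has a large ATE but zero RPE, while a slow sideways creep shows up as a small, steady RPE that compounds into a growing ATE.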

Field operations rely on these metrics because they determine whether a robot identifies a specific doorway or drifts into a parallel corridor.

Field Data and Scene Diversity

Dataset descriptions include 15 authentic scenes, further detailed in the research summary of field testing environments, captured between June and December 2024.

Environmental diversity remains essential for testing, as localization failures often originate from the cumulative impact of minor sensor discrepancies.

High-Value Applications: Real-World Use Cases for Quadruped SLAM Technology

- Infrastructure Inspection: Maintaining steady 3D scans in confined areas like bridges and tunnels allows quadrupeds to function as mobile sensor platforms. This reduces the need for human repositioning in hazardous inspection zones. Broader surveys of advanced multi-sensor fusion SLAM for 3D LiDAR underline why this localization problem keeps resurfacing across industries.

- Environmental Monitoring: Conservationists and environmental monitors require autonomous sensing tools capable of traversing uneven terrain for erosion and habitat tracking. That demand pattern shows up in systems like autonomous environmental cleanup platforms utilizing advanced navigation, where reliability matters more than a flashy demo. When localization holds, the useful part is repeat visits that produce comparable maps over time.

- Emergency Reconnaissance: Safety protocols in unstable or smoke-filled industrial zones benefit from early 3D mapping, which significantly reduces risk for emergency responders. The same safety-first logic appears in the Thermite RS3 firefighting robot, where harsh environments punish brittle autonomy. The difference between a helpful robot and a liability can be a single navigation mistake.

- Industrial Surveying and Digital Twins: Construction sites and mines change shape quickly, so the value of mapping is in frequent updates, not a single scan. This fits the simulation-first mindset in industrial digital twin workflows for factory-scale operations, where virtual replicas get updated to support planning, safety, and throughput. A robot that can re-map reliably turns digital twins into something closer to live telemetry.

- Health and Safety Monitoring: Hospitals and quarantine workflows highlighted why remote presence matters. Earlier experiments like contact-free vital sign checks using Spot show the practical constraint: navigation and localization need to be dependable in real buildings, not only in controlled demos. A hallway that looks the same at 2 a.m. can still confuse a map if the sensors drift.

- Utility and Rail Corridor Inspection: Long, linear assets like power lines and rail lines reward automation because human inspection is dangerous and slow. Related efforts such as autonomous drones for rail and utility corridor inspection illustrate the same core requirement: reliable localization plus reliable sensing. The best inspection workflows do not require a human to babysit every turn.

- Subterranean and Underground Exploration: Underground spaces push mapping to the limit because GPS is absent and sensors degrade fast. Technical reports on CERBERUS in the DARPA Subterranean Challenge show how quickly navigation becomes a system-of-systems problem in tunnels, caves, and urban underground spaces.

Future Outlook: Validating Industrial Readiness for Quadruped SLAM Systems

Reality Check: What This Does and Doesn’t Prove Yet

Data-driven assertions indicate that mapping stability and drift resistance show measurable improvements under the newly proposed framework. The honest way to read that is bounded: the study reports strong performance within its simulated environments and collected scenes, using metrics that are widely accepted for odometry accuracy.

Critical technical constraints remain for field deployments, primarily regarding power management, thermal stability, and sensor maintenance. Successful autonomy relies on a synchronized stack of capabilities where the overall system is only as strong as its weakest link. The broader conversation about viral robot videos versus industrial readiness keeps circling the same lesson: repeatability matters more than a highlight reel.

Strategic Observations: Monitoring the Evolution of Legged Robotics

- Independent replications often utilize standardized robotic data logging formats to report where the approach holds up or breaks.

- Industry-wide advancement depends on legged-robot initiatives adopting motion-aware testing to evaluate perception under real gait dynamics.

- Market signals suggest that buyers in inspection and monitoring sectors prioritize localization reliability over raw athletic performance.

Mastering the Path Ahead: Why Agentic Simulation Redefines Field Exploration

Implementing agentic simulation builds a rigorous engineering culture where autonomy is validated against extreme physical stress before field deployment. Stressing these systems under realistic gait dynamics helps ensure that mapping stays solid when the stakes are high. As the global sensor supply chain lowers the barrier to entry, aided by China’s automotive LiDAR price collapse, high-end 3D sensing hardware is becoming a standard tool for legged robots.

Industry experts envision quadrupeds assuming hazardous or repetitive roles, effectively removing humans from high-risk environmental tasks. Transitioning quadruped SLAM from a laboratory curiosity into a dependable field tool opens doors for deeper environmental monitoring and safer infrastructure surveys. When a robot dog can map a cave or a collapsing building without losing its way, the technology finally meets the potential we’ve all been waiting for.

Essential Insights: Quadruped SLAM and Agentic Simulation FAQ

How Do Robot Dogs Map Difficult Terrain?

Quadruped systems use LiDAR and cameras to build 3D world models through a process called Simultaneous Localization and Mapping (SLAM). The robot builds a map of an unfamiliar space while simultaneously tracking its own position within that map.

Why Do Robot Dogs Lose Their Place While Walking?

Legged motion is inherently jerky, which introduces vibrations and sensor noise that can lead to odometry drift. If the mapping software cannot distinguish between robot movement and actual terrain changes, the world model becomes distorted.

What Makes Agentic Simulation Better for Testing?

Agentic evaluation loops enable engineers to validate perception and gait controllers as a single, integrated system under realistic stress. Testing perception under realistic quadruped dynamics helps close the ‘reality gap’ that often derails hardware performance.

Can Robot Dogs Use LiDAR to Map Caves?

Yes, quadruped SLAM is particularly effective in GPS-denied environments like caves or tunnels. Integrated systems like C-MAPS fuse sensor data to maintain clear navigation even when lighting is poor and terrain is unstable.

How Does Motion Affect Robot Sensors?

Physical oscillations from uneven gaits generate rapid viewpoint shifts that often smear LiDAR data and desynchronize sensor arrays. Proper simulation ensures that the mapping pipeline stays stable despite the physical chaos of quadruped movement.