DeepSeek’s latest V4 model running on Huawei Ascend hardware marks a definitive pivot in the global AI software stack. While headlines focus on how DeepSeek-V4 was adapted specifically for Huawei chips, the real story lies in the software reality check for Ascend 950-based clusters. If a massive, modern model can train without the NVIDIA CUDA ecosystem, the competitive map for AI infrastructure shifts from policy debates to measurable engineering metrics.

The success of this deployment hinges on whether Huawei’s CANN stack can handle complex distributed training and low-precision math well enough that real-world products stay responsive. This transition tests if China’s inference stack can finally achieve a stable CUDA exit, proving that hardware independence is possible when software compilers and runtime controls are tuned for peak efficiency.

Reducing dependence on CUDA has long been a strategic objective for China. The practical challenge is more immediate: Huawei’s CANN stack must now prove it can manage compilers, runtime control, and distributed training without slowing down real-world products. The idea of a successful CUDA exit for China’s inference stack is now getting tested the way platforms always get tested: by whether they stay steady when everyday demand hits at the same time.

DeepSeek-V4 on Huawei Ascend: Proving the CUDA Exit Strategy

AI Stack Baseline: CANN vs CUDA and DeepSeek-V4 Deployment Metrics

CANN, CUDA, FP8, and FP4 might seem like alphabet soup at first, but the technical stakes for AI infrastructure are actually easy to grasp. These quick facts map the story to concrete parts of the stack such as compilers, communication libraries, and data-center limits.

- DeepSeek previewed V4 and described it as adapted for Ascend hardware, with Ascend 950-based clusters positioned as the target deployment.

- CANN is Huawei’s software stack for Ascend NPUs, covering graph compilation, operators, runtime, and cluster communication.

- CUDA is an entire software ecosystem, not just a programming model, and its depth is why CUDA lock-in is so stubborn.

- Low-precision formats like FP8 and FP4 reduce bandwidth pressure, which often matters more than raw compute when prompts get long.

- Power Usage Effectiveness links data center performance to energy overhead by comparing total facility power to IT power.

Chip performance remains secondary to software predictability; the real battle centers on whether a stack can maintain cluster stability under load. Performance bottlenecks often stem from background operations such as memory orchestration, launch overhead, and device-state synchronization.

DeepSeek-V4 Adaptation: Kernel Alignment and Cluster-Scale Deployment on Ascend 950

This adaptation marks a fundamental realignment of infrastructure expectations, proving that large models can thrive outside the CUDA-first ecosystem. DeepSeek previewed V4 and said it was adapted to run on Ascend hardware, with Ascend 950-based clusters described as the intended environment. That phrasing implies that kernels, memory movement, and distributed collectives were aligned with Huawei’s toolchain instead of the CUDA-first assumptions that dominate most large language model deployments.

At the cluster level, liquid-cooled Ascend supernodes capable of scaling across thousands of nodes raise the bar for software reliability. Performance losses typically stem from wasted bandwidth and communication stalls when thousands of accelerators must operate as a unified machine.

Everyday workflows reveal the shift. A customer support chatbot requiring fast time to first token may now be evaluated on Ascend’s performance during high-stakes prefill stages.

Similarly, a long-context research workflow may start asking whether graph compilation and operator fusion stay consistent across updates. This shift moves the focus away from single GPU benchmarks that only look good for a week.

Infrastructure Competitiveness: CANN Software Capabilities vs. the CUDA Ecosystem Moat

CANN Architecture Defined: The NPU Software Foundation for Ascend Processors

CANN as a Compiler Backend: Bridging High-Level Models and Ascend Silicon

CANN is the software layer that translates model code into something Ascend processors can execute. Without it, the chip is just silicon. With it, Ascend becomes a programmable accelerator with a full pipeline for compiling graphs, running kernels, and orchestrating data movement.

Python-based models do not inherently translate into hardware instructions, creating a gap that the CANN stack must bridge.

Something has to turn ‘attention,’ ‘matrix multiply,’ and ‘layer norm’ into kernels, then schedule those kernels while moving data between HBM, caches, and device memory. This complex coordination defines the daily output of a stack like CANN.

Core CANN Components: Graph Compilation, Operator Libraries, and NPU Runtime

The CANN stack that powers Ascend NPUs lays out the major pieces: graph compilation, operator libraries, runtime execution, and the tools that glue them together. For teams building applications, the AscendCL application development layer is the API surface that connects a host program to device execution, including streams, memory, and kernel launches.

Imagine a high-volume kitchen during a dinner rush; without a shared plan, cooks work at cross purposes and resources are wasted. Neural network execution faces similar risks, requiring graph compilation to synchronize every operation.

Low-Level Development: Ascend C Kernel Programming and Memory Tiling APIs

When built-in kernels are not enough, custom work begins. The Ascend C kernel and tiling APIs describe how operators get registered and how tiling and data layout decisions are expressed so the device can stay fed without choking on memory traffic. That level of detail is why a stack like CANN is not just a branding layer; it is where performance decisions become code.

NVIDIA CUDA Ecosystem Dominance: Software Maturity and Developer Lock-In

NVIDIA Runtime vs. Driver APIs: Decades of Battle-Tested Tooling Maturity

CUDA functions as a layered ecosystem rather than a single feature, encompassing runtime APIs, driver-level control, and mature math libraries.

The difference between the driver and runtime APIs makes CUDA difficult to replace. It provides both high-level convenience and the surgical control required in production environments to resolve edge-case performance regressions.

cuDNN and Optimized Math Libraries: Encoding Global Performance Standards

CUDA’s library depth is the second moat. A mature kernel layer shows up in the graph API and operation fusion model used by cuDNN to choose kernels, fuse operations, and manage heuristics at scale. When a model “just works” on new GPU generations, the experience feels seamless. This smoothness results from library teams spending years refining common patterns into well-tuned defaults.

Distributed Scaling: NCCL Collectives and PTX Compiler Efficiency

Distributed training is the third moat. The NCCL collectives used for multi-GPU clusters describe how primitives like AllReduce and AllGather coordinate across devices, and those primitives have been tuned for years across hardware generations. Another sticky layer is how production stacks lean on PTX compiler APIs for runtime compilation and caching, minimizing setup costs for repetitive kernels.

Switching stacks proves expensive because the true cost involves more than simple code migration. Real-world developers rely on years of trust in profiling tools, debugging clarity, and the ability to reproduce consistent results across drivers and cluster fabrics.

Architectural Comparison: CANN vs. CUDA Programming and Runtime Layers

AI Stack Anatomy: Comparing Compilation, Runtime, and Cluster Scaling

Most comparisons get stuck at marketing words. A more useful comparison walks the same ladder both stacks must climb: programming surface, compilation, runtime scheduling, and cluster communication.

CUDA maintains momentum because its ladder is already familiar to millions of engineers, with most modern tools assuming it as the default.

CANN now climbs that same ladder in a separate ecosystem. Its primary goal is transforming Ascend clusters into routine infrastructure rather than exotic hardware.

Engineers troubleshooting system slowdowns typically climb a specific technical ladder. This layer map matches the debugging path for real-world AI systems:

- Programming Surface: How code expresses work, from framework operators to custom kernels.

- Compilation and Graph Capture: How a graph is compiled, fused, and prepared for execution.

- Runtime and Memory Scheduling: How streams, memory transfers, and synchronization are coordinated.

- Communication and Scaling: How collectives and topology choices keep many devices moving together.

- Tooling and Profiling: How teams measure bottlenecks and fix them without guessing.

Effective middle layers optimize system performance by aggressively managing launch overhead. Workflows built around CUDA graphs with explicit dependencies can cut overhead by capturing a whole sequence once and replaying it, which is the kind of operational muscle memory that makes a platform feel effortless over time.

CANN Model Execution Pipelines: Graph Mode and Operator Performance

Computation Graph Mode vs. Single-Operator Execution Patterns

Graph mode is easiest to understand as “optimize the whole route before driving.” In graph mode versus single-operator mode, CANN support two execution styles, and graph mode is the one built for large, repeated workloads.

In graph mode, the system represents the full network as a unified computational graph. This global view enables operator fusion and strategic scheduling for improved memory reuse across parallel streams. Single-operator mode is closer to executing one block at a time, which can be useful for certain workflows, but graph mode is generally where large, complex models get their efficiency, especially when the work is bandwidth-bound.

Runtime Memory Scheduling: Streams, Event Synchronization, and Prefill Stalls

Streams are the lanes on the highway, and synchronization is what prevents a pileup. With inter-stream synchronization using events, a stream records a completion point and another stream waits on it, keeping ordering explicit.

System latency often originates from coordination errors rather than hardware compute limits, manifesting as noticeable lags during prefill stages. This manifests technically when a voice assistant processes short prompts instantly but stalls on long-context transcripts or complex attachments. At that moment, coordination and memory movement become the real bottlenecks, especially when no implicit device or stream synchronization forces the code to make ordering explicit.

Huawei HCCL: Topology-Aware Collectives for Large-Scale Distributed Training

When training spreads across many accelerators, communication becomes the timekeeper. HCCL, Huawei’s collective communication library, automatically selects topology algorithms like Ring, Mesh, and recursive halving-doubling to match specific message sizes.

In distributed training, these collectives decide how efficiently gradients synchronize across devices. The open HCCL codebase breaks the library into modules and algorithms, which is useful context when performance questions turn into debugging work.

AI Inference Metrics: Low-Precision Formats and Market Adoption Signals

Low-Precision Arithmetic: Technical Deep Dive into FP8 and MXFP4 Quantization

FP8 Format Standards: Balancing Range and Precision with E4M3 and E5M2

Low-precision arithmetic is central to modern AI performance because it reduces memory bandwidth and energy cost per token. The FP8 formats E4M3 and E5M2 show why two formats exist and how exponent and mantissa bits trade range for precision.

Allocating more bits to the exponent extends numerical range, while prioritizing the mantissa preserves finer precision detail. That tradeoff matters when weights and activations swing between tiny and huge values across different layers.

MXFP4 Microscaling: Block Scaling Efficiency for the E2M1 Format

The Open Compute Project’s microscaling specification defines MXFP4 as a block-scaled FP4 format using E2M1 with an E8M0 scale, which is a way of packing values far more tightly while keeping accuracy usable through shared scaling.

The key idea is that values get grouped into blocks that share a scale. That shared scale helps FP4 behave like something more stable than raw 4-bit numbers would suggest, especially in inference settings where memory bandwidth can dominate runtime.

Bandwidth Optimization: How FP8 Precision Reduces Long-Context Prefill Latency

For long prompts, prefill operations dominate the time to first token. These operations depend heavily on efficient memory movement and cache behavior. In practice, utilizing FP8 as a high-efficiency, cost-effective precision choice is often about squeezing bandwidth and power without breaking accuracy.

The way Ascend 950PR and Atlas 350 manage the prefill-versus-decode split demonstrates how low-precision formats maintain responsive latency. User experience often reflects these technical tradeoffs; a tool might feel instant for short queries but lag significantly the moment a long conversation history enters the input box.

Infrastructure Adoption: Deploying DeepSeek-V4 on Production Clusters

Software Integration Metrics: Stable Version Matrices and Performance Consistency

Real-world adoption manifests through the steady accumulation of working integrations and reproducible performance metrics across stable version matrices. The tell is whether a team can deploy, monitor, and upgrade without relearning everything each quarter.

Compatibility with existing serving stacks serves as an operational benchmark for ecosystem health. The Ascend backend support in vLLM shows how a widely used inference server can be adapted to Ascend hardware in a way that is operational rather than theoretical.

Cross-Hardware Portability: vLLM and ONNX Runtime CANN Execution Providers

Enterprise deployments face strict portability constraints. Consequently, model-serving pipelines are judged on their ability to move seamlessly between different hardware environments. The ONNX Runtime CANN execution provider for portability points to a deployment path that matters in settings where the runtime is shared across teams and products.

Benchmark discipline is another reality check. Implementing automated PyTorch benchmarking to ensure repeatable tuning establishes the kind of disciplined workflow that turns an architecture argument into repeatable numbers a team can trust, especially when performance must be defended in change-control reviews.

Production Stability: KV Cache Compression for Predictable Long-Context Latency

When long prompts hit a memory wall, techniques for compressing the KV cache can change concurrency without changing the model. Users benefit directly from these optimizations through fewer stalls, reduced request drops, and predictable latency during busy hours.

Ecosystem maturity builds through consistent performance over multiple cycles. Engineers require profiling confidence, clear error reporting, and sufficient operator coverage to keep models on fast execution paths.

Collecting AscendCL, runtime, and GE traces in MindStudio provides the practical instrumentation needed for performance audits. This data is the starting point for resolving sudden slowdowns in live environments.

7 Operational Impacts: How a CUDA Exit Reshapes the AI Product Lifecycle

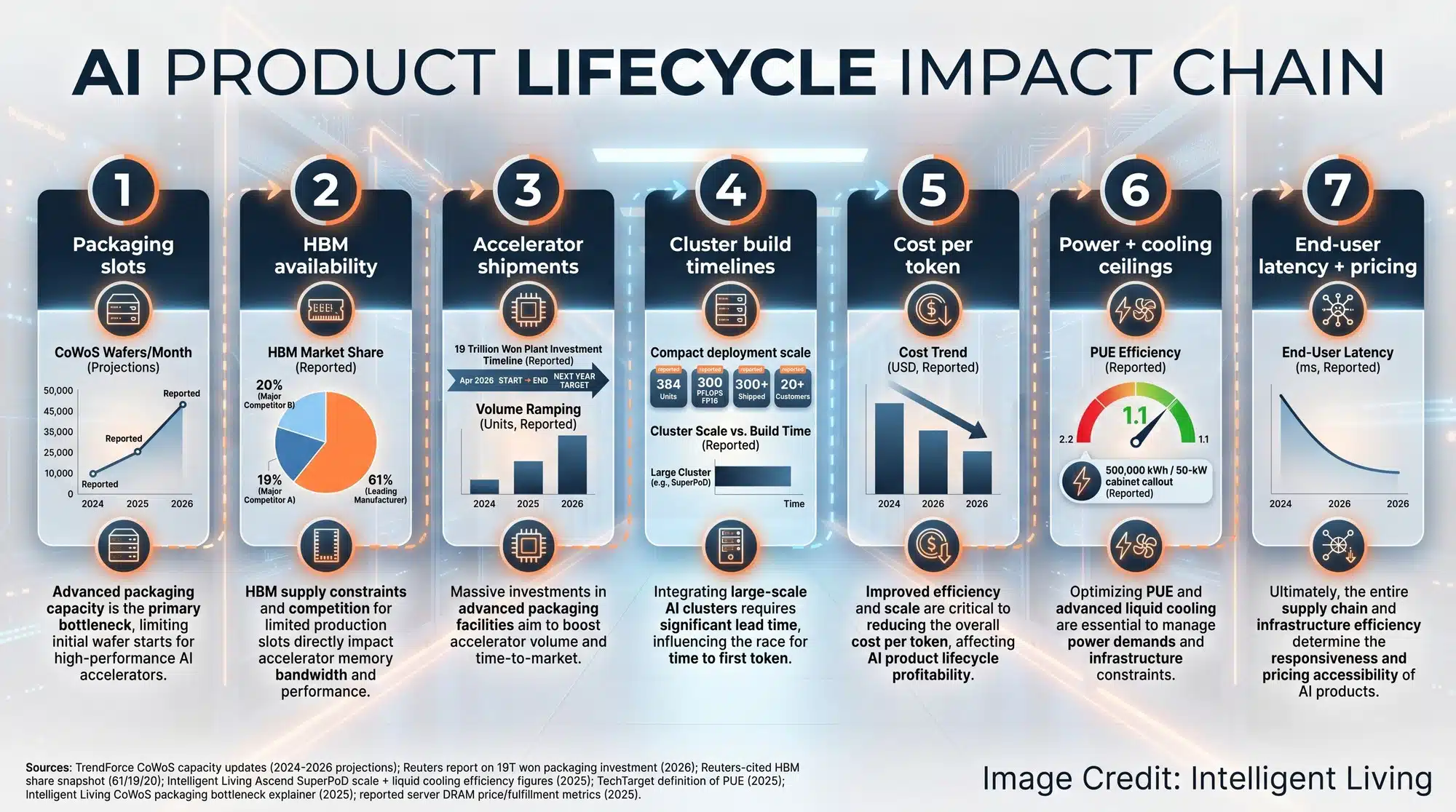

Infrastructure shifts aren’t just technical; they reshape procurement budgets and daily user experiences. These seven impacts show exactly how a CUDA exit changes the AI product lifecycle:

- Lower cost per token becomes more plausible when hardware and software can be sourced and tuned locally, and analyzing the fundamental factors shaping the cost of AI compute reveals how packaging and materials quietly shape that price in real deployments.

- Hardware availability can improve when packaging capacity stops gating shipments, and the ongoing bottlenecks in CoWoS advanced packaging often decide whether paper specs become shippable systems.

- Consumer hardware pricing can feel the ripple effects when AI datacenter memory demand squeezes supply chains, and the ripple effects of the HBM squeeze on DDR5 supply can show up as higher prices and longer lead times.

- GPU backorder cycles can stay volatile until memory stacking and final test capacity expand, and the strategic SK hynix PT7 advanced packaging expansion highlights why final assembly capacity is now part of the AI roadmap.

- Data center power ceilings increasingly decide what is feasible, and adopting water-efficient cooling strategies for modern AI facilities highlights why PUE is only part of the story.

- The host CPU layer can shift independently of the accelerator stack, and enabling CUDA host support on RISC-V architectures makes the split clear between an open host CPU and a proprietary accelerator stack.

- Long-context performance often hinges on prefill work rather than decoding, and utilizing IndexCache to reduce the prefill latency tax on long contexts connects long prompts to the kind of latency users notice in everyday tools.

One conclusion stands out: long-term market leadership belongs to the stack that optimizes total cost of ownership and ensures operational reliability. If CANN can keep shrinking the gap in tooling and kernel coverage, the “default” assumption in parts of the market may start to split.

Competitive Trajectory: Open Source Governance and the Future of CANN vs. CUDA

The DeepSeek-V4 deployment establishes a technical precedent, shifting the focus toward verifiable performance metrics on Ascend hardware. Momentum also rises when CANN operators and core components move under open-source governance, because portability and bug-fixing speed start to depend on shared tooling instead of private roadmaps.

On the physical infrastructure side, integrating 800-volt HVDC GaN power stages into AI racks is a reminder that software competitiveness is inseparable from power delivery, cooling headroom, and what a facility can actually sustain at scale.

True success for Huawei’s CANN stack depends on production uptime and the quiet stability of daily traffic rather than high-profile announcements. Engineers need to see if Huawei can maintain consistent operator coverage and competitive cost per token without falling into the ‘slow paths’ that plague unoptimized hardware. When time to first token stays low even during peak demand, the industry will know the software moat around NVIDIA is finally being breached.

Infrastructure trust is earned through transparent benchmark configurations and debugging tools that provide deterministic failure analysis. As CANN moves toward open-source governance, the speed of bug fixes and operator updates will decide if Ascend becomes a routine choice for developers. The ultimate test remains simple: can the stack explain a failure without guesswork while keeping the user experience seamless across every driver update?

Expert FAQ: Key Insights into DeepSeek-V4, Huawei Ascend, and CANN Deployment vs. CUDA

How does DeepSeek-V4 optimize for Huawei Ascend?

DeepSeek-V4 utilizes Huawei’s CANN stack to align kernels and memory movement with Ascend NPUs, moving away from CUDA-centric assumptions to maximize hardware-specific bandwidth.

What is the significance of the CUDA exit for China?

A successful CUDA exit means China’s AI labs can train large models on domestic hardware like the Ascend 950 without relying on restricted NVIDIA software or hardware.

Can CANN handle distributed training as well as NCCL?

CANN uses HCCL (Huawei Collective Communication Library) to manage distributed training, employing topology-aware algorithms like Ring and Mesh to synchronize gradients across thousands of accelerators.

What role do FP8 and FP4 play in AI inference?

Low-precision formats like FP8 and MXFP4 reduce bandwidth pressure and power consumption, allowing for cheaper, faster inference and better “time to first token” during long-context prompts.

Is vLLM compatible with Huawei Ascend hardware?

Yes, vLLM’s Ascend backend support allows developers to deploy large language models on Ascend clusters using the same serving infrastructure common in CUDA environments.