OpenAI has upgraded its Agents SDK so that AI agents can execute complex workplace tasks within secure operational boundaries. The update introduces isolated execution environments and a model-native harness for coordinating long-running workflows. The aim is to turn enterprise tasks into auditable processes with a lower risk of unauthorized system access.

Industry requirements have shifted as AI agents evolve beyond simple chat interfaces that return a single response. In typical workplace environments, these systems perform several critical actions:

- Reviewing internal documents for accuracy

- Executing system commands and API calls

- Verifying results through cross-referencing

- Maintaining task continuity across sessions

Payroll managers reconciling complex spreadsheets and medical staffers organizing intake forms both require AI assistants that prioritize factual accuracy over speculative reasoning.

Quick Facts: OpenAI Agents SDK Sandbox Execution, Guardrails, and Tracing

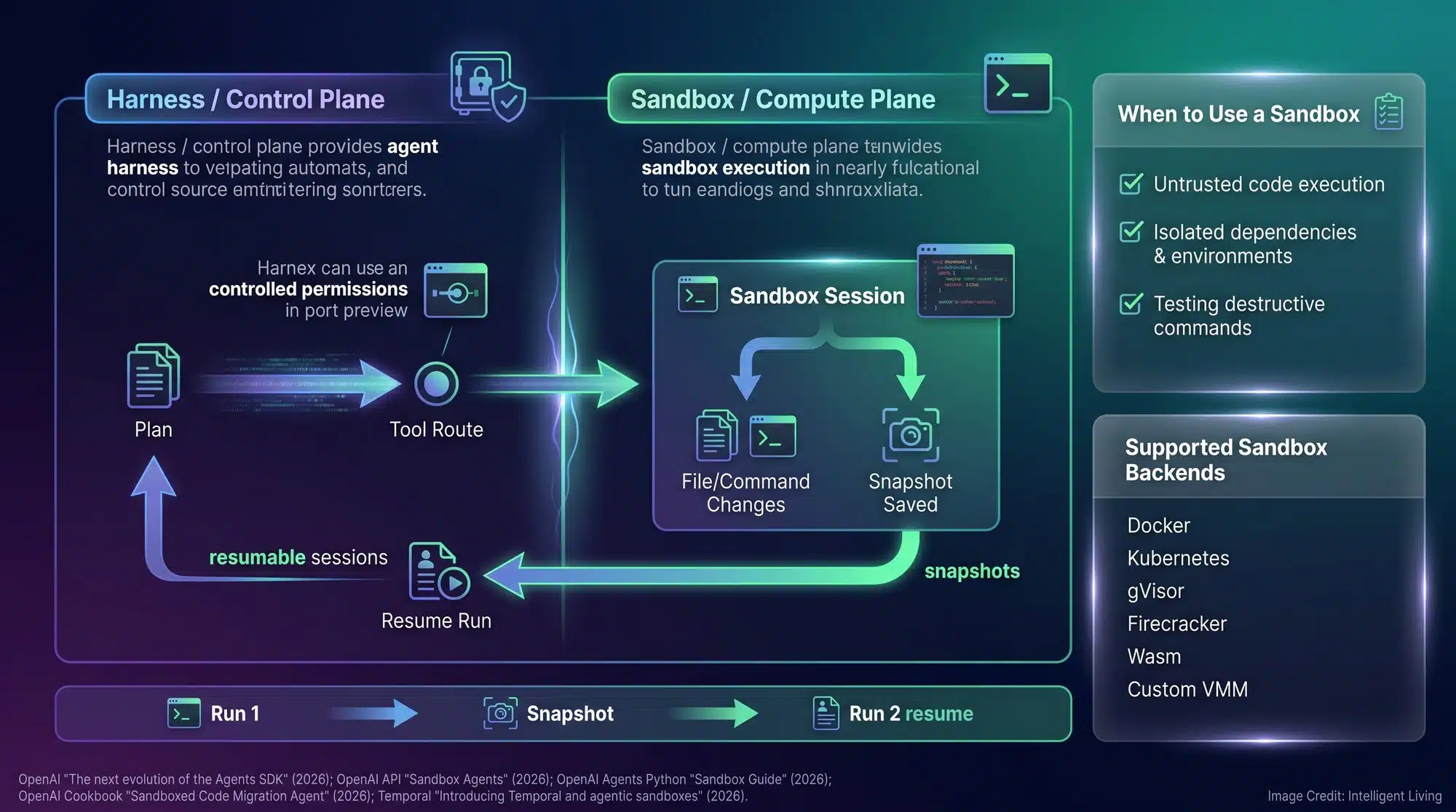

The latest SDK enhancements focus on infrastructure, enabling enterprise AI agents to manage sensitive files, credentials, and strict deadlines within a protected environment. The architecture rests on three functional requirements: isolating execution environments, enforcing tight permission scoping, and maintaining full traceability for every agent action.

- Sandboxed execution environments contain file and command workflows so mistakes do not spread beyond the approved workspace.

- A stronger agent harness supports long-horizon tasks without brittle glue code, helping the agent keep state across many steps.

- Tracing that records tool calls and handoffs creates an audit-ready timeline, useful when a run spans hours and the outcome needs an explanation.

- Approval gates can pause sensitive actions before they cause side effects, such as writing to a database or sending a message.

- A sandboxed code migration workflow shows a common pattern where orchestration stays outside the sandbox while commands and file edits remain isolated.

Taken together, these changes push agentic AI closer to the way serious software is deployed: least privilege, observable behavior, and a clean separation between planning and execution.

Inside the OpenAI Agents SDK Update: Harness Control and Sandbox Execution

Advancing Agent Orchestration: Resilience and Long-Horizon Task Support

The latest enhancement builds on OpenAI's earlier tools for autonomous agent development, which established orchestration as a primary developer pathway. OpenAI now emphasizes production-grade resilience, so that agents can survive system interruptions and tolerate untrusted inputs. Reliable enterprise systems must also generate verifiable evidence of their actions throughout the entire execution lifecycle.

Developers utilize a primary orchestration loop to coordinate planning and tool execution, maintaining progress until task completion or a required human intervention.

The agent harness is this control layer: it manages memory, tool calls, and handoffs during active sessions. Because orchestration is centralized in the harness, an agent can recover from an interruption without losing progress on a complex objective.
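The loop-and-recover idea can be sketched in a few lines of plain Python. This is an illustrative sketch only, not the SDK's API: `run_loop`, the state dictionary layout, and the tool names are all hypothetical, and the interruption is simulated with a step limit.

```python
import json
from typing import Callable

def run_loop(state: dict, tools: dict[str, Callable[[], str]], max_steps: int) -> dict:
    """Run pending steps in order; stop when none remain or the step limit is hit."""
    steps_run = 0
    while state["pending"] and steps_run < max_steps:
        step = state["pending"].pop(0)
        state["results"][step] = tools[step]()   # execute the tool for this step
        steps_run += 1
    state["status"] = "done" if not state["pending"] else "interrupted"
    return state

# Hypothetical tools standing in for real work.
tools = {
    "read_invoices": lambda: "3 invoices read",
    "match_payments": lambda: "3 payments matched",
    "draft_report": lambda: "report drafted",
}

state = {"pending": ["read_invoices", "match_payments", "draft_report"],
         "results": {}, "status": "running"}

# First session is cut short after two steps (simulated interruption).
state = run_loop(state, tools, max_steps=2)
checkpoint = json.dumps(state)   # a real system would persist this outside the process

# A later session resumes from the checkpoint instead of starting over.
resumed = run_loop(json.loads(checkpoint), tools, max_steps=10)
print(resumed["status"], list(resumed["results"]))
```

The point of the design is that all progress lives in one serializable state object, so resuming is just a matter of loading it and calling the same loop again.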

Understanding Sandbox Execution: Secure Isolation for High-Stakes Operations

Sandbox execution offers a straightforward but vital security safeguard: providing AI agents with a strictly isolated workspace for all operational activities.

Sandbox sessions that can pause and resume keep the agent’s working files and commands inside a sealed environment while allowing a workflow to stop for review and continue later. The agent can read approved files, run approved commands, and produce outputs, while the rest of the system stays protected.

Task isolation protects system integrity when workflows involve potential side effects. Accounting departments, for example, can use these environments to handle high-volume invoice processing against rigid deadlines. Agent actions remain confined to specific folders and pre-approved command sets, which reduces the risk of accidental data exfiltration or system-wide configuration errors.
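As a rough illustration of "specific folders and pre-approved command sets", the checks below reject paths that escape a workspace and commands that are not on an allowlist. The workspace path, command set, and function names are assumptions made for this sketch. It is an application-level check only; a real sandbox also relies on OS or container isolation.

```python
import shlex
from pathlib import Path

# Hypothetical workspace and allowlist; real values are a deployment decision.
WORKSPACE = Path("/tmp/agent_workspace").resolve()
ALLOWED_COMMANDS = {"ls", "cat", "python3"}

def path_allowed(candidate: str) -> bool:
    """True only if the path, after resolving '..' and symlinks, stays in WORKSPACE."""
    resolved = (WORKSPACE / candidate).resolve()
    return resolved == WORKSPACE or WORKSPACE in resolved.parents

def command_allowed(command_line: str) -> bool:
    """True only if the first token of the command is on the allowlist."""
    parts = shlex.split(command_line)
    return bool(parts) and parts[0] in ALLOWED_COMMANDS

print(path_allowed("invoices/jan.csv"))   # True
print(path_allowed("../etc/passwd"))      # False
print(command_allowed("ls -la"))          # True
print(command_allowed("rm -rf /"))        # False
```

Commands that pass the check should still be executed without a shell (for example as an argument list), so that shell metacharacters cannot smuggle in a second command.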

Sandboxing also makes it easier to test agent behavior in a repeatable way. When a team can replay the same setup and confirm the same outcome, it is easier to spot drift, permission creep, or a workflow that quietly started doing more than it should.

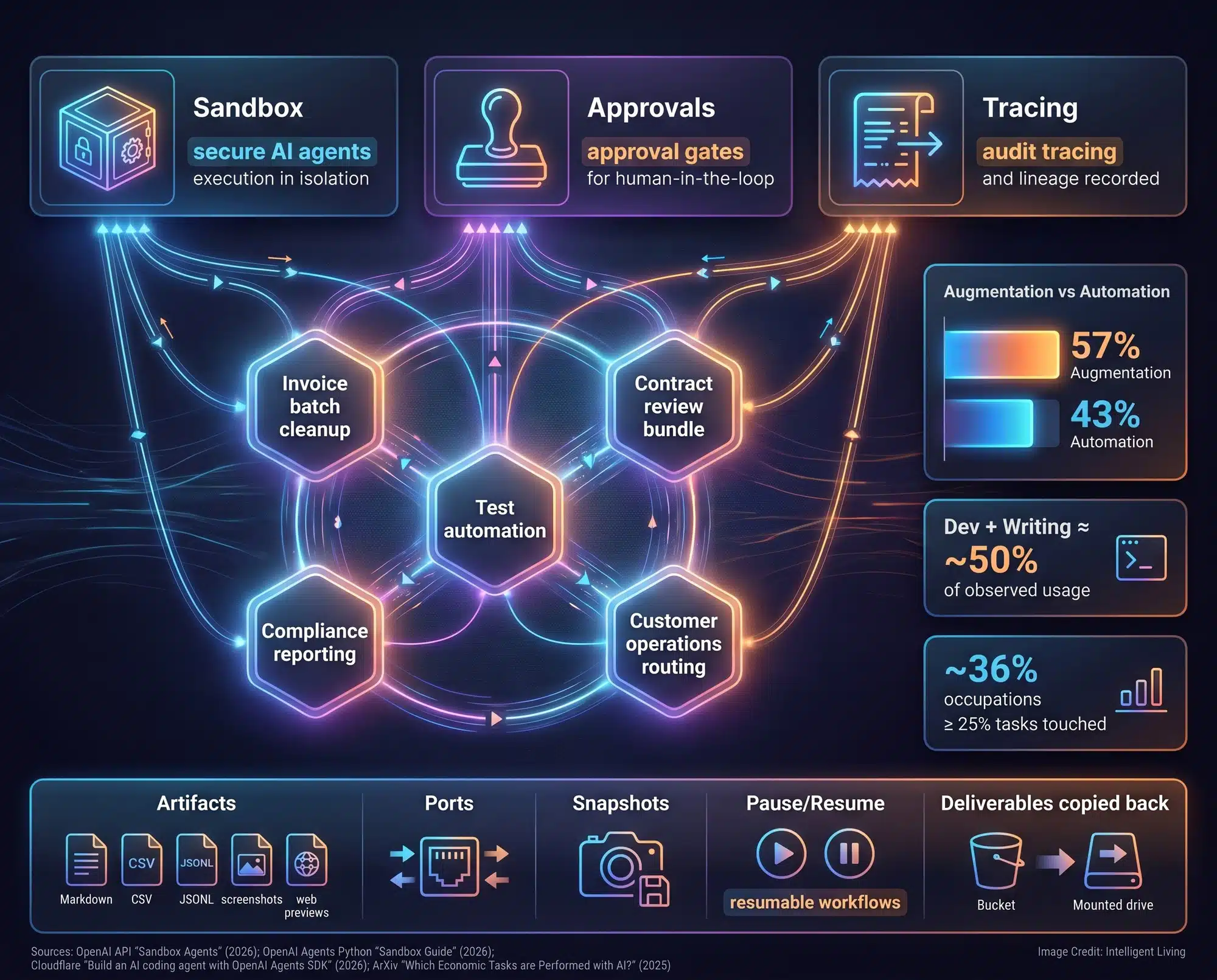

Enterprise Governance: Implementing Input Guardrails, Action Approvals, and Audit Tracing

Granular control mechanisms form the bedrock of corporate AI integration, carrying more weight than raw processing power in production settings.

Risk Mitigation Strategies: Mandatory Approval Gates for System-Level Changes

Approval gates for side-effect actions separate harmless reasoning from steps that change systems. Guardrails can validate inputs and outputs, such as blocking unsafe content or preventing certain tool calls. Approvals add a deliberate pause before actions with real consequences, like sending messages, writing to a database, or modifying files.

This split mirrors established operational practice. A finance team might allow the agent to read invoices and draft a reconciliation report, then require approval before anything is posted to a ledger. A software team might let an agent run tests and propose a patch, then require a human sign-off before changes are merged.
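The finance example can be expressed as a small gate in front of side-effect actions. This is a minimal sketch under stated assumptions, not the SDK's approval mechanism: `execute`, `SIDE_EFFECT_ACTIONS`, and the approver callbacks are hypothetical names, and the approver stands in for a human decision.

```python
from typing import Callable

# Hypothetical action names; which actions count as side effects is a policy choice.
SIDE_EFFECT_ACTIONS = {"post_to_ledger", "merge_patch"}

def execute(action: str, handler: Callable[[], str],
            approver: Callable[[str], bool]) -> str:
    """Run read-only actions directly; ask the approver before any side effect."""
    if action in SIDE_EFFECT_ACTIONS and not approver(action):
        return f"{action}: held for approval"
    return f"{action}: {handler()}"

ledger: list[str] = []

def post_to_ledger() -> str:
    ledger.append("reconciliation entry")   # the side effect being gated
    return "posted"

deny = lambda action: False    # stand-in for a reviewer who has not approved yet
allow = lambda action: True    # stand-in for a reviewer who approved

print(execute("draft_report", lambda: "drafted", deny))   # draft_report: drafted
print(execute("post_to_ledger", post_to_ledger, deny))    # post_to_ledger: held for approval
print(execute("post_to_ledger", post_to_ledger, allow))   # post_to_ledger: posted
```

Note that the denied call never runs the handler, so the ledger is only written once, after approval.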

Auditable Execution: Transforming Trace Logs into Verifiable Compliance Data

Operational transparency acts as a vital foundation for the SDK update, translating abstract agent behaviors into concrete, verifiable data trails. This infrastructure provides the necessary visibility for high-stakes enterprise deployments.

Comprehensive traces log every tool call and workflow handoff, creating a chronological timeline of specific events. This record shows which tools were used and exactly when a session triggered a safeguard.

Strict compliance environments prioritize auditable automation to prevent AI deployments from becoming organizational liabilities. When questions show up later, the trace helps answer them with concrete events rather than vague guesses.
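To make the idea of an audit-ready timeline concrete, here is a minimal sketch of an append-only trace. The `Trace` and `TraceEvent` classes and the event kinds are assumptions for illustration and do not reflect the SDK's actual trace format; a production trace would also carry timestamps and run identifiers.

```python
import json
from dataclasses import asdict, dataclass, field

@dataclass
class TraceEvent:
    seq: int          # position in the timeline, starting at 1
    kind: str         # e.g. "tool_call", "handoff", "guardrail"
    name: str
    detail: str = ""

@dataclass
class Trace:
    events: list[TraceEvent] = field(default_factory=list)

    def record(self, kind: str, name: str, detail: str = "") -> None:
        """Append an event; sequence numbers preserve the order of occurrence."""
        self.events.append(TraceEvent(len(self.events) + 1, kind, name, detail))

    def to_jsonl(self) -> str:
        """Serialize the timeline as one JSON object per line."""
        return "\n".join(json.dumps(asdict(e)) for e in self.events)

trace = Trace()
trace.record("tool_call", "read_invoices", "3 files")
trace.record("handoff", "reconciliation_agent")
trace.record("guardrail", "block_external_email", "tripped")
print(trace.to_jsonl())
```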

Vulnerability Management: Defending Against Prompt Injection and Unauthorized Access

Research into prompt injection vulnerabilities in agentic systems shows why strict permission scoping and manual approvals are necessary to mitigate risk in conversational automation. Connecting agents to external tools amplifies these vulnerabilities, because a single malicious instruction can escalate into unauthorized system-level changes or data access.

Secure deployment requires proactive safety measures. Built-in safety protocols for tool-using agents focus on limiting system access, requiring confirmation for high-stakes actions, and maintaining exhaustive logs.
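One way to picture "limiting system access" and "maintaining exhaustive logs" together is a toolbox that grants only pre-scoped tools and records every request, granted or not. The `ScopedToolbox` class and the tool names are hypothetical and exist only for this sketch.

```python
from dataclasses import dataclass, field

@dataclass
class ScopedToolbox:
    allowed: frozenset                      # tools this agent may use
    log: list = field(default_factory=list)

    def request(self, tool: str, source: str) -> bool:
        """Grant the tool only if it is in scope; log every request either way."""
        granted = tool in self.allowed
        self.log.append((source, tool, "granted" if granted else "denied"))
        return granted

toolbox = ScopedToolbox(allowed=frozenset({"read_file", "summarize"}))

# A legitimate step requested by the task plan.
toolbox.request("read_file", source="task_plan")

# An instruction injected into a document asks for a tool outside the scope.
toolbox.request("send_email", source="document_text")

for entry in toolbox.log:
    print(entry)
```

The injected request fails not because the text was recognized as malicious, but because the tool was never in scope, which is what keeps the blast radius small.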

Quantifying Reliability: Structured Trace Grading for Performance Benchmarking

For organizations that want to quantify reliability, trace grading that scores an end-to-end agent run turns raw logs into structured checks teams can track over time. It is the difference between saying an agent seems stable and proving that it stays stable across repeated runs.
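A hedged sketch of what grading a run might look like: the function below turns a list of trace events into named pass/fail checks and a single score. The event shape (dictionaries with `kind` and `name`), the three checks, and `grade_trace` itself are assumptions for illustration, not the SDK's grading feature.

```python
def grade_trace(events: list[dict], side_effect_tools: set[str],
                max_tool_calls: int) -> dict:
    """Score one run: each check is pass/fail, score is the fraction that passed."""
    approved: set[str] = set()
    unapproved_side_effects = 0
    tool_calls = 0
    guardrail_trips = 0
    for event in events:
        if event["kind"] == "approval":
            approved.add(event["name"])
        elif event["kind"] == "tool_call":
            tool_calls += 1
            if event["name"] in side_effect_tools and event["name"] not in approved:
                unapproved_side_effects += 1
        elif event["kind"] == "guardrail":
            guardrail_trips += 1
    checks = {
        "side_effects_approved": unapproved_side_effects == 0,
        "no_guardrail_trips": guardrail_trips == 0,
        "within_tool_budget": tool_calls <= max_tool_calls,
    }
    return {"checks": checks, "score": sum(checks.values()) / len(checks)}

good_run = [
    {"kind": "tool_call", "name": "read_invoices"},
    {"kind": "approval", "name": "post_to_ledger"},
    {"kind": "tool_call", "name": "post_to_ledger"},
]
bad_run = [
    {"kind": "tool_call", "name": "read_invoices"},
    {"kind": "tool_call", "name": "post_to_ledger"},   # no approval recorded first
]

print(grade_trace(good_run, {"post_to_ledger"}, max_tool_calls=5)["score"])   # 1.0
print(grade_trace(bad_run, {"post_to_ledger"}, max_tool_calls=5)["checks"])
```

Running the same grader over many runs gives a number that can be tracked over time, which is the difference the paragraph above draws between "seems stable" and "stays stable".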

Developers can also use proof-style structured prompts to turn code reviews into verifiable evidence, separating speculative explanations from confirmed execution steps.

Strategic Impact: Regulated Industries and DevOps Teams Leading AI Adoption

Regulated departments and technical teams currently automating knowledge work stand to benefit most, particularly where complex documents, sensitive code, and strict compliance intersect.

Compliance-Driven Workflows: Audit Trails for Finance, Legal, and Healthcare

Healthcare operations, finance, and legal workflows are natural fits because they are document-heavy and accountability-heavy. When an agent can summarize records or draft standardized reports, the value is real, but so is the risk. Sandboxing and traceability help these teams keep automation inside acceptable controls.

Production-Ready DevOps: Secure Test Automation and Deployment Guardrails

For software teams running tests and business operations teams processing routine paperwork, the SDK's enterprise-scale deployment capabilities position the update as a major move toward production-ready business agents. Industry analysis supports this framing, indicating that the safest agents are those built for scalability and auditability.

These enhancements address recurring vulnerabilities identified in recent security incidents, where unmanaged automation multiplied risks during dependency or permission failures. Documented security risks in automated coding supply chains illustrate how compromised components can move through pipelines with unprecedented speed. Recent instances involving malicious package releases targeting enterprise dependencies highlight why credential hygiene and dependency pinning are non-negotiable.

Similar guardrails apply beyond coding; vulnerabilities in trusted CI/CD scanning tools demonstrate how implicit trust assumptions can break in unexpected areas.
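The dependency pinning point above lends itself to a mechanical check. The sketch below flags lines in a pip-style requirements file that are not pinned to an exact version with `==`. It is deliberately simplified: the `unpinned_requirements` helper is hypothetical, and lines with environment markers, hashes, or URLs would also be flagged rather than parsed.

```python
import re

# Matches "name==version" with an optional extras group, e.g. "pkg[extra]==1.2.3".
PINNED = re.compile(r"^[A-Za-z0-9_.\-]+(\[[A-Za-z0-9_,.\-]+\])?==[A-Za-z0-9_.!+\-]+$")

def unpinned_requirements(text: str) -> list[str]:
    """Return requirement lines that are not pinned to one exact version."""
    unpinned = []
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()   # drop comments and whitespace
        if line and not PINNED.match(line):
            unpinned.append(line)
    return unpinned

sample = """
requests==2.31.0
# internal tooling
numpy>=1.26
flask
"""
print(unpinned_requirements(sample))   # ['numpy>=1.26', 'flask']
```

A check like this could run inside the sandbox before an agent installs anything, so that an unpinned dependency is caught as a failed check rather than discovered after the fact.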

Scalable Business Operations: Implementing Bounded Automation for Internal Workflows

Operational departments and growing enterprises utilize AI workflows to automate high-volume intake processing, performance reporting, and customer engagement. These systems provide a scalable foundation for modern business automation.

Secure sandboxing allows teams to deploy automated workflows with far less risk of an isolated error affecting broader organizational systems. This containment strategy helps ensure that early experimentation does not compromise data integrity or system stability.

Long-running agents introduce another pressure point: memory. Data governance challenges in persistent AI memory underline the need for robust audit logs, because agents may retain sensitive data across multiple sessions.

Operational Use Cases: Integrating Secure AI Agents into Everyday Business Workflows

As agentic AI moves from experiments to everyday tools, the most common deployments will focus on repeatable workflows and narrow permissions. Rising regulatory and governance standards for agentic ecosystems explain why permission limits and auditability are becoming essential for scaling AI safely.

Productivity gains will manifest most clearly in back-office operations, where automating repetitive processing tasks generates measurable time savings and operational efficiency.

- Contained document processing for contracts, invoices, and internal reports, with access restricted to a specific workspace.

- Automated testing and code checks that run inside isolated environments before changes touch production systems.

- Compliance evidence capture through trace logs that show exactly what actions occurred and in what order.

- Approval-gated actions for any step that could send data outward or modify sensitive records.

- Scoped tool connections that limit what an agent can do across apps, bounded by MCP (Model Context Protocol) permissions and connector boundaries.

Safety infrastructure functions as a performance enabler rather than a restriction. Automated workflows accelerate when verification steps are standardized, and comprehensive tracing allows human operators to pinpoint the exact moment of any execution variance.

The Future of Verifiable AI: Establishing Trust-by-Design in Corporate Agent Ecosystems

The OpenAI Agents SDK update establishes a new benchmark for production-grade agent ecosystems by prioritizing risk containment and verifiable oversight. Strategic frameworks for AI transparency and accountability clarify why user control and visibility directly influence the long-term success of automated tools.

As agentic workflow patterns converge across the industry, comparative research into enterprise-scale agent orchestration models indicates that the most reliable systems operate under strict permission scoping and transparent oversight. These two properties are increasingly treated as the baseline for enterprise-grade safety.

Essential FAQ: Understanding the OpenAI Agents SDK Update

How does sandbox execution improve AI agent safety?

Sandbox execution isolates an agent’s files and commands within a sealed environment, preventing errors from affecting external systems or data.

What is the role of the model-native agent harness?

The agent harness acts as a control layer that manages memory and tool calls, allowing agents to maintain state during long-running tasks.

How do approval gates work in the OpenAI SDK?

Approval gates create a human-in-the-loop checkpoint, requiring manual verification before an agent performs high-stakes actions like modifying databases.

What data is captured in OpenAI agent tracing?

Tracing captures a chronological record of tool calls, model interactions, and handoffs, ensuring full transparency for organizational compliance audits.

Can the Agents SDK prevent prompt injection?

While no system is immune, the SDK’s permission scoping and guardrails significantly reduce the “blast radius” and impact of potential injection attacks.