If the cloud feels like an invisible digital layer, the monthly electricity bill serves as a sharp reminder of the physical world. As high-compute AI platforms and generative video tools go mainstream, the sprawling server clusters powering these models are siphoning vast amounts of power from local grids—often in unsuspecting rural communities. This surge in compute intensity is forcing a major federal pivot, as regulators realize that local grid infrastructure must adapt to a new era of industrial-scale digital demand.

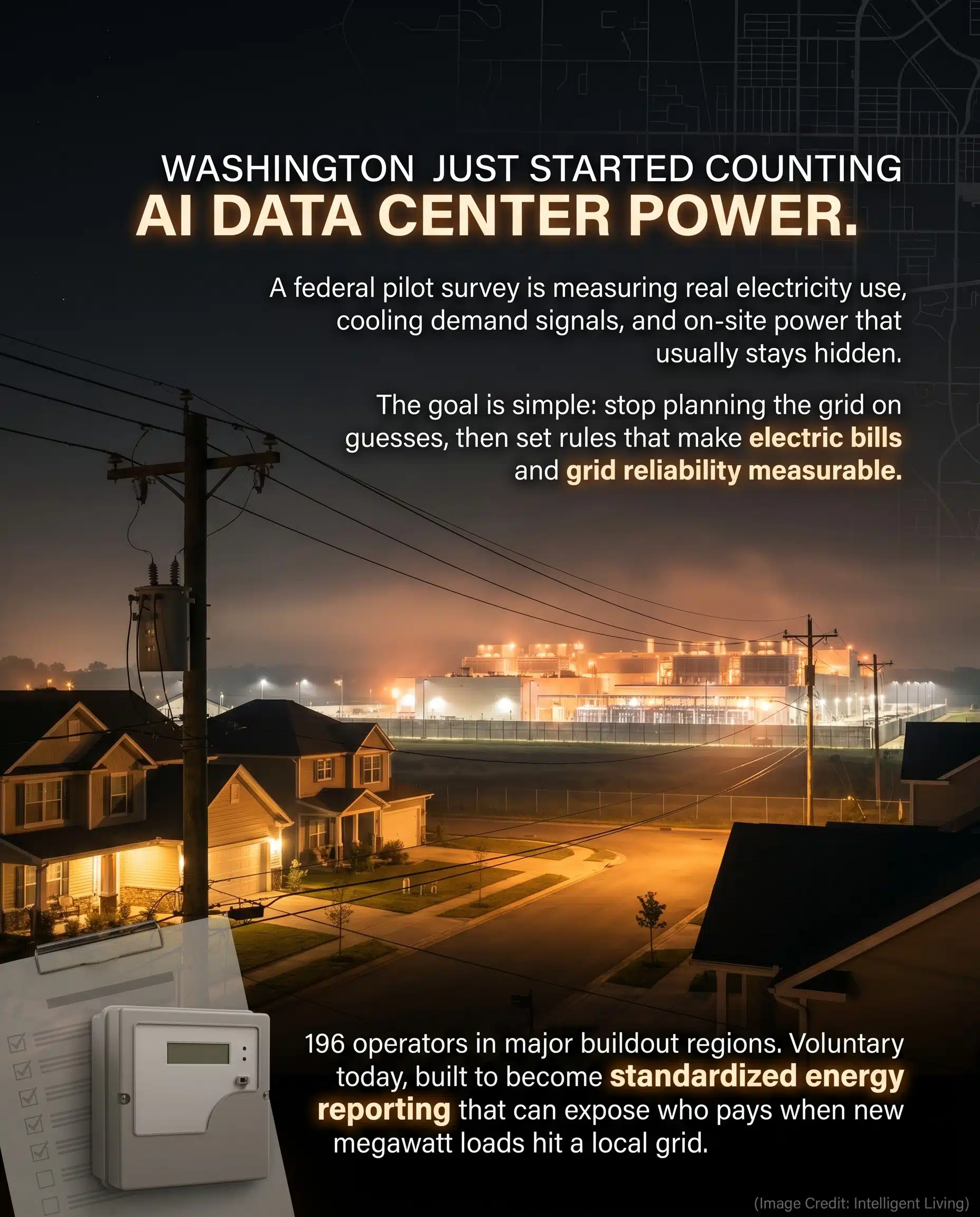

State regulators are scrambling to align rapid AI expansion with affordable energy rates, treating grid stability as a critical economic safeguard. The new federal data center power assessment represents a straightforward shift toward transparency. Instead of relying on scattered estimates, federal agencies are moving to measure what these facilities actually consume so they can build fair rules based on hard data. Balancing the rapid growth of artificial intelligence with stable energy prices for residents is now a primary focus for planners from Augusta to Washington, D.C.

Tracking AI Data Center Power: The EIA Energy Reporting Roadmap

The Launch of Federal Data Center Power Pilot Studies

State-level energy experts are watching closely as the U.S. Energy Information Administration rolls out three voluntary pilot studies targeting high-growth zones in Texas, Washington State, and the Northern Virginia corridor, including Washington, D.C. The pilots reach out to 196 companies to measure site-level electricity demand and cooling efficiency, data that can help explain why a facility’s power draw fluctuates or remains constant.

Federal officials aren’t making a political statement here. The agency operates under a mandate to produce policy-independent energy statistics, so the goal is to gather reliable metrics before anyone proposes new, data-driven industry standards.

The path forward is clear. Senators Warren and Hawley describe these initial tests as a stepping stone toward standardized national energy reporting once the trial period ends.

Quick Facts: EIA Data Center Energy Survey Timeline and Maine 20-Megawatt Pause

Distinguishing current voluntary trials from future mandatory rules clarifies why state-level bans can happen alongside federal data collection. The overall pattern is simple: data centers are becoming large, predictable electricity users, and both regulators and communities want numbers that are comparable across projects.

- The pilots began March 25, 2026, and focus on three high-growth regions: Texas, Washington State, and Northern Virginia plus Washington, D.C.

- The initial outreach targets 196 companies, with reporting requested for at least one facility per company.

- Data fields include electricity consumption, energy sources, server-related metrics, and cooling system details.

- The pilots are voluntary; a broader reporting framework is expected to be developed once the pilot period ends.

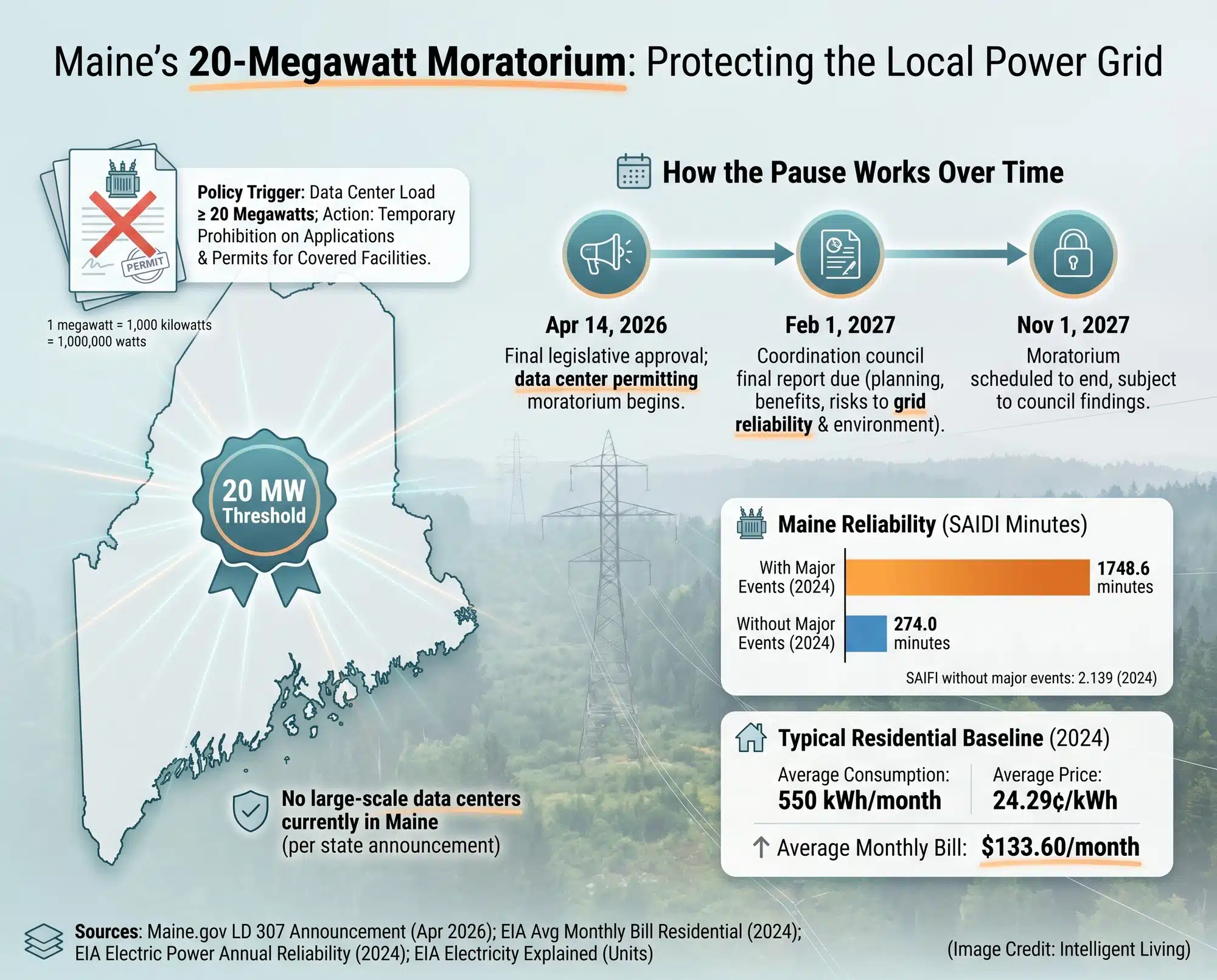

- Maine moved to pause permitting for new facilities at or above a 20-megawatt load threshold while state impact studies are completed.

- The pilots are designed to create a standardized baseline so utilities and regulators can compare loads that otherwise get described in incompatible ways.

Taken together, these points explain why “AI data center electricity use” has become a kitchen-table topic in places far from Silicon Valley. They also help readers track the timeline without confusing voluntary surveys with future mandatory reporting.

What the EIA Wants to Measure and Why the Grid Cares

Transparency in Tech: What Federal Agencies Are Tracking at Data Sites

Grid failures aren’t caused by averages; they happen during sudden, localized peaks. Federal surveyors are now dissecting the exact drivers of demand: energy sources, server density, and the cooling systems running 24/7.

The Power Measurement Checklist: Tracking Servers, Cooling, and Local Supply

These surveys map a facility’s precise energy footprint. While raw consumption provides a starting point, details like server density reveal why two twin buildings can place vastly different loads on local substations. Utility planners rely on this data to decide if a new campus will force expensive transformer swaps, new substations, or multi-year delays for surrounding neighbors.
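To see why server density matters, consider a rough sketch of two halls with the same number of racks. The rack counts and per-rack power figures below are hypothetical, chosen only for illustration, and do not come from the EIA pilots.

```python
# Hypothetical figures for illustration only; not taken from the EIA pilots.

def it_load_mw(racks: int, kw_per_rack: float) -> float:
    """Estimate IT load in megawatts from rack count and average kW per rack."""
    return racks * kw_per_rack / 1000.0


# Two "twin" buildings with the same footprint and rack count.
conventional = it_load_mw(racks=1000, kw_per_rack=8.0)   # assumed general-purpose servers
ai_dense = it_load_mw(racks=1000, kw_per_rack=40.0)      # assumed accelerator-heavy racks

print(f"Conventional hall: {conventional:.1f} MW")
print(f"AI-dense hall:     {ai_dense:.1f} MW")
```

Under these assumed numbers, the denser hall draws five times the power from the same floor space, which is the kind of gap a substation planner needs to know about in advance.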

Measuring Hidden Power: How Behind-the-Meter Supply Impacts Local Grids

The pilots also probe how much power is produced on-site instead of flowing through the local utility meter.

Planners need to know exactly how much energy skips the grid entirely. This hidden supply, known as behind-the-meter power generation, often includes:

- On-site solar arrays that offset daytime peaks.

- Massive battery systems designed for instant backup.

- Industrial generators that kick in during regional outages.

Understanding these internal power sources helps local utilities predict how a facility will behave when the rest of the neighborhood is under strain. Community members often notice these massive loads only when they hear the roar of industrial backup generators during a local outage, revealing the heavy infrastructure hidden behind the server racks.

Server Utilization Simplified: Connecting Computing Intensity to Local Energy Use

Don’t let technical terms like ‘server utilization’ confuse you. It’s simply the difference between a data center that sips power like a quiet grocery store and one that gulps energy like a packed stadium on game day.

Cooling and water choices also reshape energy overhead, because sustainable thermal management often comes down to how heat is moved, where evaporation happens, and whether the location is already water-stressed.
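One simplified way to picture both effects is a linear power model for a server plus a multiplier for facility overhead. The sketch below assumes power rises in a straight line between idle and peak, which is only an approximation, and it uses power usage effectiveness (PUE), a common industry ratio of total facility power to IT power that this article does not otherwise cover. All wattages and PUE values are hypothetical.

```python
def server_power_w(utilization: float, idle_w: float, peak_w: float) -> float:
    """Linear approximation: power rises from idle_w to peak_w as utilization goes 0 -> 1."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return idle_w + (peak_w - idle_w) * utilization


def facility_power_w(it_power_w: float, pue: float) -> float:
    """Total facility draw = IT power x PUE (PUE cannot be below 1.0)."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_power_w * pue


# Hypothetical server: 200 W idle, 1000 W at full load.
quiet = server_power_w(0.2, idle_w=200.0, peak_w=1000.0)   # "grocery store" load
busy = server_power_w(0.9, idle_w=200.0, peak_w=1000.0)    # "game day" load

# The same busy server under two assumed cooling overheads.
for pue in (1.2, 1.5):
    print(f"PUE {pue}: {facility_power_w(busy, pue):.0f} W at the meter")
```

The point of the sketch is that utilization changes what the servers draw, while cooling design changes how much extra the building draws on top of that.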

Grid Reliability and Your Wallet: Connecting Data Centers to Monthly Utility Costs

Large data centers behave like industrial loads that do not sleep. Accelerating load growth on U.S. grids has pushed data center policy out of tech headlines and technical circles and into the public utility planning sessions where ratepayer costs are decided.

Ratepayer Protection: How Large Power Loads Impact Local Utility Pricing

Standard grid maintenance cannot account for the sudden arrival of a 20-megawatt campus. These projects transform routine utility planning into high-stakes negotiations over infrastructure funding and local rate hikes. That is why standardized reporting has become a fairness tool. When loads are measured consistently, regulators can separate legitimate infrastructure needs from vague fear, and communities can negotiate timelines and contributions using comparable numbers.

Global AI Trends and Local Grid Impact: Forecasting Future Power Demands

The global trajectory matters, too, because AI infrastructure is not a local fad. The latest global energy demand forecasts predict a surge in power needs that turns technical engineering puzzles into urgent public policy debates.

Forecasting alters local permitting strategy. A town expecting a wave of future proposals will scrutinize an initial project’s grid impact much more strictly than if it were an isolated build.

Reducing Grid Strain: How Chip Efficiency and Software Optimization Help

Efficiency is the invisible hero of grid stability. When new chip architectures or compressed algorithms cut the energy needed for each calculation, the strain on local utilities eases without anyone noticing. Industry leaders are pivoting toward lower-precision computing, using smaller data formats to squeeze more performance out of every watt with little or no loss of accuracy.

Where the Legislative Record Matters

A moratorium lives or dies on its wording. The Maine legislative record captures the text, amendments, and status changes that determine what counts as a covered facility and how exceptions, if any, are defined.

Maine’s 20-Megawatt Moratorium: Protecting the Local Power Grid

Why Maine Hit Pause on 20-Megawatt Facilities

The statewide pause on new data centers of 20 megawatts or more pushed the issue into the open: slow down the biggest new facilities long enough to understand grid impacts before permits become automatic.

Understanding the Moratorium: Load Thresholds and Key Implementation Dates

Rules for this temporary permit freeze are detailed in the state’s pause-and-study framework. It stops all permits for facilities pulling 20 megawatts or more until at least late 2027.

Collaborative Oversight: The Role of Maine’s Data Center Coordination Council

The same framework calls for a coordination council and a report back to lawmakers by Feb. 1, 2027, aimed at weighing potential benefits against ratepayer and grid risks. In plain terms, it is a structured pause that forces planning to happen before approvals become routine.

The Future of AI Infrastructure: Trends and Reporting Rules to Watch

The near-term outcome is better visibility. Once data is standardized, grid planners can model demand more accurately, regulators can compare projects on similar terms, and communities can negotiate with numbers instead of guesses.

National Energy Disclosures: Moving from Voluntary Pilots to Mandatory Reporting

Federal agencies are working toward a national reporting standard that would move from voluntary pilots to mandatory disclosures. Such a framework would give grid operators the precise data they need to safeguard regional energy reliability.

Sustainable Tech Solutions: Using Cooling and Water Management to Limit Grid Stress

Cooling is a major pressure point for local grids. Operators are responding with high-efficiency cooling strategies that cut water and power waste:

- Closed-loop designs that recycle water.

- Liquid cooling that moves heat more efficiently than air.

- Heat reuse systems that warm nearby buildings.

- Reporting practices that make these environmental tradeoffs visible to the public.

Smart Infrastructure: Carbon-Aware Scheduling and Local Heat Integration

Another lever is timing. As operators adopt carbon-aware computing, heavy workloads can be shifted toward cleaner hours or cleaner regions, reducing peak strain without changing the services people rely on.
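As a minimal sketch of the idea, a scheduler could look at an hourly forecast of grid carbon intensity and start a flexible job in the cleanest contiguous window. The forecast values (in grams of CO2 per kWh) and the function name `best_start_hour` are hypothetical; real operators would pull forecasts from their utility or a grid data provider and weigh other constraints as well.

```python
def best_start_hour(forecast: list[float], duration_hours: int) -> int:
    """Return the start index of the contiguous window with the lowest total carbon intensity."""
    if duration_hours <= 0 or duration_hours > len(forecast):
        raise ValueError("duration_hours must be between 1 and len(forecast)")

    # Sliding window: reuse the previous total instead of re-summing each window.
    window_total = sum(forecast[:duration_hours])
    best_total, best_start = window_total, 0
    for start in range(1, len(forecast) - duration_hours + 1):
        window_total += forecast[start + duration_hours - 1] - forecast[start - 1]
        if window_total < best_total:
            best_total, best_start = window_total, start
    return best_start


# Hypothetical 12-hour forecast of grid carbon intensity (gCO2/kWh).
forecast = [450, 430, 400, 380, 300, 220, 180, 200, 310, 420, 480, 500]

start = best_start_hour(forecast, duration_hours=3)
print(f"Start the 3-hour job at hour {start}")
```

A production scheduler would also need to respect deadlines and capacity limits; this sketch only shows the core choice of when to run.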

Grid failures feel personal during a heat wave. When data centers gulp extra power for cooling, they can push the grid to its breaking point just as families are turning up their own air conditioning. Some operators pick unusual sites that reduce cooling demand, or build city-scale setups where data center waste heat supports district heating. On the engineering side, advancements in immersion cooling technology aim to move heat more efficiently than traditional air-based systems.

The New Era of AI Energy Accountability and Grid Transparency

We are moving past the era of guesswork. Federal and state leaders are beginning to ask data centers to document their energy use through standardized reporting. As more communities begin to treat data centers like high-intensity industrial loads with real grid impacts, the conversation is moving away from tech hype and toward the practical realities of engineering, substation capacity, and reliability guarantees. Standardizing these numbers allows local utilities to plan for growth without forcing families to shoulder the financial burden of massive infrastructure upgrades.

In the coming years, the location of computing will likely matter just as much as the code itself. Some regions are already finding that edge AI processing, which runs workloads closer to users, can cut cloud energy use by drawing on local grid capacity instead. Whether through waste heat reuse or carbon-aware workload scheduling, the industry is entering an era where energy efficiency is no longer a back-end detail; it is the foundation of how AI will scale in our communities.

Frequently Asked Questions About AI Power Use and Energy Costs

Are the EIA Data Center Surveys Mandatory Right Now?

No. The current effort is a voluntary pilot designed to establish a baseline for measurement before any broader national reporting rules are finalized.

What Information is the Government Tracking in These Pilots?

The EIA is gathering data on total electricity consumption, specific energy sources, server density metrics, and the cooling systems that shape local load patterns.

What Does a 20-Megawatt Load Mean for Local Communities?

A 20-megawatt facility represents a large, steady draw that often requires expensive upgrades to local transformers and substations.
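For a sense of scale, the arithmetic can be sketched in a few lines. The calculation assumes the facility draws its full 20 megawatts around the clock, and the household figure of 10,500 kWh per year is a rough assumption used only for comparison, not a number from the pilots or the Maine framework.

```python
HOURS_PER_YEAR = 8760                 # 365 days x 24 hours
ASSUMED_HOME_KWH_PER_YEAR = 10_500    # assumption: rough annual use of one U.S. household


def annual_energy_mwh(load_mw: float, load_factor: float = 1.0) -> float:
    """Annual energy in MWh for a load running at the given fraction of its rating."""
    return load_mw * load_factor * HOURS_PER_YEAR


def equivalent_homes(annual_mwh: float,
                     home_kwh_per_year: float = ASSUMED_HOME_KWH_PER_YEAR) -> float:
    """Convert annual MWh into a number of households at the assumed usage."""
    return annual_mwh * 1000 / home_kwh_per_year


energy = annual_energy_mwh(20.0)      # 20 MW running continuously
homes = equivalent_homes(energy)

print(f"{energy:,.0f} MWh per year, roughly {homes:,.0f} homes at the assumed usage")
```

Lowering `load_factor` shows how the total shrinks if the facility runs below its rated load for part of the year.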

Could a new data center in my town make my electricity bill go up?

Not necessarily. The risk of price hikes is highest when grid upgrades are rushed; standardized reporting helps regulators allocate costs fairly between tech companies and residents.

How Can Data Centers Reduce Their Impact on the Power Grid?

Efficiency improvements like liquid cooling, heat reuse, and carbon-aware scheduling allow operators to perform more computing tasks with significantly less power overhead.